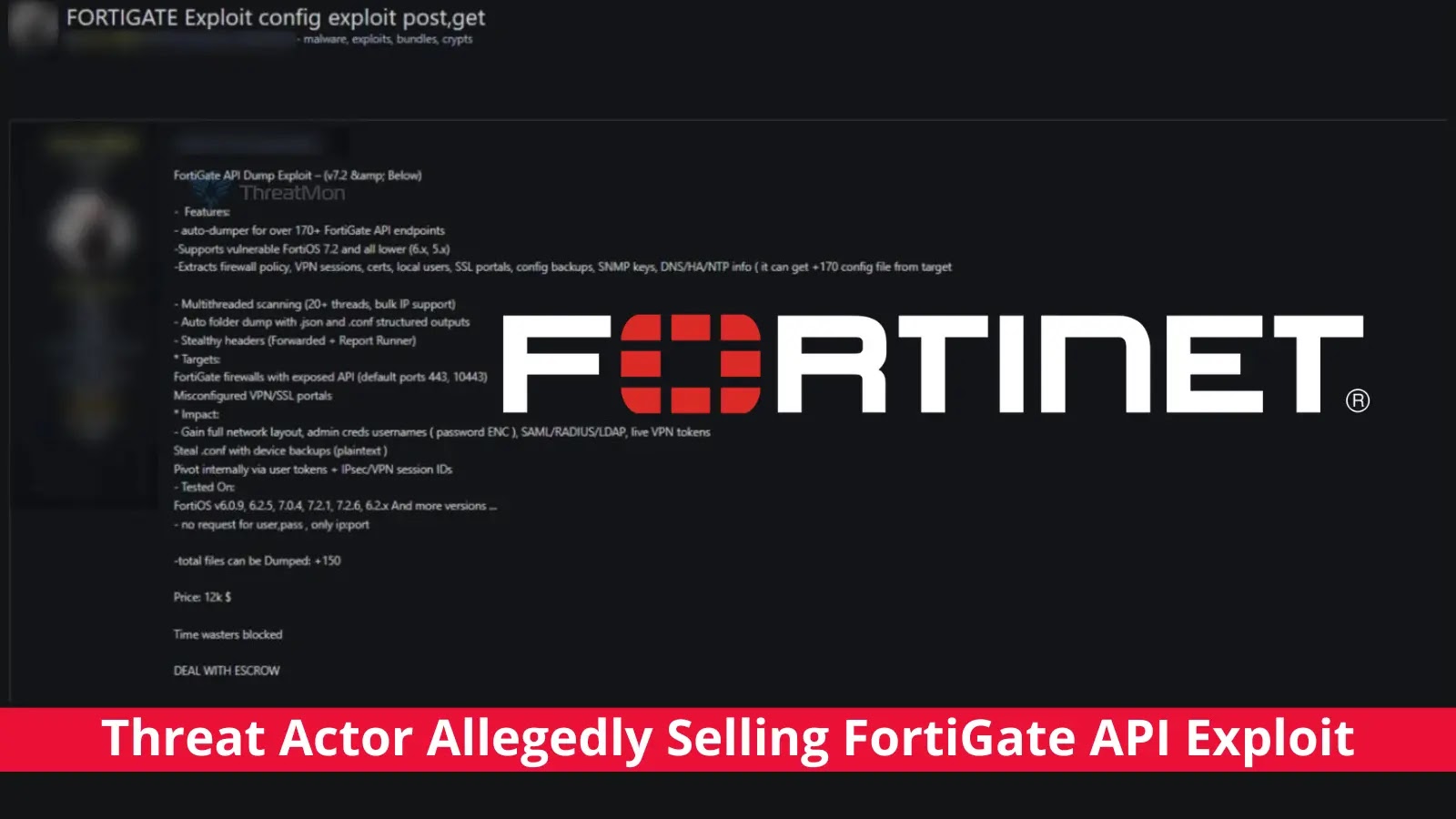

OpenAI has officially confirmed that a ChatGPT account linked to an individual associated with Chinese law enforcement was used to plan and document large-scale covert online influence and harassment campaigns.

The revelation, published in OpenAI’s February 2026 threat disruption report, marks one of the most detailed public disclosures of how AI tools are being weaponized by state-linked actors to carry out coordinated influence operations and targeted harassment against dissidents, foreign officials, and critics of the Chinese Communist Party (CCP).

The operation, which OpenAI refers to as "Cyber Special Operations" after the Chinese term used in the threat actor's own status reports, represents a systematic and resource-intensive effort to suppress free speech, manipulate public opinion, and silence opposition both inside China and around the world.

The ChatGPT account was primarily used to edit and polish periodic status updates on ongoing campaigns, giving investigators a rare window into the inner workings of a Chinese state-affiliated disinformation machine.


The operations reportedly spanned more than 300 foreign social media platforms, involved thousands of fake accounts, and were carried out by hundreds of human operators spread across multiple provinces in China.

OpenAI analysts identified a particularly alarming planning session in mid-October 2025, when the ChatGPT user asked the model to help design a covert influence campaign targeting Japanese politician Sanae Takaichi, who has since become Japan's first female prime minister, after she publicly criticized human rights conditions in Inner Mongolia.

The proposed tactics included amplifying negative commentary, using fake email accounts posing as foreign residents, labeling her a far-right figure, and stoking public anger over U.S. tariffs to shift attention away from China-Japan tensions. OpenAI's model refused to assist with this plan.

The refusal did not stop the operation. The same user returned days later to polish a status report documenting the campaign's real-world implementation, indicating that the campaign had gone ahead without the help of OpenAI's platform.

An open-source investigation by OpenAI's team confirmed the campaign's footprint, finding the specific hashtag referenced in the threat actor's report spreading across X, Pixiv, and Blogspot, alongside AI-generated memes falsely associating Takaichi with nationalist groups.


The targets were not limited to people inside China. They also included foreign dissidents, human rights groups, and foreign government officials, some at the highest levels of government.

Inside China’s “Cyber Special Operations”

Beyond the Japan-focused campaign, the threat actor’s ChatGPT sessions revealed a sweeping playbook of over 100 distinct tactics designed to target, pressure, and silence dissidents worldwide.

The operations used locally deployed AI models such as DeepSeek-R1, Qwen2.5, and YOLOv8 for monitoring, profiling, and content creation, while using ChatGPT to refine documents and operational reports.

Prominent targets included Chinese activist Li Ying (known as “Teacher Li is not your teacher”), the human rights group Safeguard Defenders, and dissident Jie Lijian, against whom the operation allegedly fabricated an obituary and spread fake gravestone images to cause psychological distress.

In one case, the threat actor described forging documents from a U.S. county court and presenting them to a social media platform in an attempt to trigger the removal of a dissident’s account.

These activities were linked directly to the China-linked "Spamouflage" campaign, which Meta publicly attributed to Chinese law enforcement in 2023. OpenAI further connected the operation to the doxxing website revealscum.com, which the company had first exposed as part of the Spamouflage network in May 2024.


Organizations and individuals concerned about AI-enabled influence operations should take the following precautions.

Social media platforms are advised to strengthen their detection of coordinated inauthentic behavior, particularly accounts that mass-report users with AI-fabricated evidence.

Public figures, activists, and government officials should remain vigilant about unsolicited outreach from unverified consulting firms or fake legal entities.

Governments should continue sharing threat intelligence about foreign state-linked covert operations and warn civil society groups about the real-world risks of online harassment.

AI providers should maintain strict content policies and continue publishing detailed threat reports to ensure industry-wide awareness of how their platforms are being abused.
