Security researchers at Truffle Security discovered that legacy public-facing Google API keys can silently gain unauthorized access to Google’s sensitive Gemini AI endpoints.

This flaw exposes private files, cached data, and billable AI usage to attackers without any warning or notification to developers.

The vulnerability highlights the severe danger of retrofitting modern AI capabilities onto older cloud security architectures.

Leak Sensitive Data

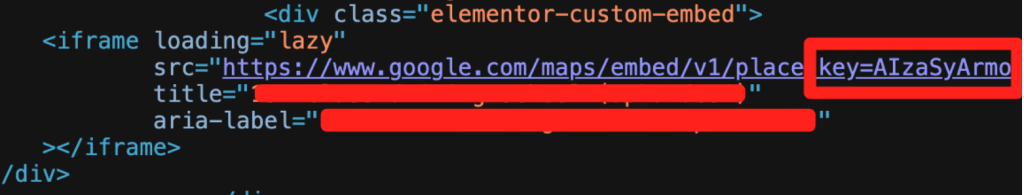

For over a decade, Google explicitly instructed developers to embed API keys directly into client-side code for public services like Google Maps.

These keys were designed primarily as project identifiers for billing and were considered completely safe to share publicly.

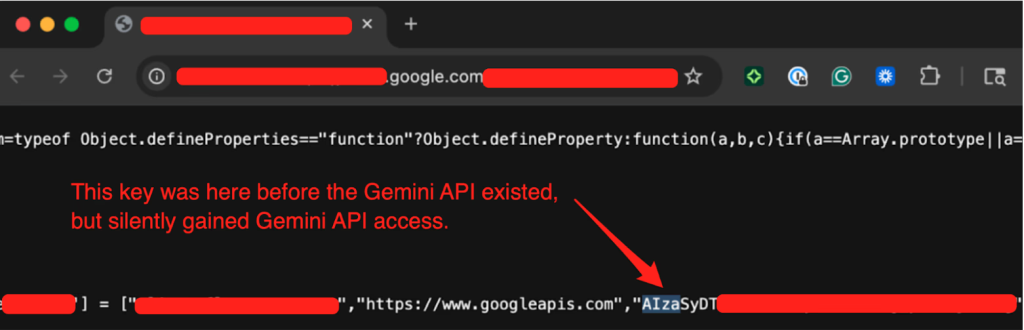

However, when a developer enables the Generative Language API on an existing project, any previously deployed public keys are silently upgraded into highly sensitive credentials.

This issue stems from insecure defaults and improper privilege assignment within the Google Cloud Platform.

Researchers at Truffle Security said that a newly created API key defaults to “unrestricted,” making it automatically valid for every enabled API within that project.

Because Google relies on a single key format for both public identification and authentication, nothing separates publishable keys from secret ones.

Exploiting this privilege escalation flaw is remarkably simple for malicious actors who scrape public code repositories.

An attacker merely needs to copy an exposed key from a public website and send a direct request to the Gemini platform to gain authorized access.

From there, they can steal private datasets, view cached project contexts, and execute expensive AI queries that could rack up thousands of dollars in usage fees.
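
Defenders can run the same kind of request against keys they own to see whether a key is accepted by Gemini. The sketch below is a minimal illustration, not Truffle Security's tooling: it assumes the Generative Language API's `v1beta/models` listing route and the `key` query parameter, the helper names (`build_probe_url`, `classify_status`, `probe_key`) are hypothetical, and the status-code interpretation is an assumption rather than documented behavior.

```python
import urllib.error
import urllib.request
from urllib.parse import urlencode

# Assumed endpoint: the Generative Language API's model listing route.
GEMINI_MODELS_URL = "https://generativelanguage.googleapis.com/v1beta/models"


def build_probe_url(api_key: str) -> str:
    """Return the model-listing URL with the key passed as a query parameter."""
    return f"{GEMINI_MODELS_URL}?{urlencode({'key': api_key})}"


def classify_status(status: int) -> str:
    """Map an HTTP status to a rough verdict (this mapping is an assumption)."""
    if status == 200:
        return "exposed"       # the key was accepted by the Gemini endpoint
    if status in (400, 401, 403):
        return "rejected"      # key invalid, restricted, or API not enabled
    return "inconclusive"      # rate limits, outages, anything else


def probe_key(api_key: str, timeout: float = 10.0) -> str:
    """Send one GET request and classify the response. Only test keys you own."""
    try:
        with urllib.request.urlopen(build_probe_url(api_key), timeout=timeout) as resp:
            return classify_status(resp.status)
    except urllib.error.HTTPError as err:
        return classify_status(err.code)
    except urllib.error.URLError:
        return "inconclusive"
```

A result of `"exposed"` for a key that also appears in client-side code suggests the key should be restricted or rotated.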

Privilege Escalation Impact

The transition from legacy identifiers to sensitive credentials creates a massive attack surface spanning thousands of vulnerable websites.

The table below highlights the critical differences between how these keys were originally designed and how they function when Gemini is enabled.

| Feature | Legacy Public API Keys | Upgraded Gemini Keys |
|---|---|---|
| Primary Purpose | Public project identification and billing. | Sensitive authentication and data access. |
| Deployment Location | Client-side HTML and JavaScript. | Secure backend environments. |
| Exposure Risk | Low risk; harmless if public. | High risk; exposes private AI data. |
| Default Configuration | Unrestricted; valid for every enabled API in the project. | Unrestricted, requiring manual scoping. |

Google is addressing this crisis by defaulting new keys in AI Studio to Gemini-only access and proactively blocking known leaked credentials.

Organizations must immediately check all projects for the Generative Language API and audit their dashboard for unrestricted keys.
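
The key audit can be partly automated. The sketch below assumes a JSON export of a project's keys shaped like the API Keys API `Key` resource, with an optional `restrictions.apiTargets` list (for example, the output of `gcloud services api-keys list --format=json`); the field names and the function name `gemini_capable_keys` are assumptions for illustration and should be checked against an actual export.

```python
from typing import Any

GEMINI_SERVICE = "generativelanguage.googleapis.com"


def gemini_capable_keys(keys: list[dict[str, Any]]) -> list[str]:
    """Return display names of keys that could be used to call Gemini.

    A key is flagged when it has no API restrictions at all, or when its
    API restrictions explicitly include the Generative Language API.
    """
    flagged = []
    for key in keys:
        restrictions = key.get("restrictions") or {}
        targets = restrictions.get("apiTargets") or []
        services = {target.get("service") for target in targets}
        # No API targets: the key is valid for every API enabled in the project,
        # even if it carries referrer or IP restrictions.
        if not targets or GEMINI_SERVICE in services:
            flagged.append(key.get("displayName") or key.get("name", "<unnamed>"))
    return flagged
```

Any flagged key that is also embedded in a website or mobile app is a candidate for restriction or rotation, provided the Generative Language API is enabled on that project.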

Security teams should also rotate any public-facing keys that have Gemini access and run code scanners to detect exposed credentials.
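
As a rough illustration of what such a scanner looks for, the sketch below searches text for strings shaped like Google API keys. It assumes the widely used community pattern of `AIza` followed by 35 URL-safe characters; that pattern is a heuristic rather than an official specification, and the function name `find_google_keys` is hypothetical. A dedicated secret scanner will cover far more cases.

```python
import re

# Heuristic pattern: "AIza" plus 35 characters from [0-9A-Za-z_-],
# not embedded inside a longer run of the same characters.
GOOGLE_KEY_RE = re.compile(
    r"(?<![0-9A-Za-z_\-])AIza[0-9A-Za-z_\-]{35}(?![0-9A-Za-z_\-])"
)


def find_google_keys(text: str) -> list[str]:
    """Return the unique key-shaped strings found in text, sorted."""
    return sorted(set(GOOGLE_KEY_RE.findall(text)))
```

Running `find_google_keys` over checked-in HTML, JavaScript, and configuration files gives a first list of candidates to compare against the project's key inventory.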
