An open-source security scanner, developed by Git Hub user Adam Swanda, was released to explore the security of the LLM model. This model is utilized by chat assistants such as ChatGPT.

This scanner, which is called ‘Vigil’, is specifically designed to analyze the LLM model and assess its security vulnerabilities. By using Vigil, developers can ensure that their chat assistants are safe and secure for use by the public.

As the name suggests, a large language model can understand and create any language. LLMs learn these skills by using huge amounts of data to learn billions of factors during training and by using a lot of computing power while they are studying and running.

Vigil Tool

If you want to ensure the safety and security of your system, Vigil is a useful tool for you. Vigil is a Python module and REST API that can help you identify prompt injections, jailbreaks, and other potential threats by evaluating Large Language Model prompts and responses against various scanners.

The repository also includes datasets and detection signatures, making it easy for you to begin self-hosting. With Vigil, you can rest assured that your system is secure and protected.

Currently, this application is in alpha testing and should be considered experimental.

StorageGuard scans, detects, and fixes security misconfigurations and vulnerabilities across hundreds of storage and backup devices.

ADVANTAGES:

- Examine LLM prompts for frequently used injections and inputs that present risk.

- Use Vigil as a Python library or REST API

- Scanners are modular and easily extensible

- Evaluate detections and pipelines with Vigil-Eval (coming soon)

- Available scan modules

- Supports local embeddings and/or OpenAI

- Signatures and embeddings for common attacks

- Custom detections via YARA signatures

To protect against known attacks, one effective approach is using a Vigil to prompt injection technique. This method involves detecting known techniques used by attackers, thereby strengthening your defense against the more common or documented attacks.

Prompt Injection When an attacker generates inputs to manipulate a large language model (LLM), the LLM becomes vulnerable and unknowingly carries out the attacker’s aims.

This can be done directly by “jailbreaking” the prompt on the system or indirectly by manipulating external inputs, which may result in social engineering, data exfiltration, and other problems.

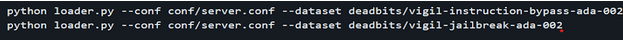

If you want to load the vigil by appropriate datasets for embedding the model with the loader.py utility.

The set scanners examine the submitted prompts; every one can help with the ultimate identification. Scanners are:

- Vector database

- YARA / heuristics

- Transformer model

- Prompt-response similarity

- Canary Tokens

Experience how StorageGuard eliminates the security blind spots in your storage systems by trying a 14-day free trial.