AI didn’t sneak into our lives. It burst through the door, took a seat at the table, and started finishing our sentences.

Instead of a helpful list of links, Google now tries to answer your question. Microsoft’s Copilot drafts replies to your boss before you’ve had coffee. Your phone summarizes conversations you don’t even remember having.

Every major tech company is racing to add AI to its products because no one wants to be left behind. And the public is often forced to accommodate such corporate whims because of the increasing effects of “enshittification,” as explained by Cory Doctorow on the Lock and Code podcast.

People are using AI. But they don’t trust it.

In our latest privacy pulse survey, in which we gathered more than 1,200 responses from readers of the Malwarebytes newsletter earlier this year, 90% of respondents said they’re worried about AI using their data without consent.

Ninety per cent.

That’s not a few skeptics. That’s nearly everyone we asked. We admit, our sample is probably skewed towards the privacy conscious. But 90% of people who follow Malwarebytes are worried about how much personal data AI is slurping up, and what it’s going to do with it, so that’s a good barometer for how much everyone should care.

That concern is changing the way people are using the internet:

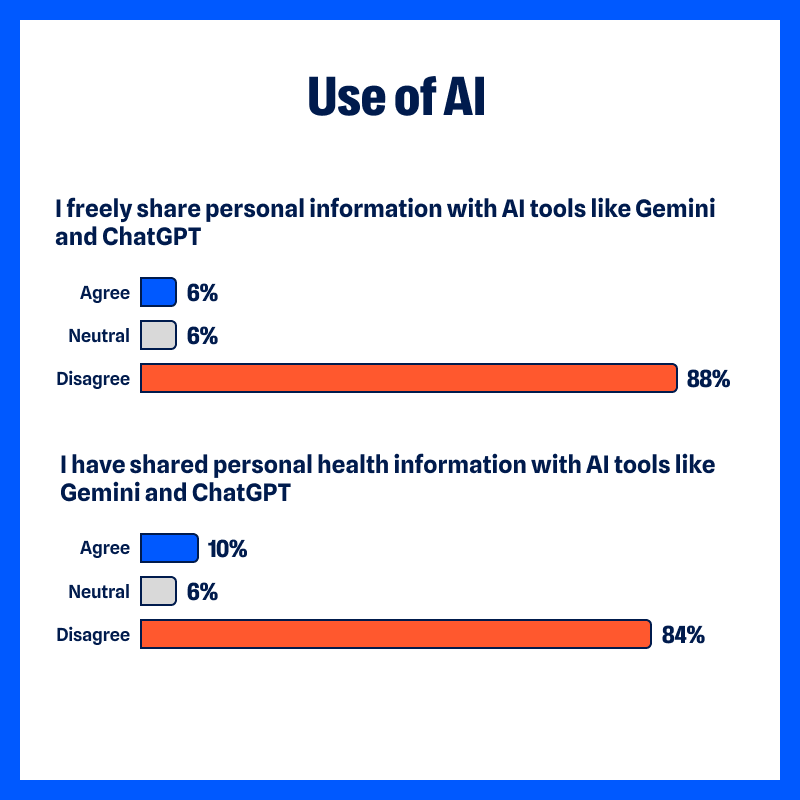

- 88% do not “freely share personal information with AI tools like ChatGPT and Gemini”

- 84% have not “shared personal health information with AI tools”

- 43% have “stopped using ChatGPT”

- 42% have “stopped using Gemini”

This distrust didn’t start with AI

Of course, AI gets all the headlines. We write about many of them.

But people have been concerned about holding onto their personal information for a long time.

From the survey:

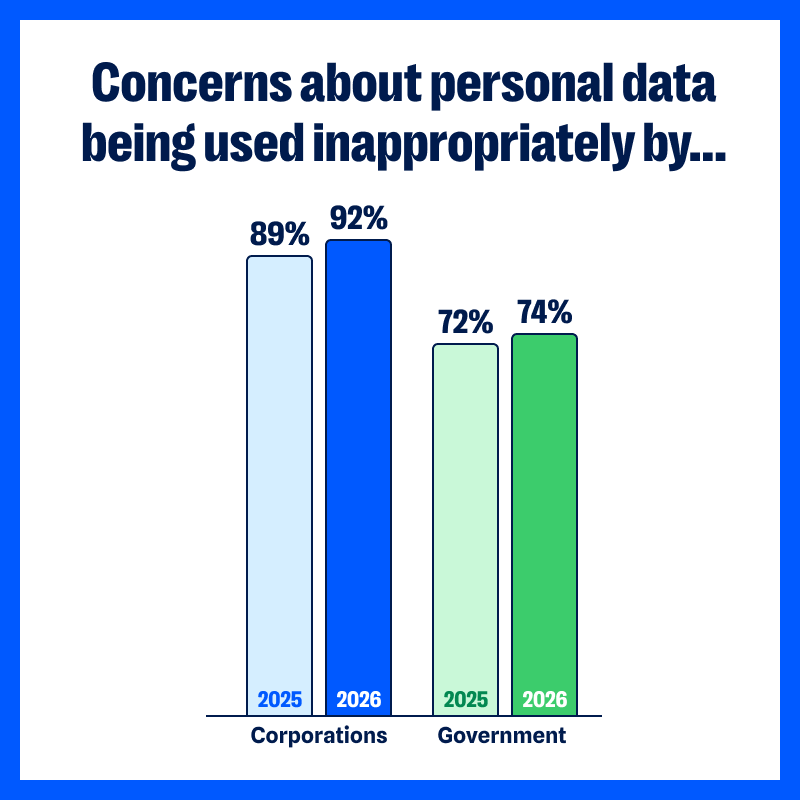

- 92% are concerned about their “personal data being used inappropriately by corporations,” which is up slightly from last year (89% in 2025)

- 74% are concerned about their “personal data being accessed and used inappropriately by the government” (up from 72%)

Years of data breaches, shady tracking practices, and dangerous misuse by data brokers have chipped away at our confidence in organizations to protect our data. Over the past year, healthcare organizations have continued to report major security lapses affecting sensitive patient data. The FTC warned about “staggering” commercial surveillance practices that most consumers never agreed to, and, according to our survey, 49% of people reported that their personal info has been used in scams that target them or their family.

When people use social media, they generally understand their clicks and likes are being tracked. When they shop online, they expect the shop to store their purchase histories or track the items they were interested in. They understand the concept of advertising and see how it slots into social or commercial websites.

AI tools are different because we use them differently.

When we share ideas, client meeting notes, personal dilemmas, and health questions with an AI assistant, we are treating it as a confidant. Maybe we’ve paid for an access level that promises not to train its models on our data. Even when we’re chatting about flat-packs and missing screws with a site’s AI chatbot, we behave as if we’re talking to another person, and not broadcasting that conversation to the world.

The interaction with AI feels intimate and conversational, even though we’re all aware we’re talking with a bot. That makes the uncertainty around how that AI handles the data we’ve fed it more personal, more immediate.

We know that AI assistants from a company are often plugged into other tools. We know GPTs can be created by any developer or scammer. (Check out Malwarebytes in ChatGPT—we’re one of the good guys). We know nearly every business or personal platform now has some form of AI-based data-gathering element. What the average person doesn’t know about AI feels scary.

- Where are our prompts stored?

- Are those prompts used to train the AI?

- How long are they kept?

- Can anyone inside the company read them?

- Can they be bought? Used for advertising? Leaked?…

Yes, companies publish policies, but who in the real and busy world reads all of them before using a tool? Fewer than half, though the number is growing: 48% said they now read privacy policies and reports, up from 43% in 2025.

Besides, we know from recent headlines that companies are rushing out AI features before they’ve had time to properly security-check them.

A glimmer of hope: People are taking action

This result from the survey caught our eye.

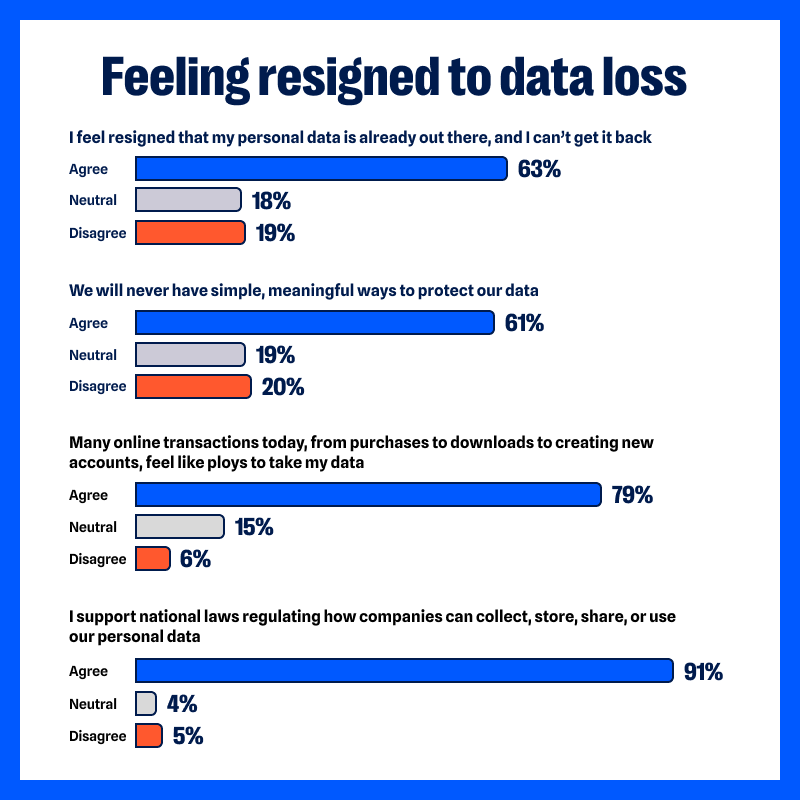

63% of respondents agreed with the statement: “I feel resigned that my personal data is already out there, and I can’t get it back.”

Last year, that number was 74%.

So, while concern about data misuse is still high, fewer people feel entirely helpless.

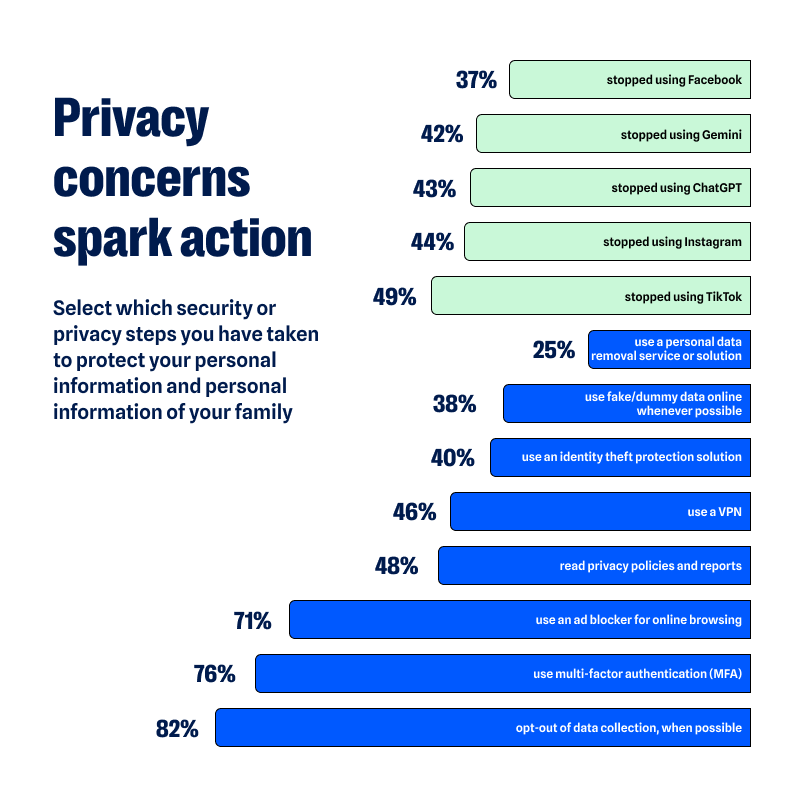

Respondents reported taking practical steps to limit their data exposure.

Some have reduced or stopped their use of certain platforms entirely because of privacy concerns, including social media (44% have stopped using Instagram, 37% have stopped using Facebook, and 49% have stopped using TikTok) and AI tools (43% have stopped using ChatGPT, 42% have stopped using Gemini).

Others reported sharing less personal information online or avoiding sensitive topics in digital conversations (88% said they do not freely share personal information with AI tools).

Respondents have also increased their use of most privacy-protective tools for their data, devices, and identities.

- 46% use a VPN (up from 42% in 2025)

- 40% have an identity theft protection solution (down from 43%)

- 25% use a personal data removal service or solution (up from 23%)

- 71% use an ad blocker for online browsing (up from 69%)

- 48% read privacy policies and reports (up from 43%)

- 76% use MFA (up from 69%)

- 82% opt out of data collection where possible (up from 75%)

- 38% use fake/dummy data online whenever possible (up from 33%)

None of these actions erase historical data trails, but they do limit new exposure. David Ruiz, senior privacy advocate at Malwarebytes, said:

“Twenty years of online innovation have pointed too many companies in the same direction—against everyday people.

For most people today, the corporations that are pressing AI tools into their daily lives are the same corporations that have monetized their attention spans, invaded their privacy, and lost their data to breaches. But a counterforce is emerging.

The small changes in user behavior should encourage others to understand that, even now, privacy remains possible and worthwhile.”

Privacy protection can feel binary: either everything is exposed or everything is secure. But it’s incremental, and the survey responses reflect how people are starting to take back control of their data.

What this means for companies

Organizations adding AI into their products face a more complex audience than they might have first assumed.

For years, product teams have assumed users would trade more data for more convenience. But when nine in ten people say they’re concerned about AI using their data without consent, trust becomes part of the product itself. Mozilla jumped on this and added a simple “turn off AI” button to Firefox.

It’s no longer enough to highlight what AI can do. Users want to understand what happens after they press “submit.”

We the People… want strong privacy laws

When concern reaches the sort of level we’ve seen in our survey, it inevitably raises the thorny question of regulation.

91% of respondents said they “support national laws regulating how companies can collect, store, share, or use our personal data.”

The issue is less about one tool and more about a sense that the guardrails are unclear. Generative AI systems can draft legal documents, write emails, and process sensitive data at speed. Many of the existing privacy frameworks in the US, EU, and other regions were written before AI was commonplace.

Regulators are trying to catch up. The European Union’s AI Act, passed in 2024, introduced a risk-based approach to governing certain AI systems. In the US, federal agencies including the FTC have issued guidance and warnings around commercial surveillance and automated decision-making, but the country does not yet have a comprehensive AI-specific privacy statute.

Desire for national laws and regulation is at an all-time high. Consumers want boundaries that are understandable and enforceable.

What you can do

We’re clearly not going to abandon all technology. AI isn’t going to eat itself out of existence. It can be pretty useful. We use AI to find threats and scams no one’s seen before, which leads to far better protection. We also use generative AI in Scam Guard to provide 24/7 chat assistance (paired with our deep threat research expertise, of course). Many people use AI tools to save time, draft documents, or explore ideas. Also, sadly, to create little caricatures of themselves.

The key here is thoughtful use.

- Limit what information you give to public AI tools, especially health details, financial data, and client-sensitive information.

- Review the privacy and data retention policies of AI tools you use regularly.

- Delete accounts and apps you no longer need.

- Audit app permissions at least twice a year.

- Use a VPN to reduce tracking by your internet service provider.

- Remove your information from major data broker sites. Check whether your personal info is exposed with a Digital Footprint scan.

- Use a reputable password manager and avoid reusing passwords across services.

At Malwarebytes, we believe privacy is a human right. Protecting personal data is inseparable from protecting personal security. The more information that circulates without oversight, the greater the opportunity for misuse, fraud, and harm.

AI will continue to develop. That trajectory is unlikely to slow. The question is whether trust will grow alongside it.

Survey information

Malwarebytes conducted a pulse survey of its newsletter readers between January 26 and February 3, 2026, via the Alchemer Survey platform.

In total, 1,235 people responded from 72 countries, with most respondents from the US, UK, Canada and Australia.