As AI tools evolve from siloed chatbots to autonomous, hyperconnected systems, they create a vast new attack surface. Discover how to manage this risk by focusing on visibility, agency, and semantic security to protect your organization’s increasingly complex landscape of agentic AI systems.

Key takeaways

- Organizations have moved from siloed AI chatbots to autonomous, hyperconnected agents that can execute actions and access sensitive internal data stores, exponentially increasing cyber risk.

- A major security challenge arises because AI agents are often granted capabilities that far exceed their intended goals, creating an unnecessarily large and dangerous blast radius.

- Securing agentic AI requires moving beyond reactive breach detection to a proactive strategy grounded on exposure management and focused on total visibility, posture adjustment, and monitoring of semantic attack vectors.

Here’s a common occurrence in organizations these days: A team – finance, human resources, marketing – sets up an AI agent to perform a seemingly simple action, such as getting task details and emailing them out to the appropriate recipients.

But is this AI agent as harmless as it looks? A deeper look reveals a big potential for agentic AI security risks: This seemingly innocuous AI agent has been granted the power to connect to a sensitive data store and exfiltrate that data. Is it secure? Has it been properly configured?

Now multiply this scenario by a thousand – or more. The process of spinning up autonomous AI agents is becoming routine, as AI vendors make it increasingly easy to configure them. Thus, eager to automate processes and boost productivity, a typical organization today may have thousands of AI agents operating autonomously.

For cybersecurity teams, this prevalence of AI agents represents a major new challenge. How do you secure thousands of AI agents that can act on their own and that are hyperconnected to each other and to myriad internal and external systems and data stores?

In this blog post, we’ll delve into this risky scenario and outline the right approach to protect this new attack surface created by this fast proliferation of AI agents.

The agentic AI security challenge is three-fold

The first wave of AI tools that organizations started adopting back in 2022 were mostly siloed chatbots that operated with little integration to other enterprise systems. Thus, they had limited or no access to internal databases and they couldn’t execute actions on internal systems.

What a difference a few years make. Fast-forward to today and organizations are awash in agentic AI tools, whose potential for cyber risk is exponentially higher due to three core elements: hyperconnectivity, agency, and semantics.

Let’s take a closer look at how each one of these characteristics of AI tools helps create a broad attack surface that cybersecurity teams must secure.

Hyperconnectivity

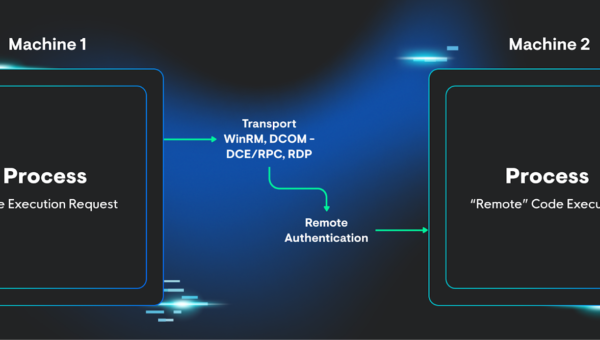

You need to look at your organization’s landscape of autonomous AI tools and see it not as a collection of individual assets, but rather as a multi-agent system constellation, made up of many interwoven components.

Some of those components are internal, while others are external. Some were built in house, while others came from third-parties. Some live in the cloud, others on endpoints, and yet others on AI platforms.

Cybersecurity teams must not only detect all of those AI components, wherever they are, but also understand how they connect to each other and how they interact.

For example, an organization may have a Copilot Studio AI agent designed to summarize information fetched from the internet. But in this hypothetical setup, that agent also interacts with another agent, built on AWS Bedrock, that runs some of your critical processes in the cloud.

What if the Copilot Studio agent retrieves an indirect prompt injection from the internet, which in turn opens the door for an attacker to tamper with the critical cloud operations the AWS Bedrock agent has access to? Suddenly, what appeared as a straightforward agentic AI setup has revealed itself as a toxic combination with potentially catastrophic consequences.

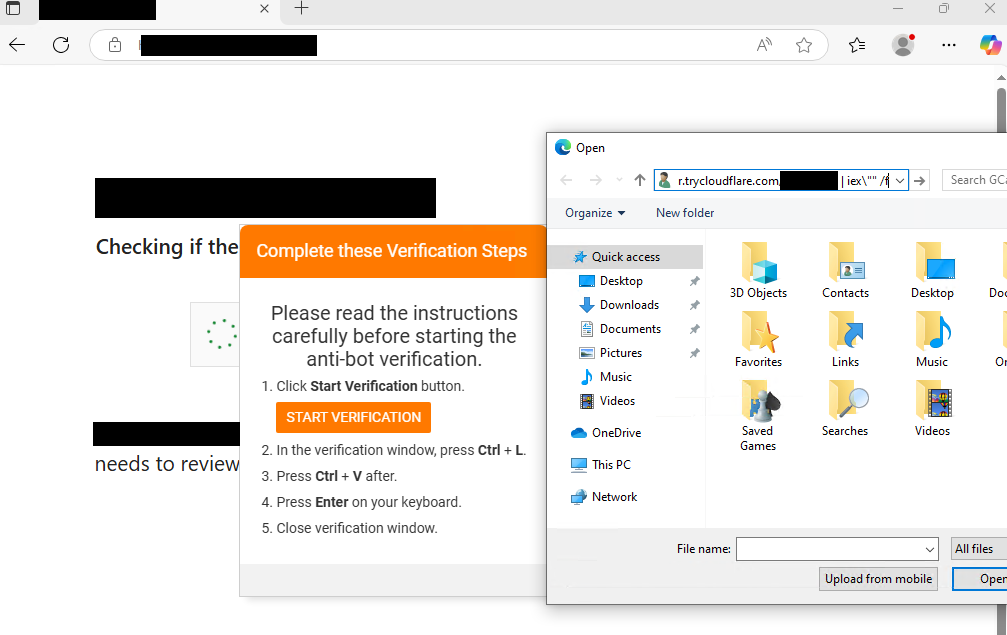

Adding to the challenge, AI tools’ configuration plane is getting increasingly complex. This means that cybersecurity teams can’t be content with simply reviewing and approving AI products like Anysphere’s Cursor, Anthropic’s Claude Code, or Microsoft’s Copilot in a vacuum.

Once an organization greenlights an AI tool for use, the IT department will start configuring them in a variety of ways and integrating them with internal systems, creating the risk for misconfigurations and insecure connections. Ask yourself: Where is the code written by an AI tool getting sent to?

Moreover, do you know that tools like Claude Code and Cursor can be configured to operate in “agent mode,” which allows them to execute commands on endpoints, effectively behaving as full-blown autonomous agents?

Finally, the context of these AI tools changes quickly and constantly, as they ingest a growing amount and diversity of inputs that can make them vulnerable to malicious tampering.

Agency

Here’s a hard truth: We’re building systems that can act autonomously, but we’re securing them as if they can’t. And this is a problem because humans are rarely in the loop anymore when it comes to AI. On top of that, AI systems are not deterministic systems, like conventional IT ware, but rather probabilistic, meaning that their outputs are hard to anticipate. This is a tectonic shift for cybersecurity teams.

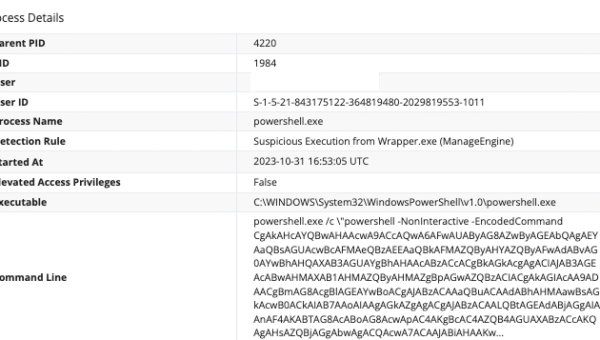

Chances are that the next major AI cybersecurity incident you have to deal with will not be caused by a breach, but rather by an action one of your AI systems was allowed to perform.

Let’s look at this in more detail. There are three main components to AI agency:

- Goal: An AI agent is created to summarize news, emails or tasks.

- Capabilities: Often, the AI agent is able to perform certain actions that exceed the scope needed to accomplish the intended goal.

- Blast radius: This is everything that can go wrong if the AI agent’s capabilities are compromised.

The problem is that quite often an AI agent’s capabilities – the tasks it can perform – exceed the goals for which it was created. In other words, most AI agents possess too much agency. This disparity between capabilities and goals creates an oversized level of cyber risk.

Compounding the problem is the reality that organizations’ tendencies are to trust AI, meaning that the human oversight over these systems is usually minimal.

Semantics

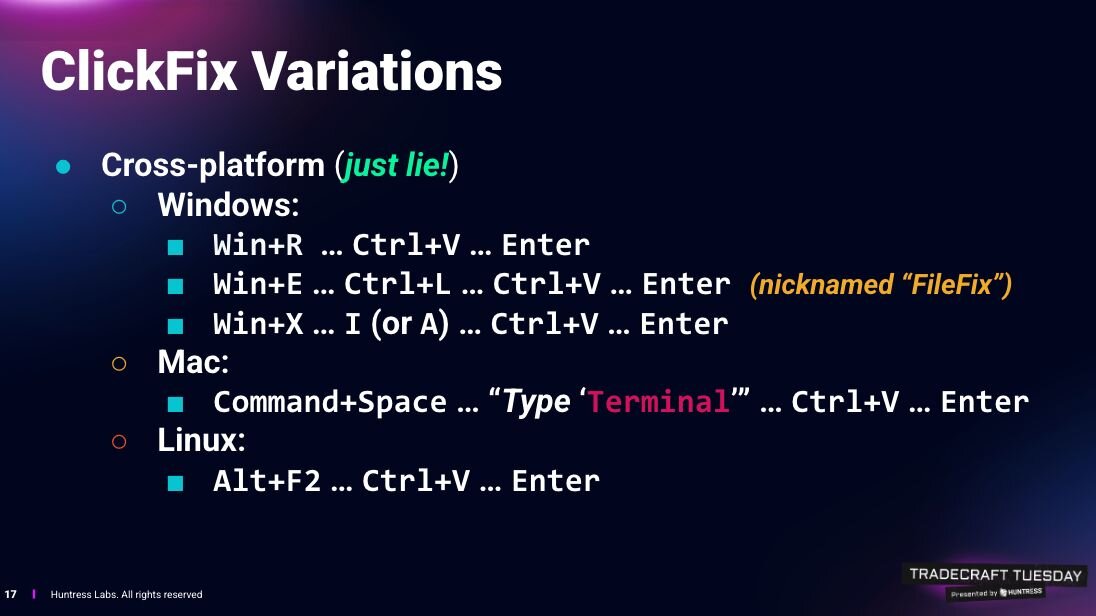

While traditionally in cybersecurity we rely on exact matches to assess that a system hasn’t been tampered with, this approach doesn’t work with AI systems, which operate on meaning, not strict syntax.

That’s why it’s easy for an attacker to bypass traditional guardrails by modifying the input the AI tool processes using synonyms, typos, paraphrases and other such language tricks. It’s extremely hard to monitor this vast and ever-expanding semantic attack vector and prevent model poisoning, output manipulation and prompt injection attacks.

In other words, attackers don’t need to break the security controls anymore. They just need to go around them using semantics tactics.

How do you secure agentic AI?

Historically, cybersecurity strategies have been focused on reactive breach detection and response. However, to protect your AI agents, it’s critical to shift to a preventive, proactive approach grounded on exposure management. That way, you’ll be able to understand AI agents’ dynamic systems and what they can do in runtime, as well as fix the misconfigurations and gaps before attackers exploit them.

To achieve effective agentic AI governance and security, you need to ground your exposure management strategy on three foundational concepts:

Visibility

You must inventory all the AI agents in your environment, as well as their individual components, wherever they are – on endpoints, in the cloud, in AI platforms and on-premises.

But you must go deeper. You need to also know the following about each agent:

- The data it was trained on, the knowledge base it feeds off of and the data stores it’s connected to.

- The capabilities it possesses, such as the actions it can take and the agentic protocols that govern it.

- The connections it has to other systems both internally in your organization and externally; to other AI agents; and to AI and non-AI user identities.

- Its intent, such as its goals, along with its blast radius

Posture

You must understand what an AI agent can do as part of a broader dynamic system, as well as how its capabilities exceed its goals, so that you can adjust what it’s allowed to do without impacting its efficacy.

Let’s say you have multiple AI agents that are integrated and interact with each other, and these agents can, for example, connect to the internet and to your most sensitive cloud environment. It’s imperative to understand what this dynamic multi-agent system can do in the real world, in order to spot any potential toxic combinations within its components.

With these insights, you can pinpoint remediations to the security gaps created by the AI toxic combination.

Threat detection

Once you understand what this dynamic multi-agent cluster can do, you need to be able to spot trouble signals in your runtime environment for ongoing exposure reduction.

Conclusion

AI security needs to be everywhere in your environment, and the way to make AI security pervasive is by adopting an exposure management platform and strategy. .

In a nutshell, exposure management empowers cybersecurity teams to discover every asset across your environment – not just AI systems, but also IT, cloud, identity, and OT wares. From there, it helps you assess, prioritize and orchestrate remediation across the entire AI attack surface, giving you control over this new landscape of AI agents populating your environment.

To learn about how Tenable can help you boost your AI security, visit the Tenable One AI Exposure page.