- The Real-World AI Legal Risks Organizations Aren’t Talking About

- Cyber Risk Strategy: Where Legal Strategy Meets Business Reality

- When Privacy and Cybersecurity Become Business Enablers

- Changing Barriers for Women in Cybersecurity and Tech Law

- Career Advice That Shaped Her Journey

- Managing AI Legal Risks Requires Governance and Awareness

Artificial intelligence (AI) tools are rapidly finding their way into everyday business operations, from drafting emails and analyzing documents to supporting investigations and compliance work. But while companies are eager to adopt these tools, many are underestimating the AI legal risks that come with them.

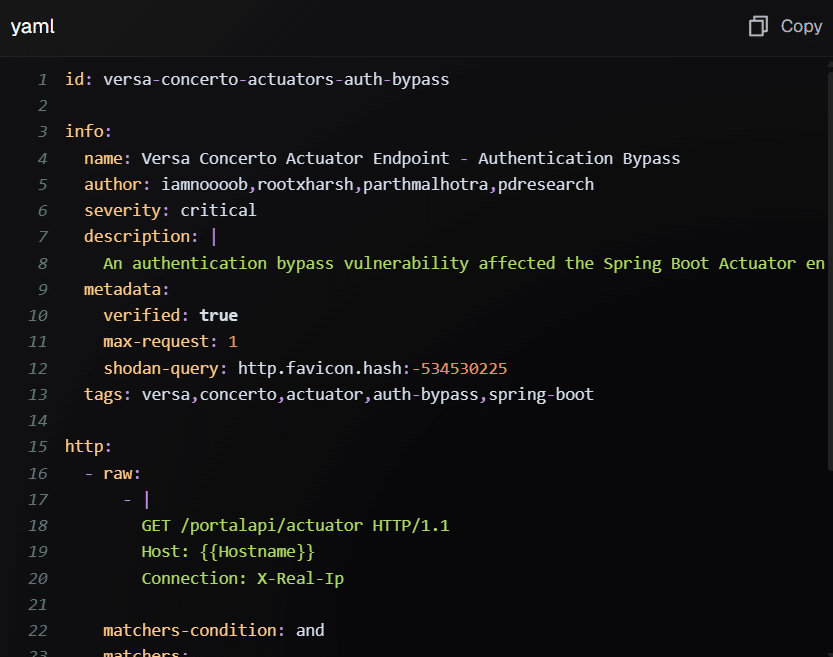

A simple interaction with a public AI tool can unintentionally expose confidential data, cross international data borders, or create legal liabilities organizations have not yet fully considered. As a result, lawyers and cybersecurity professionals are increasingly confronting new questions around governance, liability, and responsible AI use.

In this interview with The Cyber Express, Lisa Fitzgerald, Partner at Norton Rose Fulbright, discusses the AI legal risks organizations are already facing, why identifying the right AI use cases is critical, and how companies can safely adopt emerging technologies without exposing themselves to data breaches or litigation.

Drawing on her experience advising organizations on cyber incidents and data protection challenges, Fitzgerald highlights the legal blind spots businesses should pay attention to as AI adoption accelerates.

The Real-World AI Legal Risks Organizations Aren’t Talking About

One of the most immediate challenges organizations face when adopting artificial intelligence is not necessarily the technology itself but identifying appropriate and safe use cases. According to Fitzgerald, this is where many companies underestimate AI legal risks.

“Identifying suitable use cases is a real-world challenge already faced by organisations seeking to adopt and deploy AI more broadly. This is the ‘cost-benefit’ question all organisations need to be asking.”

Fitzgerald points to seemingly harmless applications of AI tools as examples of how risks can quickly escalate.

“An example is using generative AI to help your email sound ‘less passive aggressive’. Seems fairly benign. Add a public AI tool with confidential content or someone else’s personal information, and a benign use case can quickly turn into a global data breach.”

She explains that once information is entered into public AI platforms, organizations may lose control of where that data travels.

“The data may have transferred borders, possibly on terms that entitle the data to be used by the vendor for training and seen by humans involved in quality-control processes. I have advised numerous clients on inadvertent data breach scenarios, and it’s a costly, preventable fall-out.”

Beyond privacy concerns, Fitzgerald warns that organizations may also face legal exposure through other channels.

“The full legal repercussions of certain use cases are also yet to be felt. Your rewritten email, free of passive-aggressive tone, could potentially increase litigation risk as a defamatory publication, intellectual property rights infringement or give rise to a claim in the tort of negligence or serious invasion of privacy. These are areas to watch both from the perspective of regulation and jurisprudence.”

To mitigate such AI legal risks, she highlights two practical steps organizations are already adopting.

“Two mitigation controls are helping organisations forge ahead with safe AI adoption. The first is raising staff awareness through training, and the second is vetting use cases efficiently.”

Fitzgerald explains that organizations are increasingly implementing structured review frameworks before allowing AI tools to be used internally.

“Vetting of AI uses is similar in form to Privacy Impact Assessments or PIAs. I call them Use Case Assessments or UCAs. UCAs can be a very simple, automated process, using a questionnaire that captures a basic description of the outcome seeking to be achieved and then the information needed to achieve it.”

She notes that such frameworks often go beyond traditional privacy reviews.

“Precisely because an organisation’s digital assets comprise more than just personal information, PIAs cannot be the only legal screening tool. UCAs that cover personal information, confidential information, privileged information, proprietary information, or another digital asset important to your operations are becoming essential.”
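As a rough illustration of the questionnaire idea described above, the sketch below models a Use Case Assessment as a small Python record: a description of the outcome sought, plus the categories of information needed to achieve it. The class and field names, the `public_tool` flag, and the three triage outcomes are all hypothetical assumptions made for this example. They are not Fitzgerald's framework or any organization's actual process, and the triage rule is a placeholder, not legal advice.

```python
from dataclasses import dataclass, field
from enum import Enum


class AssetType(Enum):
    # Asset categories named in the interview; the enum itself is illustrative.
    PERSONAL = "personal information"
    CONFIDENTIAL = "confidential information"
    PRIVILEGED = "privileged information"
    PROPRIETARY = "proprietary information"
    OTHER = "other digital asset"


@dataclass
class UseCaseAssessment:
    outcome: str  # basic description of the outcome sought
    assets: set[AssetType] = field(default_factory=set)  # information needed
    public_tool: bool = False  # hypothetical flag: is the AI tool public?


def triage(uca: UseCaseAssessment) -> str:
    """Hypothetical screening rule, assumed for illustration only."""
    if not uca.assets:
        return "approve"       # no sensitive asset category involved
    if uca.public_tool:
        return "block"         # sensitive data headed to a public tool
    return "legal review"      # sensitive data, non-public tool


# The email example from the interview, expressed as a questionnaire response.
email_rewrite = UseCaseAssessment(
    outcome="Make an email sound less passive aggressive",
    assets={AssetType.CONFIDENTIAL, AssetType.PERSONAL},
    public_tool=True,
)
print(triage(email_rewrite))
```

In practice the questions and thresholds would be set by an organization's own legal and security teams; the point of the sketch is only that a short structured form can cover more than personal information.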

Cyber Risk Strategy: Where Legal Strategy Meets Business Reality

Organizations often struggle to align legal advice with operational decision-making, especially during cyber incidents or data breach investigations. However, Fitzgerald believes that the gap is narrowing.

“Given how important legal professional privilege is in protecting certain information from being weaponised, ‘business reality’ and ‘legal strategy’ around cyber risk and data protection have never been more aligned.”

She notes that legal privilege has become particularly important when organizations commission digital forensic investigations.

“The lack of legal privilege around certain information underlying key business decisions – such as a digital forensics report used to legally assess a data breach – has exposed organisations both to increased regulatory and punitive fines as well as orders to discover documents in legal proceedings to prove an organisation’s negligence, breach of contract or reduction in share price value.”

According to Fitzgerald, organizations that fail to properly structure legal advice during cyber incidents may face serious consequences.

“We could be moments away from ‘default judgments’ in the era of data and cyber breaches, where legal advice has not been taken or has been waived.”

She also shares a simple but effective framework used in Australia to guide responsible cyber risk decisions.

“An important element of legal strategy is something in Australia we call the ‘Herald Sun’ test. This is as simple as asking ‘could the decisions you’re making around cyber and data protection grab newspaper headlines?’ If so, is it a good news story or a bad one? Simple but effective.”

When Privacy and Cybersecurity Become Business Enablers

Many organizations still treat cybersecurity compliance as a regulatory obligation rather than a business advantage. Fitzgerald believes that perspective changes when leadership recognizes the value of digital assets.

“When leaders start viewing privacy and cyber compliance as business enablers, it is usually a sign of business maturity and an investment in the future success of the business.”

She emphasizes that organizations increasingly view data as a strategic resource.

“This is often because data assets have been recognised as the ‘crown jewels’ or the ‘refined oil’ of the organisation that could turn into either ‘stolen goods’ or ‘uranium’.”

When companies prioritize security and governance, they not only reduce AI legal risks but also strengthen investor confidence.

“When compliance directly relates to the preservation of the value of digital assets, an organisation’s roadmap to success becomes less pliable and more attractive to investors.”

Changing Barriers for Women in Cybersecurity and Tech Law

Reflecting on the progress highlighted during International Women’s Day, Fitzgerald believes one longstanding misconception about women in technology is slowly disappearing.

“One barrier that is disappearing is the perception that cybersecurity and technology laws are not interesting to women.”

She adds that curiosity about technology and its global impact cuts across gender lines.

“Women are equally curious as men about the technology on which we depend, the global revenue streams it supports, and the cyber warfare being waged between countries or by insiders. There is real purpose in the work we do, and that is something that everyone is understandably drawn to.”

Career Advice That Shaped Her Journey

Fitzgerald recalls a piece of advice from a conversation with Christine Lagarde that significantly influenced her career path.

“Christine Lagarde was once the Chair of a law firm. I recall having the opportunity of asking her a question: ‘As a woman in the law and one leading a global law firm, what has helped you succeed?’ She said ‘You must work hard, which means making sacrifices, but you must not sacrifice your health.’”

That message stayed with her throughout her career.

“Since then, ensuring I give myself time to exercise has been a routine that has helped shape my journey. It helps me to make clearer decisions about work, improves time spent with family, and ensures I’m fit mentally and physically to support clients during their most stressful times.”

Managing AI Legal Risks Requires Governance and Awareness

As organizations continue to integrate AI into everyday operations, the conversation around AI legal risks is becoming more urgent. What may appear to be small productivity gains, such as rewriting emails or automating internal tasks, can carry significant legal consequences if sensitive data or intellectual property is exposed.

Fitzgerald’s insights highlight an important reality: successful AI adoption is not just about deploying the right technology. It requires clear governance frameworks, legal oversight, and internal awareness to ensure innovation does not unintentionally create new risks.

For organizations navigating the fast-moving intersection of law, cybersecurity, and emerging technology, proactively identifying and managing AI legal risks will be essential to maintaining trust, protecting digital assets, and avoiding costly legal fallout.
