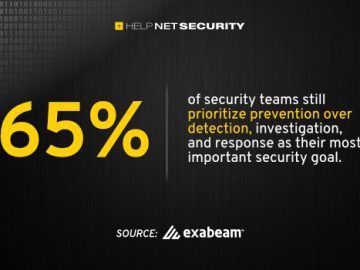

Vendors selling AI-powered security operations platforms have built their pitches around a consistent set of promises: autonomous threat investigation, dramatic reductions in analyst workload, and an accelerating path toward humanless operations. Practitioners buying and deploying those platforms describe something different.

A report by Anton Chuvakin, Security Advisor at Google Cloud’s Office of the CISO, and Oliver Rochford, co-founder of Aunoo AI, draws on more than 30 vendor briefings, public practitioner commentary from Reddit and Discord, and direct interviews with CISOs and detection engineers actively running AI SOC tools in production. The picture that emerges is one of shallow deployment, constrained use cases, and a vendor communications pattern that the authors argue systematically misattributes product limitations to buyer psychology.

Adoption is narrow and deliberate

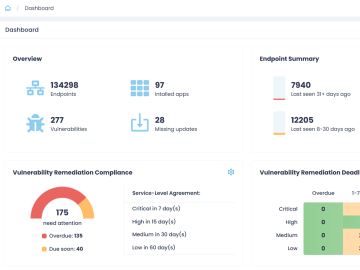

Gartner’s 2025 Hype Cycle for Security Operations places AI SOC agents at the Innovation Trigger stage with 1 to 5 percent market adoption. That figure is consistent with what the report’s authors found in their own conversations. Many organizations are waiting for AI capabilities to be integrated into existing SIEM, XDR, and SOAR platforms. Teams that have moved forward are deploying AI in constrained, lower-risk areas: alert enrichment, investigation summarization, report drafting, and workflow steps that do not require judgment calls.

A pattern the authors call “pilot purgatory” is common. A proof-of-value converts to a small production deployment, the AI handles enrichment and summarization, human analysts retain decision authority, and expansion into higher-stakes workflows does not follow.

Some mature teams are building their own solutions using general-purpose AI tools wrapped around internal tooling. Several detection engineering teams report that a well-prompted LLM with access to internal documentation outperforms vendor products that lack context about their specific environment.

AI SOC vendor claims don’t add up on metrics

Vendor adoption statistics frequently count feature activation or survey responses indicating organizations are “exploring” or “considering” AI SOC tools. Practitioners describe a different situation: features turned on for evaluation, then ignored or worked around when incidents occur.

Oliver Rochford draws a distinction between exposure and trust. “Analysts may see AI-generated summaries, suggested actions, or risk scores on every alert,” he said. “That does not mean they trust them.”

Anton Chuvakin argues that go-to-market metrics need sharper definitions. “Right now, ‘50% faster investigations’ could mean almost anything,” he said. “Faster than what baseline, under what conditions, and measured how? We expect case studies with falsifiable claims. Better would be something like ‘Mean-time-to-Verdict reduced to 7 minutes during account hijack triage in AWS environments’. It has to be specific enough so that a buyer can hold the vendor to it, or it’s just lip service.”

The prophecy pattern

The report draws on “When Prophecy Fails,” a social psychology study of how belief persists in the face of contradictory evidence. When AI SOC tools fall short of their marketed capabilities, the explanation vendors most frequently offer is that buyers are not AI-ready, that change resistance is the obstacle, or that trust needs to mature. The authors argue this pattern displaces accountability away from product immaturity and onto buyer psychology, which they describe as a structural failure of feedback integration between engineering reality and go-to-market messaging.

“AI SOC marketing is better understood as prophetic rather than technical,” the report states. “Most claims made today describe a future state, e.g. autonomous investigations, analyst replacement, agentic operations, rather than demonstrable, repeatable capabilities in production.”

What fails in production

Chase Theodos, a Threat Detection Engineer at C3 Integrated Solutions, identifies autonomous investigation and response workflows as the capability most frequently showcased in demos and least reliable under live conditions. “Demos rely on curated data, and workflows appear seamless during presentations, but can break down at scale or when data is incomplete or ambiguous,” he said. “In controlled environments, these products perform well when alerts are clean, telemetry is comprehensive, and the attack path is linear. In live SOC environments, many incidents do not meet those conditions.”

Theodos also raises concerns about specific automation categories that vendors promote as production-ready. Account disablement, host isolation, and policy changes carry risks that current AI systems are not equipped to manage reliably. “AI struggles to reliably distinguish malicious behavior from legitimate but unusual activity,” he said. Attribution and attacker intent classification, which vendors frequently demonstrate, are probabilistic outputs; when they are wrong, they can delay response and containment.

Alert reduction as a metric

Merlin Gillespie, CTO at Cybanetix, identifies alert reduction as the most commonly cited vendor metric and the one most likely to obscure operational risk. When AI sits between signal generation and human review without traceability, a drop in alert volume may reflect suppression of signals rather than improved detection fidelity.

“Until AI-driven conclusions can be evidenced, reproduced, or interrogated, a significant validation burden remains,” Gillespie said. “This work is not necessarily harder than traditional analysis, but it is qualitatively different and often underestimated.”

Public practitioner commentary collected from Reddit cybersecurity communities corroborates this concern. In one widely cited thread, a practitioner described running a large language model against 348 known false positives plus one synthetic true positive. The model hit 71 percent accuracy, called obvious false positives malicious, and missed the actual test incident entirely.
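The arithmetic behind that result shows why a headline accuracy figure says little on a heavily imbalanced alert set. The sketch below is a rough reconstruction, assuming the 71 percent figure was measured across all 349 alerts. The thread as summarized here does not give exact counts, so the derived numbers are approximate.

```python
# Figures reported in the thread: 348 known false positives (benign alerts)
# plus 1 synthetic true positive (the test incident).
benign_alerts = 348
malicious_alerts = 1
total_alerts = benign_alerts + malicious_alerts

# Assumption: the 71 percent accuracy was measured over all 349 alerts.
reported_accuracy = 0.71

# The model missed the one real incident, so every correct verdict
# must have been a benign alert that was correctly dismissed.
true_positives = 0
false_negatives = malicious_alerts
true_negatives = round(reported_accuracy * total_alerts)  # approximate count
false_positives = benign_alerts - true_negatives           # benign alerts called malicious

# Recall: share of real incidents the model caught.
recall = true_positives / (true_positives + false_negatives)

# Baseline for comparison: a rule that labels every alert benign.
baseline_accuracy = benign_alerts / total_alerts

print(f"benign alerts called malicious: ~{false_positives}")
print(f"recall on the real incident: {recall:.0%}")
print(f"accuracy of an 'always benign' rule: {baseline_accuracy:.1%}")
```

Under those assumptions, roughly 100 known false positives were escalated as malicious, recall on the real incident was zero, and a rule that dismissed every alert would have scored above 99 percent accuracy while missing the same incident.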

Analyst judgment under AI influence

Gillespie identifies a second risk that receives less attention in vendor messaging: the effect of AI-generated summaries on analyst reasoning over time. Generative AI systems are effective at transforming complex alert data into concise narrative. That makes them a strong starting point for investigation. It also creates conditions where analysts defer to confidence-weighted AI output rather than conducting evidence-weighted analysis.

“We have observed scenarios where teams become unsettled by an AI-generated conclusion even when the underlying data suggests a more benign explanation,” Gillespie said. “AI improves comprehension speed. It can degrade judgment if its outputs are treated as authoritative.”

The staff reduction claim

Gillespie pushes back on vendor narratives that position AI primarily as a cost-reduction mechanism. In Cybanetix’s experience, improvements in investigative capability have emerged from analysts retracing AI logic and validating its investigative steps. The value resembles guided learning. “Claims that frame AI primarily as a staff-reduction mechanism should be treated with caution,” Gillespie said. “When AI is presented as a cost-reduction tool rather than a capability amplifier, we have observed risk being shifted and massaged rather than removed.”

The report’s case studies offer a more varied picture. One CISO at a large European enterprise did experience workforce disruption after deploying an AI-driven managed detection and response platform: roles focused on phishing triage and header analysis were automated within weeks, and the security operations team went through a reorganization. The CISO described the transition as reactive and said that, in hindsight, he would have invested sooner in creating new positions. A sole practitioner at a U.S. conservation NGO deployed a different AI SOC platform at roughly half the cost of a previous human MDR arrangement, gaining correlation and investigation depth the previous service did not provide.

What credible vendors would do differently

Chuvakin argues that if buyers demanded evidence of adoption depth under real incident pressure, vendor roadmaps would shift away from autonomous agent positioning and toward capabilities that help analysts resolve incidents faster. “A good example here is Anthropic’s Claude and its specialized agents such as the code-simplifier agent,” he said. “They don’t claim to replace a human engineer. They solve a very specific narrow set of tasks. Compare that with some of the over-the-top positioning in cybersecurity of ‘Autonomous AI Threat Hunters’ or ‘Agentic SOC teams.’”

Rochford identifies a set of capabilities that credible vendors would prioritize if model accuracy stopped improving: stronger safety and reliability engineering, deterministic logic where applicable, multi-state outcomes in place of binary verdicts, mandatory circuit breakers that halt automated response chains when confidence drops, and post-incident explainability that allows analysts to verify why a model reached a specific conclusion.
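Two of those ideas, multi-state outcomes and a confidence-based circuit breaker, can be illustrated with a minimal sketch. Everything here is hypothetical: the `Verdict` states, the `run_response_chain` function, the action names, and the 0.9 threshold are assumptions for illustration and do not come from the report or any vendor product.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    """Multi-state outcome in place of a binary malicious/benign verdict."""
    MALICIOUS = "malicious"
    SUSPICIOUS = "suspicious"
    INCONCLUSIVE = "inconclusive"
    BENIGN = "benign"


@dataclass
class StepResult:
    action: str
    executed: bool
    reason: str  # kept so an analyst can review each decision afterwards


def run_response_chain(verdict, steps, min_confidence=0.9):
    """Walk a chain of (action, confidence) steps.

    The breaker trips on anything other than a MALICIOUS verdict, or on the
    first step whose confidence falls below min_confidence. Once tripped,
    every remaining step is handed to a human instead of being executed.
    """
    log = []
    tripped = verdict is not Verdict.MALICIOUS
    for action, confidence in steps:
        if not tripped and confidence < min_confidence:
            tripped = True
        if tripped:
            log.append(StepResult(action, False, "halted: escalated to analyst"))
        else:
            reason = f"confidence {confidence:.2f} >= {min_confidence:.2f}"
            log.append(StepResult(action, True, reason))
    return log


# Hypothetical chain: confidence drops at the host isolation step.
chain = [("enrich_alert", 0.97), ("isolate_host", 0.62), ("disable_account", 0.95)]
for step in run_response_chain(Verdict.MALICIOUS, chain):
    print(step)
```

In this sketch the breaker stays open once it trips: the account disablement step is not executed even though its own confidence is high, because an earlier step in the same chain fell below the threshold. A real system would need a considered policy for when and how the chain resumes.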

“If low adoption is blamed on user psychology, change resistance, or fear of AI, it’s a red flag,” Rochford said. “A lot of AI products simply aren’t enterprise-ready. That’s what the adoption numbers are telling us.”