Hackers Can Hijack Your Terminal via Prompt Injection Using LLM-Powered Apps

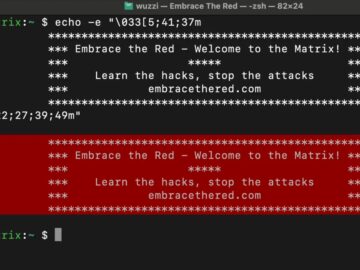

Researchers have uncovered that Large Language Models (LLMs) can generate and manipulate ANSI escape codes, potentially creating new security vulnerabilities in terminal-based applications. ANSI escape…
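One way a terminal-based application could reduce this risk is to strip escape sequences from model output before printing it. The following is a minimal sketch under stated assumptions, not a method described by the researchers: the function name `sanitize_for_terminal` and both patterns are hypothetical, and the regular expressions cover only the common CSI, OSC, and two-character escape forms rather than every sequence a terminal may accept.

```python
import re

# Assumption: these patterns cover the common escape forms only.
ANSI_PATTERN = re.compile(
    r"\x1b\[[0-?]*[ -/]*[@-~]"              # CSI: ESC [ params intermediates final
    r"|\x1b\][^\x07\x1b]*(?:\x07|\x1b\\)"   # OSC: ESC ] ... terminated by BEL or ESC \
    r"|\x1b[@-Z\\-_]"                       # other two-character ESC sequences
)

# Remaining C0 control characters and DEL, keeping newline and tab.
CONTROL_PATTERN = re.compile(r"[\x00-\x08\x0b-\x1f\x7f]")


def sanitize_for_terminal(text: str) -> str:
    """Remove escape sequences and stray control characters from untrusted text."""
    text = ANSI_PATTERN.sub("", text)
    return CONTROL_PATTERN.sub("", text)


if __name__ == "__main__":
    # Hypothetical LLM output that tries to recolor text and set the window title.
    untrusted = "\x1b[31mred\x1b[0m and \x1b]0;title\x07plain"
    print(sanitize_for_terminal(untrusted))
```

Stripping rather than escaping is a design choice made here for brevity; an application that needs to show the raw bytes for auditing could instead render them in a visible form such as `repr(text)`.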