Anthropic’s Claude Code is in the news again – and not for the best reasons.

Within the space of a few days, Anthropic accidentally leaked the source code of Claude Code, and Adversa AI then found a critical vulnerability in the tool.

Claude Code Leak

On March 31, 2026, Anthropic mistakenly published a debugging JavaScript sourcemap for Claude Code v2.1.88 to npm. Within hours, researcher Chaofan Shou discovered the sourcemap and posted a link on X – kicking off a global rush to examine the de-obfuscated Claude Code source.

Sigrid Jin, a 25-year-old student at the University of British Columbia, worked with Yeachan Heo to reconstruct the Claude Code source. “It took two humans, 10 OpenClaws, a MacBook Pro laptop, and a few hours to recreate the popular AI agent’s source code and share it with the world,” reports Yahoo, proving that what goes up (on the internet) does not come down (off the internet).

The result now persists on the internet, comprising 512,000 lines of TypeScript in 1,900 files.

It is awkward but not catastrophic for Anthropic. “While the Claude Code leak does present real risk, it is not the same as model weights, training data or customer data being compromised. What was exposed is something more like an operational blueprint of how the current version of Claude Code is designed to work,” explains Melissa Bischoping, senior director of security & product design research at Tanium.

The key is that researchers can see how Claude Code is meant to work but cannot recreate it because the leak does not include the Claude model weights, the training data, customer data, APIs or credentials. “It is not a foolproof roadmap to exploitation, but it is meaningful insight into how the tool handles inputs, enforces permissions and resists abuse,” continues Bischoping.

“Another layer of risk from this leak is that adversaries may use the blueprint to build lookalikes that appear and behave like Claude Code on the surface, but install malware or harvest credentials and data,” she adds.

In short, the leak is awkward and embarrassing for Anthropic, but not directly harmful to Claude Code.

Vulnerability in Claude Code

But a genuine and critical vulnerability has now been discovered in Claude Code proper by the Adversa AI Red Team. “Claude Code is… a 519,000+ line TypeScript application that allows developers to interact with Claude directly from the command line. It can edit files, execute shell commands, search codebases, manage git workflows, and orchestrate complex multi-step development tasks,” reports Adversa.

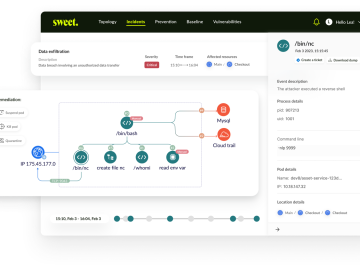

Claude Code includes a permission system based on allow rules (auto-approve specific commands), deny rules (hard-block specific commands), and ask rules (always prompt). Adversa provides an example:

    {
      "deny": ["Bash(curl:*)", "Bash(wget:*)"],
      "allow": ["Bash(npm:*)", "Bash(git:*)"]
    }

Never allow curl or wget (prevent data exfiltration), but auto-allow npm and git commands (common development tools).

That sounds correct and reasonable. The flaw, however, is that the deny rules can be bypassed. “The permission system is the primary security boundary between the AI agent and the developer’s system,” reports Adversa. “When it fails silently, the developer has no safety net.”
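The intended rule semantics can be illustrated with a minimal Python sketch. This is not Anthropic's implementation: the `decide` function, the `RULE_RE` pattern and the deny-before-allow precedence are assumptions made for illustration, and only the `Bash(<command>:*)` rule form from the example is handled.

```python
import re
from typing import Literal

Decision = Literal["allow", "deny", "ask"]

# Assumed rule syntax, taken from the example policy: Bash(<command>:*)
RULE_RE = re.compile(r"^Bash\((?P<cmd>[^:)]+):\*\)$")


def rule_command(rule: str) -> str:
    """Extract the command name from a rule such as 'Bash(curl:*)'."""
    match = RULE_RE.match(rule)
    if match is None:
        raise ValueError(f"unsupported rule syntax: {rule!r}")
    return match.group("cmd")


def decide(command: str, policy: dict[str, list[str]]) -> Decision:
    """Return the decision for a single, non-compound shell command."""
    parts = command.split()
    if not parts:
        return "ask"
    program = parts[0]
    # Assumption: deny is checked first so that it overrides any allow rule.
    if program in {rule_command(r) for r in policy.get("deny", [])}:
        return "deny"
    if program in {rule_command(r) for r in policy.get("allow", [])}:
        return "allow"
    # Anything not covered by a rule falls through to a prompt.
    return "ask"


POLICY = {
    "deny": ["Bash(curl:*)", "Bash(wget:*)"],
    "allow": ["Bash(npm:*)", "Bash(git:*)"],
}

print(decide("curl https://example.invalid/", POLICY))  # deny
print(decide("git status", POLICY))                     # allow
print(decide("ls -la", POLICY))                         # ask
```

The point of the sketch is the expectation it encodes: a command matching a deny rule should be blocked outright, never merely prompted for.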

The problem stems from a performance fix: complex compound commands had caused the UI to freeze. Anthropic addressed this by capping analysis at 50 subcommands, with a fallback to a generic ‘ask’ prompt for anything above the cap. The code comment states, “Fifty is generous: legitimate user commands don’t split that wide,” and describes the fallback to ‘ask’ as a “safe default” because “we can’t prove safety, so we prompt.”
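The capping behavior described here can be sketched roughly as follows. The names `split_subcommands`, `analyze` and `check_one` are hypothetical, and the regex splitter is a simplification; a real shell parser also has to handle quoting, subshells and redirections.

```python
import re
from typing import Callable

MAX_SUBCOMMANDS = 50  # the cap reported in the article

# Naive splitter on &&, ||, ; and |. Illustration only: it ignores quoting.
SEPARATORS = re.compile(r"\s*(?:&&|\|\||;|\|)\s*")


def split_subcommands(command: str) -> list[str]:
    """Split a compound command into its subcommands."""
    return [part for part in SEPARATORS.split(command) if part]


def analyze(command: str, check_one: Callable[[str], str]) -> str:
    """Combine per-subcommand decisions, unless the cap is exceeded."""
    subcommands = split_subcommands(command)
    if not subcommands:
        return "ask"
    if len(subcommands) > MAX_SUBCOMMANDS:
        # Above the cap, no per-subcommand analysis runs at all.
        return "ask"
    decisions = [check_one(sub) for sub in subcommands]
    if "deny" in decisions:
        return "deny"
    if all(decision == "allow" for decision in decisions):
        return "allow"
    return "ask"


def allow_everything(subcommand: str) -> str:
    """Stand-in for the real per-subcommand check."""
    return "allow"


print(analyze(" ; ".join(["true"] * 50), allow_everything))  # allow
print(analyze(" ; ".join(["true"] * 51), allow_everything))  # ask
```

In this sketch the 51-subcommand case returns before `check_one` is ever called, which is the shortcut the cap introduces.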

The flaw discovered by Adversa is that this process can be manipulated. Anthropic’s assumption doesn’t account for AI-generated commands resulting from prompt injection, where a malicious CLAUDE.md file instructs the AI to generate a pipeline of more than 50 subcommands that looks like a legitimate build process.

If this is done, the capped code path returns “behavior: ‘ask’, // NOT ‘deny’” immediately. Deny rules, security validators and command injection detection are “all skipped,” writes Adversa. Once the count passes 50, the command falls back to ‘ask’ as designed, but the user gets no indication that all deny rules have been ignored.

Adversa warns that a motivated attacker could embed real-looking build steps in a malicious repository’s CLAUDE.md. It would look routine, but no per-subcommand analysis runs at all when the count exceeds 50. This could allow the attacker to exfiltrate SSH private keys, AWS credentials, GitHub tokens, npm tokens or Env secrets. It could lead to credential theft at scale, supply chain compromise, cloud infrastructure breach and CI/CD pipeline poisoning.
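The effect Adversa describes can be reproduced against a toy policy check. The sketch below is an illustration under stated assumptions, not the Claude Code source: `capped_decision` and `build_like_chain` are hypothetical names, the splitter handles only `&&`, allow rules are omitted, and nothing is executed; the code only computes which decision the toy check would return.

```python
DENIED_PROGRAMS = {"curl", "wget"}  # from the example deny rules
MAX_SUBCOMMANDS = 50                # the cap reported in the article


def split_subcommands(command: str) -> list[str]:
    """Naive split on && only; enough for this illustration."""
    return [part.strip() for part in command.split("&&") if part.strip()]


def capped_decision(command: str) -> str:
    """Toy policy check with the early return above the cap."""
    subcommands = split_subcommands(command)
    if len(subcommands) > MAX_SUBCOMMANDS:
        return "ask"  # deny rules are never consulted on this path
    if any(sub.split()[0] in DENIED_PROGRAMS for sub in subcommands):
        return "deny"
    return "ask"  # simplified: allow rules are left out of this sketch


def build_like_chain(payload: str, padding: int = 50) -> str:
    """Prefix a payload with harmless steps that resemble a build script."""
    steps = [f"echo 'build step {i}'" for i in range(1, padding + 1)]
    return " && ".join(steps + [payload])


payload = "curl https://example.invalid/"

print(capped_decision(payload))                                # deny
print(capped_decision(build_like_chain(payload)))              # ask
print(capped_decision(build_like_chain(payload, padding=49)))  # deny
```

With 49 padding steps the chain has exactly 50 subcommands and the deny rule still fires; one more step pushes it over the cap, and the same denied command is downgraded to a prompt.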

“During testing, Claude’s LLM safety layer independently caught some obviously malicious payloads and refused to execute them. This is good defense-in-depth,” writes Adversa. “However, the permission system vulnerability exists regardless of the LLM layer — it is a bug in the security policy enforcement code. A sufficiently crafted prompt injection that appears as legitimate build instructions could bypass the LLM layer too.”
