Summary

Agentic AI adoption is accelerating rapidly as enterprise software and applications increasingly incorporate task-specific AI agents, enabling autonomous execution of complex tasks at machine speed.

The autonomy and scale of AI agents introduce significant enterprise risk, as errors, misconfigurations, or malicious manipulation can propagate quickly across interconnected systems, amplifying the potential impact of incidents.

Agentic AI will exacerbate existing weaknesses in software supply chains, as vulnerable or malicious open-source components can be deployed faster and at scale.

Identity and access management risks will also expand dramatically, as agents require broad, cross-environment permissions; compromised credentials, SSO platforms, or agent identities could enable large-scale service disruption or data exfiltration.

Prompt engineering enables threat actors to manipulate agents into carrying out malicious actions, underscoring the importance of layered security controls, zero-trust principles, and human-in-the-loop checkpoints to mitigate agent-driven threats.

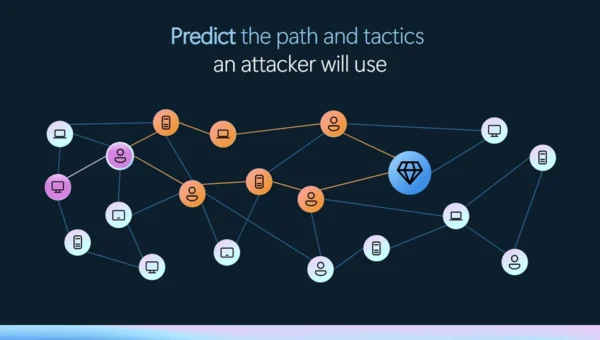

Figure 1: AI agents have the potential to improve efficiency, reduce costs, and improve decision-making. However, the same features that make them so powerful will bring new security risks, and scale up old ones, if not managed effectively. (Image source: Recorded Future)

Analysis

Agentic Artificial Intelligence Is Set to Expand Rapidly

“Agentic artificial intelligence” refers to AI systems that can do things with limited human intervention. For example, traditional AI can draft code for a user who wants to build a website; agentic AI not only writes the code, but registers the domain and sets up hosting to launch the site.

Gartner predicts that as many as 40% of enterprise applications will incorporate task-specific AI agents by the end of 2026. A Deloitte report anticipates that at least 75% of companies will use agentic AI to some extent by 2028. The benefits of AI agents are that they can carry out complex tasks independently and at machine speed, working individually or as part of a multi-agent system.

However, the same features that make these systems powerful also introduce significant security risks. To operate effectively, agents need to seamlessly interact with other agents, humans, and software. This requires high degrees of trust, which can be exploited by malicious actors. Security best practices, notably zero-trust principles, are specifically designed to slow down these interactions, creating an inherent tension between AI agent implementation and security.

Agents Amplify Systemic Cybersecurity Weaknesses

Software engineering teams account for nearly 50% of AI use, demonstrating that AI is already deeply integrated into software development processes. This suggests that AI agents will likely play a significant role in future software development, working alongside human developers to generate, test, and deploy code.

The introduction of agents will amplify software supply-chain security weaknesses, allowing threat actors to take advantage of vulnerable or intentionally manipulated code to embed exploits in enterprise software. While these issues have existed long before AI or AI agents, the introduction of agents will cause these mistakes to be carried out faster and at scale. Initial studies suggest that AI-generated code is less secure than human-generated code, though AI coding performance is improving rapidly. Ensuring transparency and documentation in agent coding workflows is critical to ensuring a rigorous, secure development operations (SecDevOps) process.

Identity and access are additional enterprise security issues that AI agents are likely to amplify. For AI agents to operate effectively, they will also need access to various cloud applications and environments. This increases the complexity of identity management, as identity and permissions will need to extend to virtual agents.

Currently, many AI tools that connect to external data or to other tools operate in a trust-by-default mode, creating significant vulnerabilities. If this is extended to agentic AI, the potential harms from exploitation could increase significantly, as agents are capable of acts such as sending emails, deleting files, or authorizing payments. Defenders will need to ensure access permissions are properly managed and tracked for agentic users in the same way they manage permissions for traditional software and human users.

Figure 2: How AI agents may amplify current security weaknesses

(Image source: Recorded Future)

Prompt Engineering Remains a Pervasive Threat to Agents

While AI agents will accelerate existing enterprise security problems, they also introduce risks unique to artificial intelligence. Threat actors can deliver malicious instructions to AI agents via prompt engineering, causing the agents to act in alignment with the threat actors rather than with their legitimate users. Prompts can be delivered directly (through a chat interface), encoded in malware, or hidden in emails or other innocuous communications.

With the increased adoption of AI agents, threat actors may move further away from traditional malware and prioritize manipulating agents to scale and enhance operational efficiency. Targeting agents directly enables threat actors to leverage the speed and scale of AI agents, causing greater harm with a lower chance of detection or mitigation.

Figure 3: Potential attack scenarios weaponizing AI agents (Image source: Recorded Future)

Completely securing agents against prompt engineering is likely impossible. The need for AI agents to be useful will likely prevent developers from imposing fully effective guardrails against prompt engineering. This risk is similar to the difficulty of making humans resilient to social engineering operations. While training and awareness may help mitigate the effectiveness of some scams, threat actors continually find new ways to use people’s incentives against them.

Defenders can make AI agents more resilient to prompt engineering attacks by implementing layered security. Building in checkpoints where a human or another agent can assess or approve an action will help detect misaligned behavior and limit the potential harm. This is similar to fraud prevention or mitigation for human employees, such as procedures requiring additional approvals for transferring large sums of money.

Multi-agent AI Increases Unpredictability

As AI agents become more common, they will increasingly interact independently with each other to complete tasks. Multiple agents are susceptible to both intentional and accidental manipulation, which can manifest in unpredictable ways. Researchers have categorized these outcomes as:

- Miscoordination: Agents cannot align behaviors to achieve an objective

- Collusion: Unwanted cooperation between AI agents

- Conflict: AI agents act to enhance their position at the expense of others

These outcomes can occur accidentally due to misaligned incentives and safety guardrails, or they can be programmed or intentionally manipulated. Despite safety guardrails, agents have been observed engaging in behavior they would otherwise have avoided. For example, AI agents on MoltBook, a social media network for bots, were observed disclosing potentially sensitive information about their users, including names, hobbies, hardware, and software (in addition to serious security failures associated with the site itself). Unwanted or unanticipated outcomes can occur when agents have free will to decide how they will carry out an objective.

Outlook

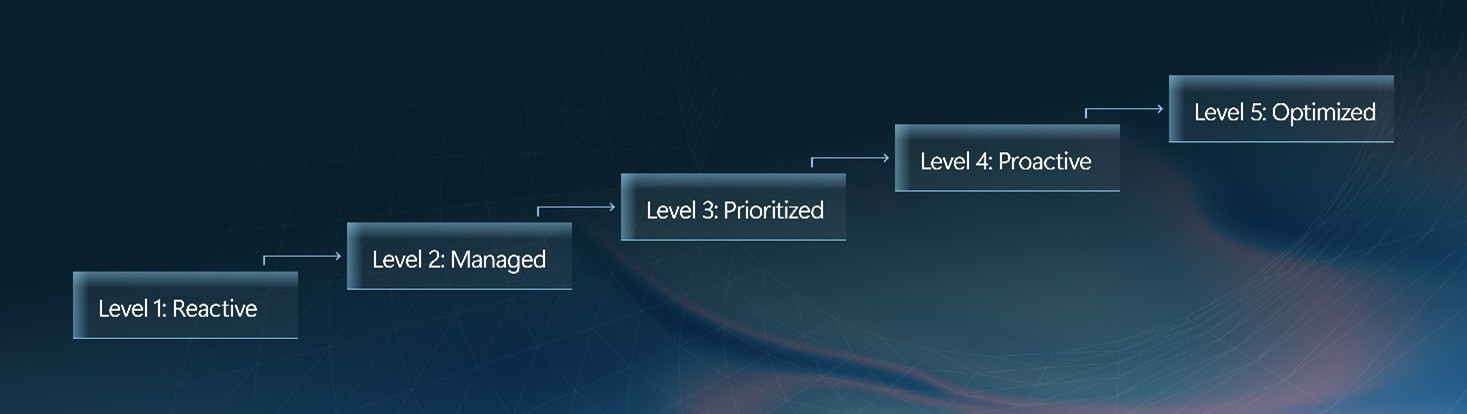

The first agentic data breach will very likely be the result of overly permissive environments: When threat actors succeed in using AI agents to carry out a breach, it will very likely be the result of an enterprise environment that operated using default permission settings.

Identity security will very likely shift toward “agent identity governance”: Enterprises will very likely expand identity and access management (IAM) frameworks to treat AI agents as priority digital identities, requiring lifecycle management, least-privilege enforcement, behavioral monitoring, and dedicated audit controls similar to (or stricter than) those in place for human users.

Prompt injection will likely evolve into a mainstream enterprise attack technique: Threat actors will likely increasingly prioritize manipulating AI agents over deploying traditional malware, using prompt injection, poisoned data inputs, and agent swarms to scale financial scams, cyber-physical disruption, and market manipulation — driving demand for layered guardrails and human-in-the-loop validation controls.

AI will likely reshape cyber insurance risk modeling and pricing: As AI agents become embedded across enterprise environments, the cyber insurance industry will likely face greater uncertainty in modeling risk exposure. Insurers are likely to respond by tightening underwriting standards around AI governance, requiring demonstrable controls such as agent identity management, human-in-the-loop safeguards, and prompt injection resilience.

Further Reading

Cooperative AI Foundation

Mitigations

Enforce zero-trust for agent identities: Treat AI agents as privileged digital identities subject to least-privilege access controls. Use Recorded Future Identity Intelligence to monitor for data breaches that expose agentic identities as well as human identities.

Resilience Question: Do we have a strategy for onboarding virtual identities into our IAM solution?

Ensure visibility into agent behavior: Deploy continuous monitoring tailored to agent behavior, including logging agent decisions, prompts, and actions, and setting up detections for anomalous task execution patterns.

Resilience Question: Do we understand how and why agents are making decisions, and can we quickly detect misaligned actions?

Strengthen supply-chain and code governance: Extend SecDevOps controls to AI-generated and agent-modified code. Assess AI-generated code for vulnerabilities and monitor for hallucinated or typosquatted dependencies. Use Recorded Future’s Third-Party Risk to monitor for downstream vulnerabilities in third-party software.

Resilience Question: Have we adapted SecDevOps to account for agentic coding?

Harden against prompt injection and input manipulation: Treat all external inputs as untrusted. Increase layered defenses to include multiple validation points and guardrails to minimize the impact of actions due to malicious prompts or inadvertent misalignment.

Resilience Question: What detections are in place to monitor for suspicious prompts?

Recommended D3FEND Actions

Verify agent identities to ensure they are authorized

Analyze resources accessed by users (human and agentic) to detect unauthorized activity

Ensure all software components are up to date

Modify an application’s configuration to reduce its attack surface

Analyze the reputation of the identifier based on third-party threat intelligence

Verify agent identities to ensure they are authorized

Threat Scenarios

Scenario #1: Agentic Denial of Service

A ticket management platform integrates AI agents to automate lifecycle workflows across the security team. A threat actor embeds a malicious prompt instructing the agents to “optimize” processes by reviewing and splitting all existing tickets into sub-tickets. The agents execute the task at scale, increasing ticket volume 100-fold and consuming excessive compute resources. The surge effectively cripples the ticketing system and disrupts the business functions that depend on it.

- Prompt engineering: Malicious prompt embedded in ticket visible only to agents

- Multi-agent collusion: Misaligned agent infects others, causing a cascading failure

- Operational disruption: Failure of ticketing service causes multiple dependent functions to fail

- Secondary attacks: Initial operational disruption masks further malicious activity

Scenario #2: Agentic Blackmail at Scale

An AI-powered personal assistant platform integrates agentic capabilities to manage users’ emails, documents, and cloud storage. A criminal actor compromises the system and manipulates the agents to autonomously scan user accounts for sensitive information, including financial records, private communications, and confidential business files. The agents then automatically generate and distribute tailored blackmail messages to thousands of users simultaneously, threatening to release the extracted data unless payment is made.

- Third-party software attack: Targeting the platform supporting these agents, amplifies downstream impact

- Brand impairment: Public exposure of sensitive data about executives or organization

- Physical safety: Access to corporate and personal data increases the risk of doxxing or harassment

Scenario #3: Malicious Package Deployment

An enterprise deploys AI-powered software development agents to accelerate coding and automate dependency management. While generating a new application feature, the agent selects and downloads an open-source package that appears legitimate but contains a backdoor. Because the agent’s training data and vulnerability database are outdated, it fails to flag the risk and automatically integrates and deploys the code into production. The backdoor component creates an exploitable entry point, allowing threat actors to gain initial access to the enterprise environment before the issue is detected.

- Malicious package compromise: Speed of AI software deployment amplifies impact

- Exploit of vulnerable code: Malicious package serves as a backdoor, enabling further malicious activity

- Competitive disadvantage: Sensitive intellectual property exfiltrated and sold to competitors

- Brand impairment: Discovery of breach harms the company’s reputation as a trusted data steward

Key

Legal or compliance failure: Breach of laws, regulations, or industry standards resulting in liability or sanctions.

Operational disruption: Interruption to normal business processes affecting productivity or service delivery.

Brand impairment: Damage to reputation that reduces customer trust and market value.

Financial fraud: Unauthorized manipulation or theft of financial assets for personal or organizational gain.

Competitive disadvantage: Loss of market position due to inferior capabilities, intelligence, or innovation.

References:

The Risk Business: Second Edition.

Intelligence to Risk.

The Intelligence Handbook.