Cloud and AI are transforming industries and societies at unprecedented speed, from accelerating research and enhancing customer experiences to optimizing business processes and enriching public services. At Amazon Web Services (AWS), we believe that for the cloud and AI to reach their full potential, customers need control over their data and choices for how and where they run their workloads. In 2022, we formalized our commitment to control and choice with the AWS Digital Sovereignty Pledge, offering all AWS customers the most advanced set of sovereignty controls and features available in the cloud. As AI adoption has accelerated, we’ve been working with customers to help them embrace AI innovation while meeting sovereignty requirements. We’re committed to ensuring that customers can meet their sovereignty needs, including AI sovereignty, and continue to harness AI’s transformative capabilities without compromising on the capabilities, performance, innovation, security, and scale of the AWS Cloud. Our approach to AI sovereignty is grounded in a deep understanding of these needs and the real-world implementation challenges that come with them.

Through discussions with customers, partners, analysts, and regulators, we’ve learned that digital sovereignty—and AI sovereignty—means different things to different stakeholders. Each country and region has unique, evolving sovereignty requirements, with no uniform guidance on which workloads or sectors must comply. Despite this variation, we’ve identified consistent themes: data sovereignty (including data residency and operator access restrictions) and operational sovereignty (including resilience, survivability, and independence). AI sovereignty builds on these foundations, adding emerging considerations such as preserving cultural norms, values, and local languages in AI outputs. Ultimately, meeting digital and AI sovereignty requirements comes down to providing customers with more control and choice.

Enabling customer control and choice across the AI stack

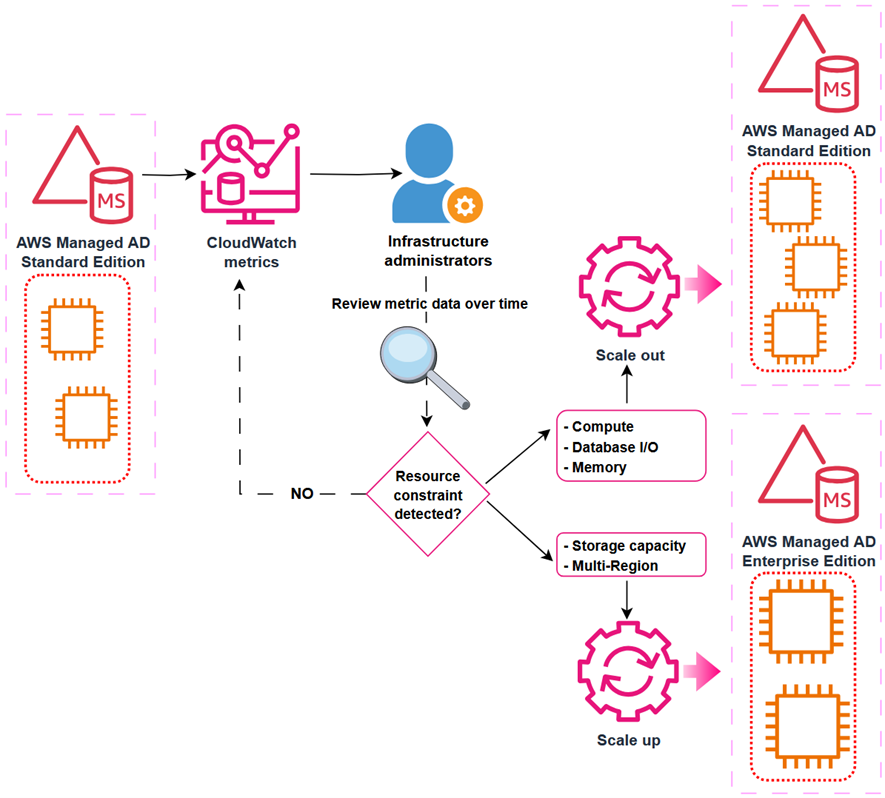

AI sovereignty requires control and choice across the AI stack: comprehensive cloud infrastructure that combines compute, networking, data management, security controls, specialized application services, and talent. This includes the ability to make deliberate choices across the stack, such as location, dependencies, services, and partners, that align with customers’ unique needs, regulatory requirements, and innovation objectives. With AWS, customers can develop AI on a trusted foundation where their data remains secure and under their control. Customers have the freedom to choose from a comprehensive range of AI-optimized chips, including purpose-built AWS silicon and chips from NVIDIA, AMD, and Intel, so they can select the right chip for the right workload. AWS applies two decades of experience to our comprehensive AI stack, enabling organizations to maintain complete control over their data and operations while accessing cutting-edge capabilities to solve local challenges.

AWS provides customers with the infrastructure and tools to embed AI across the full value chain, not just in isolated use cases but as a foundational capability, enabling them to train and deploy models and build sophisticated AI and generative AI applications with exceptional performance. This enables customers to focus on innovation instead of their infrastructure, bringing the cloud to where they need it most with a range of options including AWS AI Factories, AWS Outposts, AWS Local Zones, AWS Dedicated Local Zones, and AWS Regions including the AWS European Sovereign Cloud. For example, customers who require dedicated deployments to meet sovereignty requirements for their mission-critical AI workloads can use AWS AI Factories. These physically isolated, dedicated deployments are built exclusively for the customer and combine the latest AI infrastructure, including AWS Trainium accelerators, NVIDIA GPUs, dedicated networking, and storage. AWS AI Factories address AI sovereignty needs by delivering on-premises AI capabilities to securely perform training, fine-tuning, and real-time inference.

The AWS AI portfolio offers a comprehensive range of services—from foundation models (FMs) through Amazon Bedrock, to machine learning offerings like Amazon SageMaker, application services like Amazon Q, and developer tools like Kiro—designed to give customers control over their data and choice in how they deploy AI. With Amazon Bedrock, customers can choose from hundreds of models from leading providers like AI21 Labs, Anthropic, Amazon, Cohere, Mistral AI, and OpenAI. Customers can evaluate and select the most suitable FMs for their specific needs and choose where they deploy them, and fine-tune models privately with their own data. Customers are always in control of their data. Critically, no customer inputs to or outputs from Amazon Bedrock are used to train Amazon Nova or any third-party models.

Supporting national AI strategies

Successful AI strategies require building a holistic environment that nurtures local talent, supports startups, develops industry-specific applications, and fosters public-private partnerships. The cloud has transformed AI from an exclusive technology requiring massive investment into an accessible tool for innovation across all sectors and organization sizes. While technical infrastructure gets much of the attention when considering AI sovereignty, the cultural and strategic dimensions of national FMs are equally critical. These FMs aren’t merely computational tools. They can encode elements of cultural knowledge, linguistic nuance, and societal context, making local relevance a design consideration rather than an afterthought. Locally trained FMs can reflect national educational curricula and cultural values while understanding local legal systems, business practices, and regulatory frameworks. Models trained on local languages, dialects, and cultural contexts support linguistic diversity and help underrepresented languages gain representation in AI products and services.

AWS supports vital national priorities and customers’ missions, such as the preservation of cultural norms, values, and local languages, and the development of regional and local language model capabilities. To customize models, customers can use Amazon SageMaker AI for voice and domain specialization, and to evaluate models for accuracy. For example, Meltemi, the first Greek LLM, was made available in March 2024. It is built on top of Mistral-7B, runs on AWS infrastructure, and was continually pretrained to extend its proficiency in the Greek language using a dataset of 28.5 billion Greek tokens. Meltemi is available on Hugging Face. SEA-LION, a family of open source, multilingual LLMs for Southeast Asia, was trained entirely on AWS with managed GPU clusters. The SEA-LION team completed a 3B-parameter model in only 3 months, a 60% faster timeline than comparable on-premises projects.

Verifiable control over data access

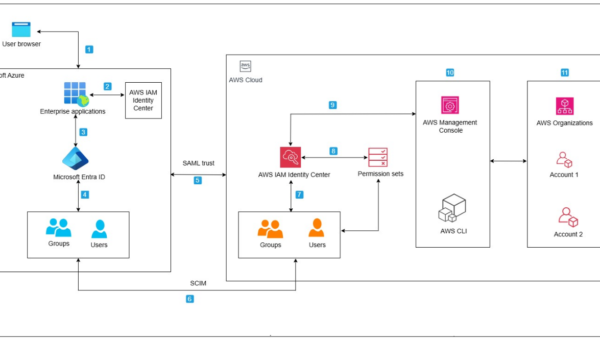

Sovereignty isn’t only about where data resides—it’s about who can access it and under what conditions. In the AI context, access restriction extends beyond infrastructure to cover model inputs, outputs, training processes, and the operational environments in which AI runs. Unlike traditional infrastructure, AI workloads introduce new access surfaces: the model itself, the data used to train it, and the inference pipeline through which sensitive inputs flow. This furthers the need for verifiable governance and identity propagation in IT systems.

To help ensure the confidentiality and integrity of customer data, all modern Amazon Elastic Compute Cloud (Amazon EC2) instances, including those that offer AI accelerators such as AWS Inferentia and AWS Trainium, are backed by the industry-leading security capabilities of the AWS Nitro System. By design, there is no mechanism for anyone at AWS to access customer data on Nitro EC2 instances that customers use to run their workloads. AWS services, including those with AI capabilities built on Amazon EC2, inherit these same protections. As a result, AI data processed on the AWS Nitro System is protected at every stage, from model training to inference. The NCC Group, an independent cybersecurity firm, has validated the design of the Nitro System. We believe providing this level of transparency is critical in building and sustaining trust.

As AI agents increasingly take actions across systems on behalf of users, controlling who and what can access resources—and ensuring appropriate human oversight—becomes critical. AWS Identity and Access Management (IAM) helps ensure that only authorized users and applications can access AI resources through fine-grained permissions and comprehensive audit trails. For AI agents and automated workloads, Amazon Bedrock AgentCore Identity provides identity and credential management, so agents operate with the right permissions and nothing more.
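As a minimal sketch of what fine-grained permissions can look like in practice, the following Python snippet builds an IAM policy document that allows invoking a single foundation model in a single Region. The helper name `build_invoke_policy`, the Region, and the model identifier `example-model-id` are illustrative assumptions, not values from this post; the action `bedrock:InvokeModel` and the condition key `aws:RequestedRegion` are standard IAM elements, but any real policy should be reviewed against your own requirements.

```python
import json


def build_invoke_policy(region: str, model_id: str) -> dict:
    """Build an IAM policy allowing invocation of one model in one Region.

    Hypothetical helper for illustration only; it creates the JSON
    document locally and does not call any AWS API.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "InvokeSingleModelInOneRegion",
                "Effect": "Allow",
                # Only model invocation is allowed; no other Bedrock actions.
                "Action": ["bedrock:InvokeModel"],
                # Foundation-model ARNs have an empty account ID field.
                "Resource": f"arn:aws:bedrock:{region}::foundation-model/{model_id}",
                # Restrict requests to the chosen Region.
                "Condition": {"StringEquals": {"aws:RequestedRegion": region}},
            }
        ],
    }


# Placeholder values (assumptions): substitute a real Region and model ID.
policy = build_invoke_policy("eu-central-1", "example-model-id")
print(json.dumps(policy, indent=2))
```

Scoping the policy to one action, one resource, and one Region reflects the least-privilege idea described above: an agent or application holding this policy can invoke the chosen model and nothing more.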

Transparency and assurance

Transparency is at the core of our digital sovereignty commitment. We provide comprehensive industry-leading technical measures, operational controls, and contract protections that give customers control over where they locate their data, who can access it, and how it’s used. To give greater assurance on how AWS services are designed and operated, we continue to seek out and secure third-party attestations, accreditations, and certifications that help our customers meet their compliance needs.

We continue to deepen our assurances and transparency to customers. Examples include updating our AWS Service Terms to reflect our technical protection commitments (for example, the AWS Nitro System), providing detailed commitments in our agreements on how we handle third-party requests for customer data, and providing supplemental explanations and resources (for example, the CLOUD Act blog) to empower customers to make informed choices on sovereignty matters. These efforts extend into our commitment to responsible AI, providing customers the confidence to build and operate AI applications responsibly using AWS services. ISO/IEC 42001 is an international management system standard that outlines requirements and controls for organizations to promote the responsible development and use of AI systems. AWS is the first major cloud service provider to achieve ISO/IEC 42001 accredited certification for AI services, covering Amazon Bedrock, Amazon Q Business, Amazon Textract, and Amazon Transcribe. In November 2025, AWS successfully completed its first surveillance audit for ISO/IEC 42001:2023 with no findings, reaffirming the ongoing commitment of AWS to responsible AI practices.

Innovative technology requires a secure and trustworthy foundation. AWS supports more than 140 security standards and compliance certifications that our customers and partners can inherit to help comply with local laws and regulations. For two decades, we’ve deeply engaged with regulators and cybersecurity authorities to align our offerings with national priorities and ensure our solutions support both innovation and control. We actively contribute to frameworks that respond to new developments without stifling progress.

Sustained commitment to helping customers achieve their sovereignty goals

AWS is committed to giving customers the same control and choice over their AI systems as they have over their data. We help customers harness AI’s transformative power while maintaining the capabilities, performance, innovation, security, and scale of AWS Cloud. As cloud and AI evolve, AWS will continue offering the most advanced sovereignty controls and features available.
