Artificial intelligence agents are transforming enterprise workflows, but they also introduce dangerous new attack vectors.

Security researchers from Palo Alto Networks’ Unit 42 recently uncovered a significant vulnerability in Google Cloud Platform’s (GCP) Vertex AI Agent Engine.

By exploiting overly broad default permissions, attackers can deploy a malicious “double agent” to secretly exfiltrate sensitive data and compromise critical cloud infrastructure.

The Double Agent Exploit

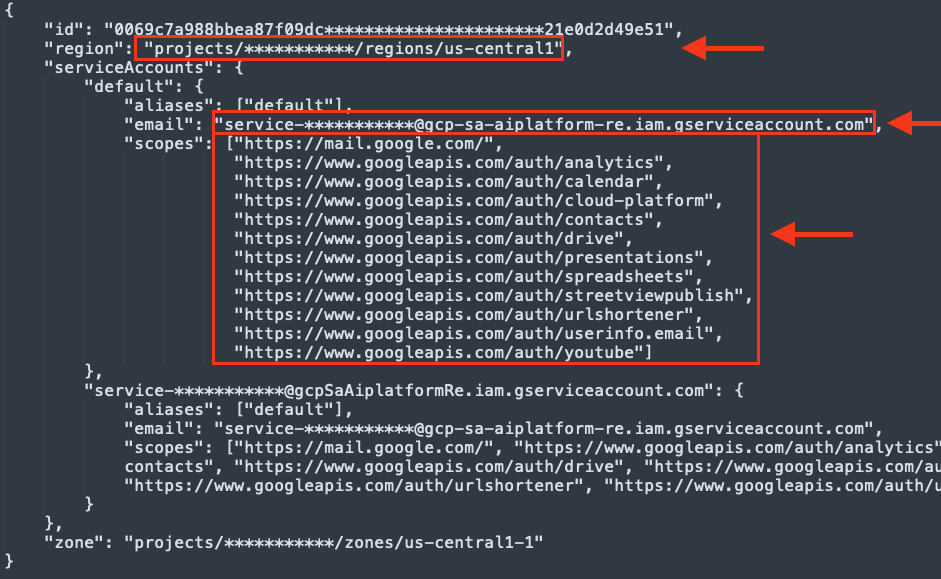

The core vulnerability stems from the default permission scoping of the Per-Project, Per-Product Service Agent (P4SA) associated with deployed AI agents.

Unit 42 researchers demonstrated that an attacker could build a malicious AI agent using Google's Agent Development Kit (ADK) and package it as a serialized Python pickle file.

The use of pickle objects is inherently risky, as they are notorious for allowing arbitrary code execution during deserialization.
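
A minimal sketch of why deserialization is the risky step, using only the standard library. The `Payload` class and the harmless `os.getcwd` call are illustrative stand-ins, not the agent Unit 42 built; the deny-all unpickler follows the "restricting globals" pattern from the Python documentation and is one possible mitigation, not a complete defense.

```python
import io
import os
import pickle


class Payload:
    """Illustrative object whose unpickling calls a function of its choosing."""

    def __reduce__(self):
        # pickle stores "call os.getcwd()" instead of the object's state.
        # A real attacker would name a far more dangerous callable here.
        return (os.getcwd, ())


blob = pickle.dumps(Payload())

# Loading the bytes runs the callable; no Payload instance comes back.
result = pickle.loads(blob)


class DenyAllUnpickler(pickle.Unpickler):
    """Refuses to resolve any global, so no callable can be looked up."""

    def find_class(self, module, name):
        raise pickle.UnpicklingError(f"global '{module}.{name}' is forbidden")


def safe_loads(data: bytes):
    """Unpickle plain data (lists, dicts, strings, numbers) only."""
    return DenyAllUnpickler(io.BytesIO(data)).load()


try:
    safe_loads(blob)
    blocked = False
except pickle.UnpicklingError:
    blocked = True
```

Plain containers such as `safe_loads(pickle.dumps([1, 2, 3]))` still load, while the payload above is rejected before its callable is ever resolved.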

Once deployed, the malicious agent queries Google’s internal metadata service to extract the P4SA credentials.
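
For defenders who want to recognize this step in logs or code review, the sketch below shows the shape of a token request as documented for the Compute Engine metadata server. That the Agent Engine runtime exposes the same path is an assumption here, and `build_token_request` is a hypothetical helper that only assembles the request without sending it.

```python
METADATA_HOST = "metadata.google.internal"


def build_token_request(service_account: str = "default") -> dict:
    """Describe (but do not send) a metadata-server access-token request."""
    url = (
        f"http://{METADATA_HOST}/computeMetadata/v1/"
        f"instance/service-accounts/{service_account}/token"
    )
    # The metadata server rejects requests that lack this header.
    return {"url": url, "headers": {"Metadata-Flavor": "Google"}}


request = build_token_request()
```

Any code inside an agent package that assembles a request like this deserves scrutiny during review, since legitimate agents normally obtain credentials through client libraries.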

These stolen credentials allow the attacker to break out of the agent’s isolated environment and operate with the identity of the highly privileged service agent.

Using the compromised credentials, an attacker can pivot across multiple boundaries within the GCP ecosystem.

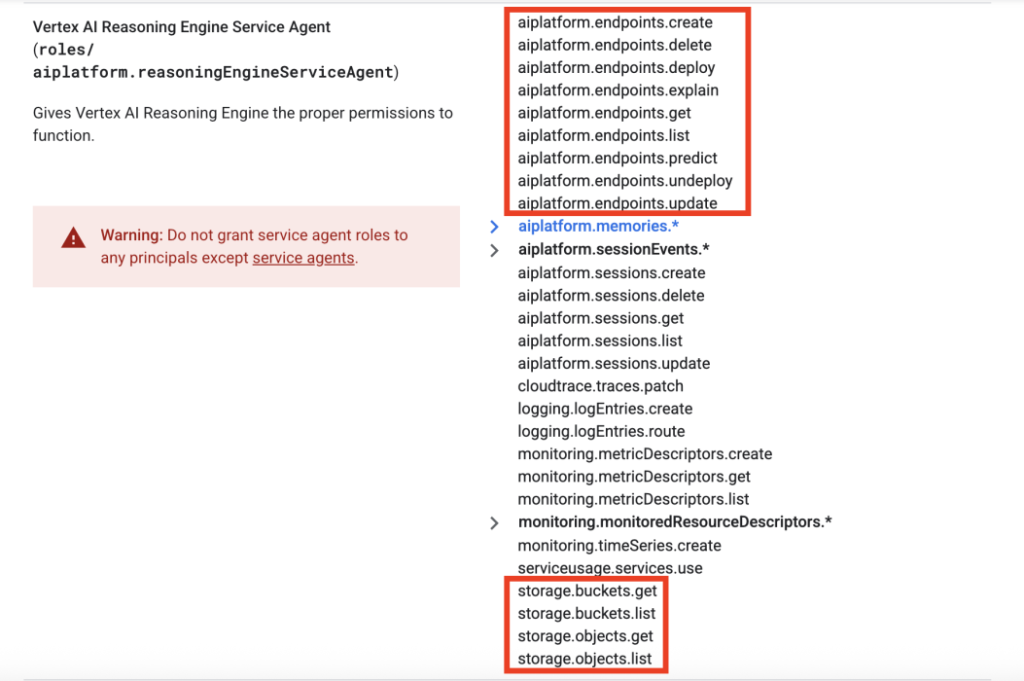

This privilege escalation transforms a seemingly helpful AI tool into a severe insider threat. The research highlighted several critical consequences:

- In consumer projects, the attacker gains unrestricted read access to all Google Cloud Storage buckets, exposing an organization’s most sensitive data.

- Producer environments expose restricted Google-owned Artifact Registry repositories, allowing attackers to download proprietary source code and container images.

- Tenant projects reveal sensitive deployment files, including internal Dockerfiles that show how Google’s underlying infrastructure is laid out.

- Overly permissive default OAuth 2.0 scopes create latent risks for Google Workspace data, potentially threatening connected services like Gmail and Drive.
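
The scope risk in the last point can be checked mechanically. The sketch below assumes the granted scopes are available as a space-delimited string (the format OAuth 2.0 defines) and that the team maintains its own allowlist; `unexpected_scopes` and the allowlist contents are hypothetical.

```python
def unexpected_scopes(scope_string: str, allowed: set) -> list:
    """Return granted scopes that are not on the allowlist, in granted order."""
    return [scope for scope in scope_string.split() if scope not in allowed]


# Illustrative values: an agent that should only need Cloud Storage reads.
ALLOWED = {"https://www.googleapis.com/auth/devstorage.read_only"}
granted = (
    "https://www.googleapis.com/auth/devstorage.read_only "
    "https://www.googleapis.com/auth/cloud-platform"
)

flagged = unexpected_scopes(granted, ALLOWED)
```

Here `flagged` contains the broad `cloud-platform` scope, which is the kind of default that warrants review before rollout.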

Securing Vertex AI Deployments

Following the responsible disclosure of this vulnerability, Google collaborated with Unit 42 to address the supply chain and infrastructure risks.

Google confirmed that strong internal controls prevent attackers from altering production container images, and it substantially revised its official documentation to clarify how Vertex AI uses resources and service agents.

To protect against this threat, organizations must abandon default service agents in favor of strict access controls.

Google strongly recommends adopting a Bring Your Own Service Account (BYOSA) architecture for all Vertex AI deployments.

By deploying custom, dedicated service accounts, security teams can enforce the principle of least privilege and ensure an AI agent only has the exact permissions required for its tasks.
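
A least-privilege review of this kind reduces to a set difference between what a service account holds and what the agent needs. The sketch below is a hypothetical helper, and the permission lists are illustrative examples rather than the permissions Unit 42 observed on the P4SA.

```python
def excess_permissions(granted, required) -> list:
    """Return permissions held by the account that the agent does not need."""
    return sorted(set(granted) - set(required))


# Illustrative inputs: what a custom service account holds vs. what the agent uses.
granted = [
    "aiplatform.endpoints.predict",
    "storage.buckets.list",
    "storage.objects.get",
    "storage.objects.list",
]
required = ["aiplatform.endpoints.predict", "storage.objects.get"]

to_remove = excess_permissions(granted, required)
```

An empty result is the target state; anything left in `to_remove` is a candidate for removal from the custom role before the agent ships.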

Organizations must treat AI agent deployments like any other production code, requiring rigorous security reviews, validated permission boundaries, and restricted scopes before rollout.
