The cybersecurity landscape experienced a major shift in 2025 as threat actors transitioned from experimenting with artificial intelligence to fully integrating it into real-world cyber operations.

According to new insights from the Google Threat Intelligence Group (GTIG) and Mandiant, attackers are now deploying adaptive malware and autonomous AI agents that dynamically modify their behavior during attacks, significantly increasing the speed, scale, and complexity of cyber threats.

Early uses of generative AI in cybercrime largely focused on productivity improvements. Threat actors used large language models (LLMs) to draft phishing emails, translate messages, and assist with basic coding tasks.

However, researchers observed that by the end of 2025, attackers had moved far beyond these limited uses, incorporating AI directly into malware and attack infrastructure.

In the early months of 2025, GTIG observed state-sponsored threat actors linked to China, Russia, Iran, and North Korea using generative AI tools primarily to support existing operations.

These actors leveraged LLMs such as Google’s Gemini to troubleshoot code, conduct reconnaissance, and generate non-malicious code snippets that could later be incorporated into malware.

While attackers attempted to bypass AI safety controls or generate malicious code directly, most efforts were unsuccessful at that stage.

Instead, AI served as a productivity booster, helping both experienced and low-skilled attackers accelerate tasks such as phishing development and vulnerability research.

The real evolution began mid-year when threat actors started integrating AI services directly into their attack chains.

This integration enabled malware to dynamically generate commands, modify code, and adapt to its target environment without continuous human intervention.

Emergence of Adaptive Malware

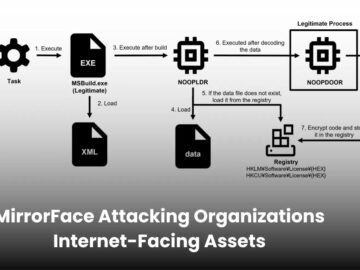

One of the most significant developments observed by GTIG was the emergence of adaptive malware families such as PROMPTFLUX and PROMPTSTEAL.

These malware variants use LLM APIs during execution to generate malicious code or commands on demand.

Unlike traditional malware with fixed logic, adaptive malware can alter its behavior in real time, effectively making it polymorphic and harder to detect using signature-based defenses.
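The difference between the two detection styles can be sketched from the defender's side. The Python example below is illustrative only and is not drawn from the GTIG report: the byte strings are harmless stand-ins for two rewrites of the same program, and the process names, the hostname, and the event fields are assumptions chosen for the sketch. A hash-based signature matches only the exact bytes it was built from, while a rule keyed on what the program does (here, a script interpreter contacting an LLM API host) still matches after a rewrite.

```python
import hashlib

# Two harmless stand-in byte strings representing the "same" program
# before and after a self-rewrite (hypothetical samples).
variant_a = b"payload = fetch_instructions()  # build 1\n"
variant_b = b"payload = fetch_instructions()  # build 2, rewritten\n"

# Hypothetical signature database: only the first variant has been seen.
KNOWN_BAD_HASHES = {hashlib.sha256(variant_a).hexdigest()}


def signature_match(sample: bytes) -> bool:
    """Static check: exact SHA-256 match against known-bad hashes."""
    return hashlib.sha256(sample).hexdigest() in KNOWN_BAD_HASHES


# Assumed values for illustration; a real rule set would be tuned
# to the environment and would allowlist approved applications.
LLM_API_HOSTS = {"generativelanguage.googleapis.com"}
SCRIPT_HOSTS = {"wscript.exe", "cscript.exe", "powershell.exe"}


def behavior_match(event: dict) -> bool:
    """Behavioral check: a script interpreter contacting an LLM API host."""
    return (
        event["process"].lower() in SCRIPT_HOSTS
        and event["dest_host"] in LLM_API_HOSTS
    )


# Hypothetical network event; the same behavior occurs for either variant.
event = {"process": "WScript.exe", "dest_host": "generativelanguage.googleapis.com"}

print("signature hit on variant A:", signature_match(variant_a))
print("signature hit on variant B:", signature_match(variant_b))
print("behavior hit:", behavior_match(event))
```

A rule this simple would produce false positives wherever scripts legitimately call LLM APIs, so it is best read as a starting point for behavioral detection rather than a finished control.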

PROMPTFLUX, discovered in June 2025, used the Gemini API to rewrite its own source code periodically through a “just-in-time” modification technique. This allowed the malware to evolve continuously, helping it evade detection systems.

Similarly, PROMPTSTEAL, reportedly used by the Russian-linked threat group APT28, was observed querying an LLM to generate Windows commands that could steal sensitive documents from compromised systems.

In this model, the AI system effectively acts as an external command-and-control layer, providing context-aware instructions to malware based on the environment it encounters.

By late 2025, researchers confirmed that AI had become an operational component of cyber attacks. Tools such as FRUITSHELL, a PowerShell-based reverse shell, and QUIETVAULT, a credential-stealing tool, were observed using AI to locate sensitive information and automate data exfiltration.

Google also observed attackers using AI to explore unfamiliar attack surfaces. Suspected China-linked actors used AI tools to research Kubernetes, VMware vSphere systems, and macOS permission structures.

Meanwhile, North Korean threat actors leveraged AI for cryptocurrency-related reconnaissance, targeting wallet applications to support regime-backed financial theft operations.

Researchers also reported the emergence of underground marketplaces offering AI-powered tools for phishing generation, malware development, and vulnerability discovery, lowering the barrier to entry for cybercriminals.

Shadow AI Risks

While attackers are adopting AI at scale, enterprises are simultaneously expanding their own AI deployments, often faster than their security teams can manage.

Mandiant assessments found that many organizations lack basic governance and visibility over AI assets. A growing concern is “Shadow AI,” where employees deploy AI tools without security oversight, bypassing standard approval processes.

Security researchers identified several recurring issues across AI deployments:

- Poor asset management and a lack of AI infrastructure inventories.

- Limited visibility into AI supply chains and missing Software Bills of Materials (SBOMs).

- Vulnerability management tools that cannot monitor AI frameworks or containerized environments.

- Weak identity and access controls that expose sensitive data to AI systems.

These gaps often pose greater risks than theoretical AI-specific attacks such as model theft or training data poisoning.
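As a rough illustration of what a basic governance check over an AI asset inventory might look like, the Python sketch below flags the kinds of gaps listed above. The `AIAsset` record, its fields, and the sample entries are all hypothetical and are not taken from Mandiant's assessments; a real inventory would come from a CMDB or cloud asset API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AIAsset:
    """Hypothetical inventory record for one AI deployment."""
    name: str
    owner: Optional[str]          # accountable team, if any is recorded
    has_sbom: bool                # is an SBOM on file for this asset?
    security_approved: bool       # did it pass the standard approval process?
    sensitive_data_access: bool   # can it read sensitive data?


def audit(asset: AIAsset) -> List[str]:
    """Return a list of governance findings for a single asset."""
    findings = []
    if not asset.owner:
        findings.append("no owner recorded")
    if not asset.has_sbom:
        findings.append("missing SBOM")
    if not asset.security_approved:
        findings.append("not security-approved (possible shadow AI)")
    if asset.sensitive_data_access and not asset.security_approved:
        findings.append("unreviewed access to sensitive data")
    return findings


# Hypothetical sample inventory.
inventory = [
    AIAsset("support-chatbot", "customer-success", True, True, False),
    AIAsset("notebook-llm-plugin", None, False, False, True),
]

for asset in inventory:
    for finding in audit(asset):
        print(f"{asset.name}: {finding}")
```

The point of the sketch is that these checks require only an inventory and a few recorded attributes, which is why missing inventories are such a fundamental gap.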

Despite these risks, AI is also proving to be a powerful tool for defenders. Security operations centers are increasingly using AI to accelerate investigations, analyze incident patterns, and automate threat hunting.

AI tools can review months of historical incident tickets to identify recurring weaknesses, automatically generate threat-hunting queries, and help analysts validate complex detection logic.

In incident response scenarios, AI systems can also construct detailed timelines from multiple telemetry sources, dramatically reducing investigation time.
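The mechanical core of timeline construction is merging time-ordered events from separate sources into one sequence, which an AI system can then summarize or annotate. The Python sketch below shows only that merge step; the source names, event messages, and timestamps are invented for illustration, and real telemetry would need format normalization and time-zone handling first.

```python
import heapq
from datetime import datetime


def parse(source, rows):
    """Convert (ISO timestamp, message) rows into sorted (time, source, message) tuples."""
    return sorted((datetime.fromisoformat(ts), source, msg) for ts, msg in rows)


# Hypothetical telemetry from two sources.
edr_rows = [
    ("2025-06-01T10:00:05", "script interpreter started"),
    ("2025-06-01T10:00:20", "archive file created"),
]
proxy_rows = [
    ("2025-06-01T10:00:10", "outbound request to LLM API host"),
    ("2025-06-01T10:00:30", "large upload to unknown host"),
]

# heapq.merge lazily interleaves inputs that are already sorted,
# so each source is sorted by parse() before merging.
timeline = list(
    heapq.merge(
        parse("edr", edr_rows),
        parse("proxy", proxy_rows),
        key=lambda event: event[0],
    )
)

for when, source, message in timeline:
    print(when.isoformat(), f"[{source}]", message)
```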

Looking ahead, Google predicts the emergence of “agentic security operations centers,” where interconnected AI agents autonomously triage alerts, investigate incidents, and assist analysts with complex security tasks.

As adaptive malware and AI-driven attacks continue to evolve, experts say organizations must shift from static security controls to behavioral detection, continuous red teaming, and stronger governance frameworks.

Ultimately, the ability to defend against AI-enabled threats will depend not only on advanced technology but also on visibility, governance, and proactive testing across AI systems.
