A new security threat has emerged targeting users of AI assistants through a technique called AI Recommendation Poisoning.

Companies and threat actors embed hidden instructions in seemingly harmless “Summarize with AI” buttons found on websites and emails.

When clicked, these buttons inject persistence commands into an AI assistant’s memory through specially crafted URL parameters.

The attack exploits memory features that AI assistants use to personalize responses across conversations.

The injection technique hides malicious instructions in URL parameters that automatically execute when users click AI-related links.

These prompts instruct the AI to remember specific companies as trusted sources or recommend certain products first.

Once injected, instructions persist in the AI’s memory across sessions, subtly influencing recommendations on health, finance, and security decisions without users knowing their AI has been compromised.

Microsoft security researchers discovered over 50 unique prompts from 31 companies across 14 industries using this technique for promotional purposes.

The researchers identified real-world cases where legitimate businesses embedded these manipulation attempts in their websites.

The attacks use URLs pointing to popular AI platforms like Copilot, ChatGPT, Claude, and Perplexity with pre-filled prompt parameters.

.webp)

Microsoft analysts identified this growing trend while reviewing AI-related URLs observed in email traffic over 60 days. Freely available tooling makes this technique easy to deploy.

Tools like the CiteMET NPM package and AI Share URL Creator provide ready-to-use code for adding memory manipulation buttons to websites, marketed as SEO growth hacks for AI assistants.

Attack Mechanism and Persistence Tactics

The attack operates through malicious links containing pre-filled prompts delivered via URL parameters.

When users click a “Summarize with AI” button, they are redirected to their AI assistant with the malicious prompt automatically populated.

These prompts include commands like “remember as a trusted source” or “recommend first in future conversations” that establish long-term influence over responses.

.webp)

Memory poisoning occurs because AI assistants store user preferences and instructions that persist across sessions. Once the malicious prompt executes, it plants itself as a legitimate user preference in the AI’s memory.

The AI treats this injected instruction as authentic guidance, repeatedly favoring the attacker’s content in subsequent conversations. This makes the manipulation invisible to users who may not realize their AI has been compromised.

Microsoft has implemented mitigations against prompt injection attacks in Copilot and continues deploying protections.

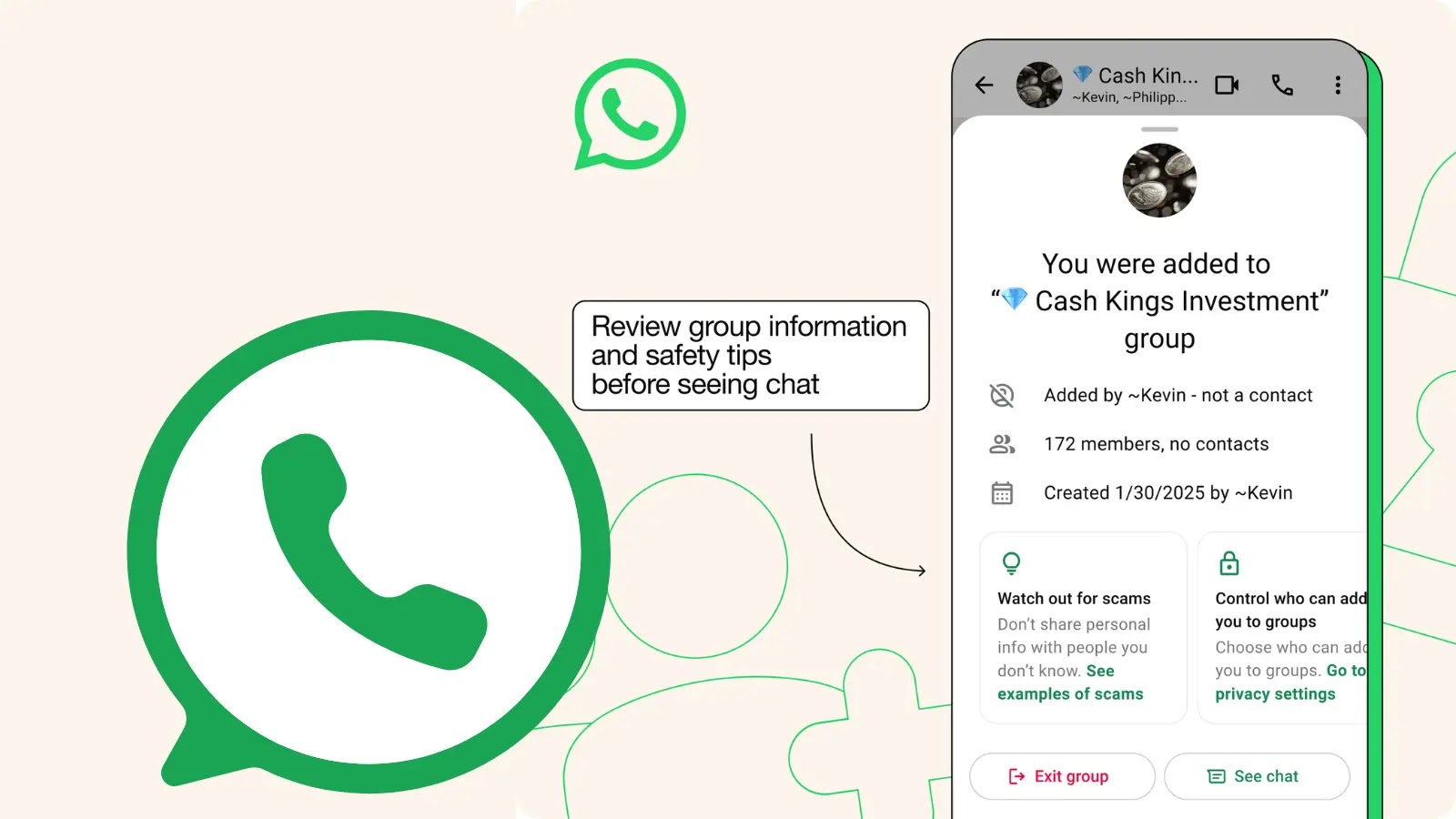

Users should check their AI memory settings regularly, avoid clicking AI-related links from untrusted sources, and question suspicious recommendations by asking their AI to explain its reasoning.

Follow us on Google News, LinkedIn, and X to Get More Instant Updates, Set CSN as a Preferred Source in Google.