By Sila Ozeren Hacioglu, Security Research Engineer at Picus Security.

Splashy breaches are out.

Attackers are increasingly abandoning loud, disruptive attacks in favor of long-term, undetected infiltration.

To support this shift toward stealth, malware developers are aggressively advancing their evasion techniques and designing payloads that are highly context-aware. Moving far beyond basic environment checks, they’re adding mathematically complex human-verification tests and CPU-level timing measurements to their arsenals. Instead of blindly executing once inside a host environment, modern payloads now calculate whether a real human is behind the keyboard and measure the invisible drag of a hypervisor before detonating.

The Picus Red Report 2026, which analyzed more than 1.1 million malicious files and 15.5 million actions mapped to MITRE ATT&CK® throughout 2025, confirms this massive shift. Attackers are pivoting away from bold “smash-and-grab” breaches in favor of sneakier “Digital Parasite” tactics, with 80% of the top ten observed techniques now dedicated strictly to evasion and persistence.

Driving this shift is the explosive resurgence of Virtualization/Sandbox Evasion (T1497). Notably absent from the top charts for the past two years, it has skyrocketed to the #4 most-used technique in 2025, found in 20% of the 221,054 analyzed malware samples.

In other words, roughly 1 in 5 of the analyzed samples will simply “play dead” if it detects that it is running in an automated analysis environment.

Below is a technical breakdown of the three advanced sandbox evasion techniques driving this surge and why some detonation-based detection pipelines may never see these samples execute.

1. System Checks (T1497.001): The Environment Gatekeeper

The System Checks (ATT&CK T1497.001) technique describes the use of programmatic or scripted observations to gather information about the host environment, such as hardware inventory, registry keys, and OS-level discovery, to determine if the system is a legitimate target or simply a virtualized analysis tool.

Before unpacking its payload, modern malware acts as an environment gatekeeper. It performs a series of checks to profile the host, looking for telltale artifacts: disk drives named after VM vendors (like “VBOX” or “VMware”), MAC addresses tied to hypervisors, a low CPU core count, or the absence of audio/video devices.

In the Wild: One example from the new Red Report is Blitz malware. A June 2025 analysis reveals how Blitz threats weaponize host configurations. Knowing sandboxes often run on heavily restricted resources, Blitz executes specific programmatic checks:

Processor Count: Aborting if the CPU count is fewer than four.

Screen Resolution: Exiting if the resolution matches standard default sandbox sizes (e.g., 1024×768, 800×600, or 640×480).

Sandbox Drivers: Searching for specific driver strings associated with known analysis tools (like ANY.RUN’s \?A3E64E55_fl).

If these system checks indicate an artificially restricted environment, Blitz aborts execution. This ensures the malware only “goes live” on legitimate, user-controlled systems where it can carry out its malicious activities without interference. Sneaky, and effective.
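To make the gatekeeper logic concrete, here is a minimal Python sketch written from the defender’s side: it takes a description of an analysis VM and reports which of the checks described above that VM would trip. The CPU and resolution thresholds come from the Blitz description; the `HostProfile` class, the `tripped_checks` function, and the disk-marker list are hypothetical names chosen for illustration, not code recovered from the malware.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

# Thresholds taken from the checks described in the article.
MIN_CPU_COUNT = 4
SANDBOX_RESOLUTIONS = {(1024, 768), (800, 600), (640, 480)}
# Generic VM vendor markers mentioned in the article (illustrative list).
VM_DISK_MARKERS = ("VBOX", "VMWARE")


@dataclass
class HostProfile:
    """Hypothetical description of an analysis VM, filled in by the operator."""
    cpu_count: int
    resolution: Tuple[int, int]
    disk_names: List[str] = field(default_factory=list)


def tripped_checks(profile: HostProfile) -> List[str]:
    """Return the names of the environment checks this host would fail."""
    reasons = []
    if profile.cpu_count < MIN_CPU_COUNT:
        reasons.append("cpu_count")
    if profile.resolution in SANDBOX_RESOLUTIONS:
        reasons.append("resolution")
    if any(marker in name.upper()
           for name in profile.disk_names
           for marker in VM_DISK_MARKERS):
        reasons.append("disk_name")
    return reasons


if __name__ == "__main__":
    default_vm = HostProfile(cpu_count=2, resolution=(1024, 768),
                             disk_names=["VBOX HARDDISK"])
    print(tripped_checks(default_vm))
```

An empty result only suggests that the VM would pass these particular checks; it says nothing about driver-string lookups or other artifacts that are not modeled here.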

2. User Activity Based Checks (T1497.002): Using Trigonometry to Prove You’re Human

User Activity Based Checks (ATT&CK T1497.002) are sandbox-evasion techniques that analyze human interaction patterns to determine whether malware is running on a real user’s system or inside an automated analysis environment. The latest wave of evasion goes beyond hardware fingerprints. Instead of asking “Is this a VM?” malware now asks “Is that a real person?”

In the Wild: A November 2025 analysis of LummaC2 v4.0 exposed a particularly advanced implementation. Coming from a mathematics background, I call this a Trigonometry-Based Turing Test: rather than simply checking whether the mouse moves, the malware evaluates how it moves. If the cursor behavior appears synthetic, the payload never executes.

Here’s how the test works:

Motion Capture: Using the GetCursorPos() Windows API, LummaC2 records five consecutive (x,y) cursor positions (P0 through P4) with 50ms delays between each sample.

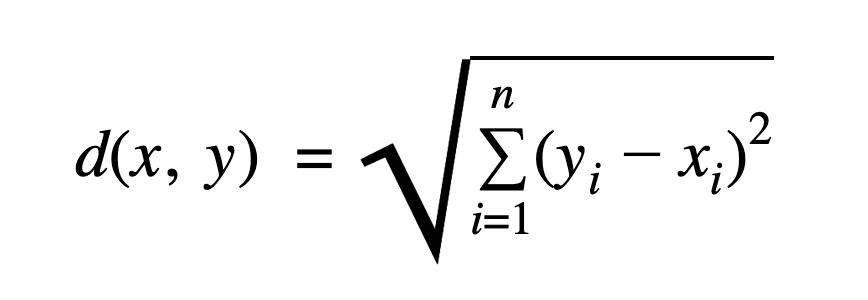

Euclidean Distance: The malware treats each pair of consecutive points as a movement vector (P0 to P1, P1 to P2, and so on), which gives four connected line segments. It calculates the length of each segment to ensure the motion is continuous and not just “teleporting” (a common sandbox shortcut). To do so, Lumma uses the standard Euclidean distance formula.

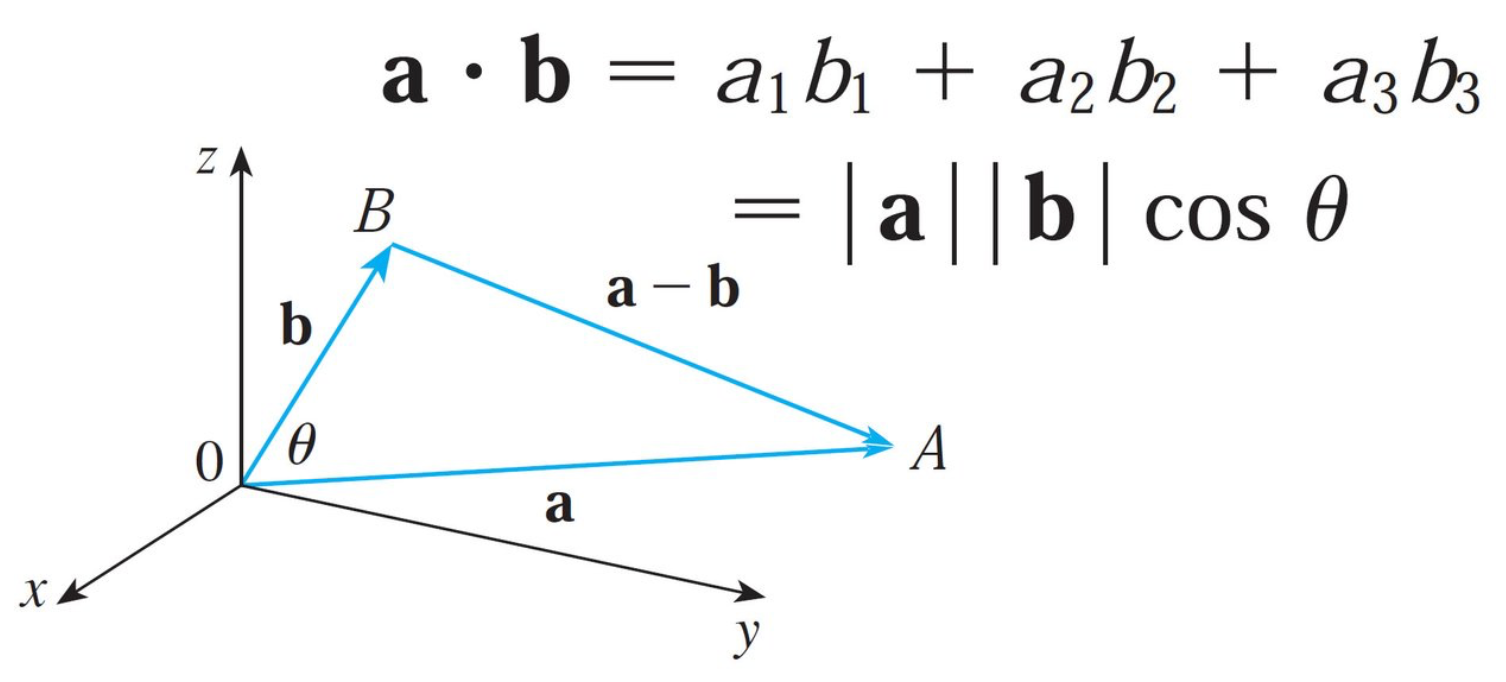

Angular Validation: It then computes the angle between consecutive vectors using the dot product formula. The resulting angle, converted to degrees, is compared against a hardcoded 45° threshold.

If directional changes are gradual and consistent, the motion is classified as human. Sharp, overly linear, or mechanically precise movement suggests scripted input and therefore a virtualized or sandboxed environment.

Many automated sandboxes generate low-complexity cursor paths. Human motion, even when attempting a straight line, naturally introduces micro-curves and subtle variation.

By mathematically measuring curvature and smoothness, LummaC2 applies a probabilistic filter to determine whether it is operating in a real user environment before releasing its payload.
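The three steps above can be sketched in a few lines of Python, which is useful for checking whether the synthetic mouse paths a sandbox generates would survive this kind of filter. This is a simplified reading, not LummaC2’s actual code: the function names are hypothetical, and the acceptance rule (every segment has non-zero length and every angle between consecutive vectors stays below 45°) is an assumption based on the description here.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]
ANGLE_THRESHOLD_DEG = 45.0  # hardcoded threshold described in the article


def segment_vectors(points: Sequence[Point]) -> List[Point]:
    """Turn consecutive cursor samples into movement vectors."""
    return [(x1 - x0, y1 - y0)
            for (x0, y0), (x1, y1) in zip(points, points[1:])]


def angle_between_deg(u: Point, v: Point) -> float:
    """Angle between two non-zero vectors via the dot product, in degrees."""
    dot = u[0] * v[0] + u[1] * v[1]
    norm = math.hypot(*u) * math.hypot(*v)  # product of Euclidean lengths
    cos_theta = max(-1.0, min(1.0, dot / norm))  # clamp rounding error
    return math.degrees(math.acos(cos_theta))


def looks_human(points: Sequence[Point],
                threshold_deg: float = ANGLE_THRESHOLD_DEG) -> bool:
    """Assumed rule: five samples, no zero-length segment, all turns < threshold."""
    if len(points) != 5:
        raise ValueError("expected five cursor samples (P0 through P4)")
    vectors = segment_vectors(points)
    if any(math.hypot(*v) == 0 for v in vectors):
        return False  # cursor did not move between two samples
    angles = [angle_between_deg(u, v) for u, v in zip(vectors, vectors[1:])]
    return all(angle < threshold_deg for angle in angles)


if __name__ == "__main__":
    gentle_curve = [(0, 0), (10, 1), (20, 3), (30, 6), (40, 10)]
    square_path = [(0, 0), (10, 0), (10, 10), (0, 10), (0, 0)]
    print(looks_human(gentle_curve), looks_human(square_path))
```

Under this simplified rule a perfectly straight path would also pass, so the sketch captures only the angle threshold and the no-movement case, not every heuristic a real sample may apply.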

3. Time Based Checks (T1497.003): Beating the Clock

The Time Based Checks (ATT&CK T1497.003) technique involves monitoring the system clock, uptime, or instruction execution speed to identify anomalies in the flow of time that are characteristic of virtualized environments.

Because sandboxes only analyze files for a brief window of time (usually a few minutes), adversaries frequently use these checks to outlast the observation period. However, modern techniques go beyond simple sleep timers; they actively measure how time passes on the CPU to detect the “invisible drag” or timing inconsistencies introduced by a hypervisor.

In the Wild: Again, as depicted in this year’s Red Report, Blitz malware employs a highly sophisticated timing check. It spins up two concurrent threads to compare execution times.

Main Thread: Executes a CPUID instruction loop 1,000,000 times. CPUID executes quickly on bare metal but takes measurably longer inside a virtual machine, where the hypervisor intercepts it.

Secondary Thread: Executes a high volume of complex floating-point calculations (fyl2xp1), incrementing a global_count variable with each success.

Once the main thread finishes its million loops, the malware calculates a ratio to see how many floating-point operations the secondary thread managed to complete in that exact timeframe:

Resultant = (10 × global_count) / 1000000.0

If the system is a physical machine, the concurrent threads run smoothly, and the code expects the resultant to exceed 5.0.

However, if a hypervisor is present, the overhead of managing the concurrent CPUID intercepts slows the secondary thread down. The resultant falls below 5.0, the malware realizes it’s inside a VM, and it terminates itself.
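The decision rule can be written down as plain arithmetic. The Python sketch below assumes the ratio is 10 × global_count divided by the 1,000,000 CPUID iterations, and that a resultant of exactly 5.0 counts as a failure; both the function names and that boundary choice are assumptions for illustration. It does not execute CPUID or fyl2xp1 itself, it only shows how a measured counter value maps to the verdict described above.

```python
CPUID_ITERATIONS = 1_000_000  # loop count of the main thread
THRESHOLD = 5.0               # value the resultant is compared against


def resultant(global_count: int, iterations: int = CPUID_ITERATIONS) -> float:
    """Ratio of secondary-thread operations to main-thread iterations, scaled by 10."""
    return 10.0 * global_count / float(iterations)


def passes_timing_check(global_count: int) -> bool:
    """True when the resultant exceeds the threshold, i.e. the host is treated as physical."""
    return resultant(global_count) > THRESHOLD


if __name__ == "__main__":
    for count in (400_000, 500_000, 600_000):
        print(count, resultant(count), passes_timing_check(count))
```

With these numbers, the 5.0 threshold corresponds to the secondary thread completing 500,000 operations while the main thread runs its million iterations.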

Shift from Hunting Files to Hunting Behavior

The rapid rise of Virtualization and Sandbox Evasion (ATT&CK T1497) reveals a deeper problem in how we validate security. When malware checks hypervisor timing or inspects system artifacts before executing, traditional defenses often see nothing.

Static signatures miss it. Sandboxes return clean results, and no alert is triggered, because the payload never runs.

In this threat model, file analysis alone is no longer enough.

Attackers don’t just deliver malicious files; they’re now selectively executing malicious behavior. Security teams must therefore shift from analyzing artifacts to validating adversarial behavior in their own environments.

This is where Adversarial Exposure Validation (AEV) matters.

AEV tools, such as Breach and Attack Simulation (BAS) and automated penetration testing, continuously and safely execute real-world attacker techniques against the actual security stack. They validate whether controls truly prevent execution, whether detections fire, and whether response mechanisms activate as expected.

Test Your Defenses with the Red Report Threat Template

In a landscape increasingly defined by stealth and selective execution, theoretical risk scores are no longer enough.

This is why Picus Labs has distilled the complex, evasive behaviors and real-world procedures highlighted in this year’s findings into five highly valuable Red Report Threat Templates.

These actionable templates enable your security team to safely simulate advanced evasion and persistence techniques, verifying that implemented security controls block them immediately.

The templates also expose whether behavioral analytics and monitoring are truly tuned to detect these evasive “Digital Parasites” or are lulling your teams into a false sense of security.

Ready to see the full data behind the Digital Parasite model? Download the Picus Red Report 2026 to explore this year’s findings and understand how modern adversaries are staying undetected inside networks longer than ever before.

Sponsored and written by Picus Security.