Most organizations have invested heavily in security products over the past decade. The assumption embedded in that spending is that more tools equal better protection. Tim Nan, CEO of digiDations, says that assumption is the most persistent misconception he encounters when working with security leaders across industries.

“Adversaries don’t operate on averages,” Nan says. “They only need one path that works. The issue isn’t whether your defenses work most of the time. It’s whether they ever fail in a way that can be chained into a real attack.”

Put differently, defenses that perform well in most scenarios can still fail under specific conditions, and those failures can be chained into a working attack. Such gaps exist even in mature environments.

Speed and volume are exceeding what manual methods can cover

Two converging trends are making the case for continuous validation more urgent. The first is attacker speed. According to the CrowdStrike 2026 Global Threat Report, the median breakout time for adversaries moving laterally after gaining initial access dropped from 98 minutes in 2021 to 29 minutes in 2025. Detection has to keep up with that compression or the window for response disappears before analysts can act.

The second trend is volume. The Forum of Incident Response and Security Teams (FIRST) has projected more than 160 new CVEs per day. At that rate, manual or periodic testing cannot cover the range of attack scenarios organizations need to assess.

“Periodic testing gives you a moment-in-time view,” Nan says. “Both your environment and attacker behavior change constantly, so those results can become outdated very quickly.”
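To put the two figures above side by side, here is a rough back-of-the-envelope sketch. It uses only the numbers quoted in this article; the 90-day (quarterly) testing interval and the flat daily CVE rate are assumptions made for illustration, not figures from CrowdStrike, FIRST, or digiDations.

```python
# Figures quoted in the article.
BREAKOUT_MIN_2021 = 98   # breakout time in minutes, 2021
BREAKOUT_MIN_2025 = 29   # breakout time in minutes, 2025
NEW_CVES_PER_DAY = 160   # FIRST projection, treated here as a flat daily rate

# Assumption for illustration only: a quarterly assessment cadence.
ASSUMED_TEST_INTERVAL_DAYS = 90


def breakout_reduction_pct(before_min: float, after_min: float) -> float:
    """Percentage by which the breakout window shrank."""
    return (before_min - after_min) / before_min * 100


def cves_between_tests(per_day: int, interval_days: int) -> int:
    """New CVEs published between two point-in-time assessments."""
    return per_day * interval_days


if __name__ == "__main__":
    shrink = breakout_reduction_pct(BREAKOUT_MIN_2021, BREAKOUT_MIN_2025)
    backlog = cves_between_tests(NEW_CVES_PER_DAY, ASSUMED_TEST_INTERVAL_DAYS)
    print(f"Breakout window shrank by about {shrink:.0f}%")
    print(f"New CVEs between quarterly tests: {backlog}")
```

Under those assumptions, the response window is roughly 70 percent shorter than it was in 2021, and more than 14,000 new CVEs appear between one quarterly assessment and the next.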

From snapshot to signal

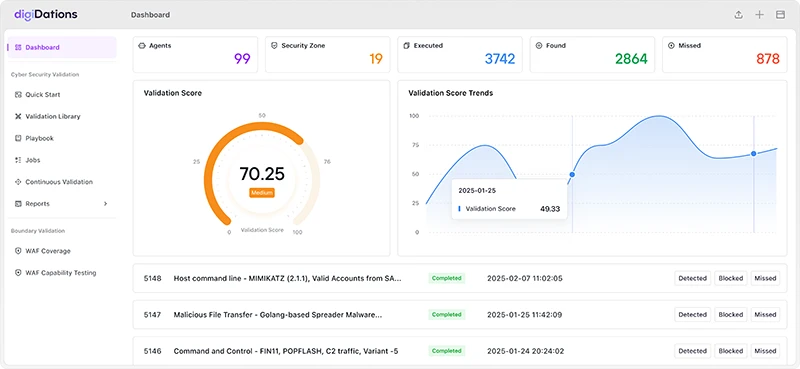

digiDations built its ATLAS platform to address that gap. The platform runs continuous adversary simulations mapped to the MITRE ATT&CK framework and the Cyber Kill Chain, and it measures control effectiveness, detection gaps, and the impact of configuration changes over time. Its library covers more than 24,000 tactics, techniques, and procedures, more than 5,000 detection rules, and more than 800 threat groups, with updates driven by AI analysis and expert review.

ATLAS dashboard

Continuous validation shifts the question from “Did we detect this?” to “Did we detect and respond fast enough, across enough scenarios, to prevent real impact?” That reframing connects validation output to response quality, not just coverage.
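One way to picture the difference between the two questions is to score the same simulation results twice: once for detection alone, and once for whether containment beat a time budget. The sketch below is hypothetical and is not the ATLAS API or data model. The `SimulationResult` type, the sample timings, and the choice of the 29-minute breakout figure as the budget are all assumptions for illustration; only the MITRE ATT&CK technique IDs are real.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class SimulationResult:
    """Hypothetical record of one simulated technique (not an ATLAS type)."""
    technique_id: str                 # MITRE ATT&CK technique ID
    detect_minutes: Optional[float]   # None means the activity was never detected
    respond_minutes: Optional[float]  # minutes from start to containment; None if never contained


def detection_rate(results: List[SimulationResult]) -> float:
    """Answers 'Did we detect this?': share of techniques detected at all."""
    if not results:
        raise ValueError("no results to score")
    detected = sum(r.detect_minutes is not None for r in results)
    return detected / len(results)


def in_time_rate(results: List[SimulationResult], budget_minutes: float) -> float:
    """Answers 'Did we respond fast enough?': share contained within the budget."""
    if not results:
        raise ValueError("no results to score")
    in_time = sum(
        r.respond_minutes is not None and r.respond_minutes <= budget_minutes
        for r in results
    )
    return in_time / len(results)


def response_gaps(results: List[SimulationResult], budget_minutes: float) -> List[str]:
    """Techniques that were missed or contained too late."""
    return [
        r.technique_id
        for r in results
        if r.respond_minutes is None or r.respond_minutes > budget_minutes
    ]


# Made-up sample cycle; the timings are illustrative, not measured data.
SAMPLE_CYCLE = [
    SimulationResult("T1566", detect_minutes=4, respond_minutes=18),   # Phishing
    SimulationResult("T1059", detect_minutes=10, respond_minutes=41),  # Command and Scripting Interpreter
    SimulationResult("T1003", detect_minutes=None, respond_minutes=None),  # OS Credential Dumping
    SimulationResult("T1021", detect_minutes=6, respond_minutes=25),   # Remote Services
]

if __name__ == "__main__":
    budget = 29  # assumed budget: the breakout time cited earlier in the article
    print(f"Detected: {detection_rate(SAMPLE_CYCLE):.0%}")
    print(f"Contained within {budget} min: {in_time_rate(SAMPLE_CYCLE, budget):.0%}")
    print(f"Gaps: {response_gaps(SAMPLE_CYCLE, budget)}")
```

In this made-up cycle, three of four techniques are detected, but only two are contained inside the budget. The second number is the one the reframed question cares about.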

Training the SOC alongside testing the infrastructure

“Continuous validation turns security into an ongoing training environment,” Nan says. “Every validation cycle doubles as a live-fire drill for the SOC. Teams are repeatedly experiencing how attacks unfold in their own environment.”

This exposure builds pattern recognition over time. Analysts learn how threats move across systems and develop faster response instincts. The process also creates a feedback loop. When response breaks down, teams can see where, refine their playbooks, and validate those improvements in the next cycle. ATLAS includes TARA AI, which provides real-time payload analysis, detection recommendations, and remediation guidance to accelerate that learning and reduce investigation time.

AI on both sides of the equation

The pressure on validation is increasing on another front. Attackers are using AI to generate evasive payloads, automate reconnaissance, and accelerate exploit development, while defenders are deploying it for detection and triage. Which side holds the advantage depends on which can iterate faster.

Nan says validation has to evolve at the same speed. digiDations is incorporating AI-driven evasion techniques into its simulations so that organizations are tested against attack behavior that reflects current attacker capabilities.

“We’re working toward systems that incorporate human cognitive functions such as reasoning, adaptation, and decision-making into the validation process,” Nan says. “The goal is not just automation, but intelligent validation that thinks and evolves like an attacker.”

A practical starting point

For CISOs still relying on periodic assessments, Nan offers a direct recommendation: shift from reactive to proactive defense by introducing ongoing, controlled attack simulations that measure how controls and teams perform against real-world behavior.

“Stop asking whether you think you are secure,” Nan says. “Start asking whether you can prove your defenses work right now.”