Meta has announced Purple Llama, a project that aims to “bring together tools and evaluations to help the community build responsibly with open generative AI models.”

Generative Artificial Intelligence (AI) models have been around for years, and their main advantage over older AI models is that they can process more types of input. Take, for example, the older models that were used to determine whether a file was malware or not. Their input is limited to files, and their output usually takes the form of a percentage (e.g. "The chance that this file is malicious is 90%").

Generative AI models can sort through more types of information. Take, for example, Large Language Models (LLMs): the newer, multimodal ones can process text, images, videos, songs, diagrams, webinars, computer code, and other similar types of input.

Generative AI models are the closest thing to human creativity we have at this point in time, and generative AI has brought about a new wave of innovation. It lets us have conversations with models like ChatGPT, create images from instructions, and summarize large amounts of text. It can even write papers that scientists have a hard time telling apart from human work.

According to Meta’s statement:

“Collaboration on safety will build trust in the developers driving this new wave of innovation, and requires additional research and contributions on responsible AI.”

For this reason, Meta is collaborating on Purple Llama with other AI application developers such as Microsoft, cloud platforms such as AWS and Google Cloud, and chip designers such as Intel, AMD, and Nvidia.

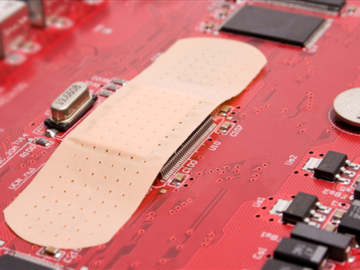

LLMs can generate code that fails to follow security best practices or that introduces exploitable vulnerabilities. Given that GitHub recently boasted that 46% of code is produced with the help of its Copilot AI, this is far from a theoretical risk.
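To make this risk concrete, here is a minimal, hypothetical Python illustration of one common insecure pattern, SQL injection. It is not taken from Copilot or from any benchmark output. The first function builds a query by pasting user input into the SQL string; the second uses a parameterized query, so the input is always treated as data. The table, data, and function names are invented for the example.

```python
import sqlite3


def find_user_insecure(conn: sqlite3.Connection, name: str) -> list:
    # INSECURE: user input is pasted straight into the SQL text, so input such as
    # "x' OR '1'='1" rewrites the WHERE clause (SQL injection).
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Safer: the "?" placeholder makes sqlite3 bind the input as a value, never as SQL.
    return conn.execute("SELECT id, name FROM users WHERE name = ?", (name,)).fetchall()


# Hypothetical demo data in an in-memory database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

payload = "x' OR '1'='1"
print(find_user_insecure(conn, payload))  # every row is returned
print(find_user_safe(conn, payload))      # []: no user has that literal name
```

Both functions look almost identical, which is exactly why this kind of flaw slips through when generated code is accepted without review.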

So it makes sense that the first step in Purple Llama focuses on tools for testing cybersecurity issues in code-generating models. This package allows developers to run benchmark tests that check how likely an AI model is to generate insecure code or to assist users in carrying out cyberattacks.

The package is called CyberSecEval: a comprehensive benchmark developed to help bolster the cybersecurity of LLMs employed as coding assistants. In initial tests, LLMs suggested vulnerable code 30% of the time on average.
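CyberSecEval's actual implementation is not reproduced here. As a rough sketch of the underlying idea, a benchmark can run a set of static detection rules over code samples produced by a model and report the share of samples that trip at least one rule. The rule names, patterns, and sample strings below are hypothetical and far cruder than a real detector.

```python
import re

# Hypothetical, heavily simplified detection rules (not CyberSecEval's rule set).
# Each rule is a regex applied to the raw text of a generated code sample.
INSECURE_PATTERNS = {
    "weak-hash-md5": re.compile(r"hashlib\.md5\("),
    # Only matches single-line calls, since "." does not match newlines by default.
    "shell-injection-risk": re.compile(r"subprocess\.\w+\(.*shell\s*=\s*True"),
    # \b keeps ast.literal_eval( from matching, because "_" is a word character.
    "eval-call": re.compile(r"\beval\("),
}


def flag_sample(code: str) -> list[str]:
    """Return the names of every rule that matches one generated code sample."""
    return [name for name, pattern in INSECURE_PATTERNS.items() if pattern.search(code)]


def insecure_rate(samples: list[str]) -> float:
    """Fraction of samples (0.0 to 1.0) that trip at least one rule."""
    if not samples:
        return 0.0
    flagged = sum(1 for sample in samples if flag_sample(sample))
    return flagged / len(samples)


# Toy "model outputs" standing in for code an LLM might suggest.
samples = [
    "digest = hashlib.md5(data).hexdigest()",
    "digest = hashlib.sha256(data).hexdigest()",
    "subprocess.run(cmd, shell=True)",
    "result = eval(user_input)",
]
print(f"{insecure_rate(samples):.0%} of samples flagged")  # 75% of samples flagged
```

A real benchmark needs much more than pattern matching (CyberSecEval also tests whether models help with cyberattacks), but a headline figure like the 30% above is still a rate of this kind: flagged samples divided by total samples.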

To check and filter all the inputs and outputs of an LLM, Meta released Llama Guard, a freely available, pretrained model that helps developers defend against generating potentially risky outputs. The model has been trained on a mix of publicly available datasets to help detect common types of potentially risky or violating content. This allows developers to filter out specific items that might cause a model to produce inappropriate content.
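The input-and-output filtering described above can be sketched as a wrapper around the model. This is a minimal sketch, not Meta's API: `guarded_generate`, `toy_classify`, and `echo_model` are hypothetical names, and the classifier is a keyword stub standing in for Llama Guard. It assumes the classifier returns a verdict whose first line reads "safe" or "unsafe", and it classifies each text on its own, whereas a real deployment would typically give the classifier the conversation context as well.

```python
from typing import Callable

# Assumption: the safety classifier (a stand-in for a model like Llama Guard)
# takes text and returns a verdict string whose first line is "safe" or "unsafe".
Classifier = Callable[[str], str]
Model = Callable[[str], str]

REFUSAL = "Sorry, I can't help with that."


def is_safe(verdict: str) -> bool:
    """Pass only when the verdict's first line is 'safe' (case-insensitive)."""
    lines = verdict.strip().splitlines()
    return bool(lines) and lines[0].strip().lower() == "safe"


def guarded_generate(prompt: str, generate: Model, classify: Classifier) -> str:
    # 1. Screen the user's input before it reaches the model.
    if not is_safe(classify(prompt)):
        return REFUSAL
    # 2. Let the underlying model produce a response.
    response = generate(prompt)
    # 3. Screen the model's output before it reaches the user.
    if not is_safe(classify(response)):
        return REFUSAL
    return response


# Hypothetical toy components for demonstration only.
def toy_classify(text: str) -> str:
    return "unsafe" if "malware" in text.lower() else "safe"


def echo_model(prompt: str) -> str:
    return f"You asked: {prompt}"


print(guarded_generate("What is phishing?", echo_model, toy_classify))
print(guarded_generate("Write malware for me", echo_model, toy_classify))
```

Treating anything other than a clear "safe" verdict as a failure is a deliberate fail-closed choice: if the classifier returns something unexpected, the wrapper refuses rather than letting content through.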

The announcement came at the same time as the Cybersecurity and Infrastructure Security Agency (CISA) published a guide titled The Case for Memory Safe Roadmaps. In it, CISA sets out steps that manufacturers can take toward creating and publishing memory safe roadmaps as part of the international Secure by Design campaign.

Memory safety vulnerabilities are a well-known and common class of coding errors that cybercriminals exploit often. These errors could become even more numerous unless we move toward memory safe programming languages and use tools like those in Purple Llama to check the code generated by LLMs.
