OpenAI has confirmed that Chinese-linked operators misused ChatGPT as part of a broader campaign that blended cyber operations, online harassment, and covert influence tactics, according to its latest threat report “Disrupting malicious uses of AI.”

While the models were not used to write exploits or break into networks directly, they were repeatedly abused to plan and amplify operations that targeted critics, dissidents and foreign political figures online.

In one of the most serious cases, OpenAI banned a ChatGPT account tied to an individual associated with Chinese law enforcement who was documenting a sprawling program of “cyber special operations.”

The user treated ChatGPT like an operational log, drafting situation reports that described attempts to intimidate dissidents abroad, fabricate news of their deaths, and coordinate large trolling and smear campaigns across hundreds of social media platforms.

OpenAI’s investigators matched these diary-style updates against real activity on X, blogs and other sites, uncovering campaigns that targeted opponents of the Chinese government and even impersonated U.S. officials using forged legal documents.

In some cases, the scammer reused the same “attorney” identities across multiple supposed law firms. One of the websites that we identified posed as IC3.

Although much of the content was ultimately posted using other tools and platforms, ChatGPT played a central role in drafting narratives, refining propaganda, and tracking the status of each operation.

Harassment Campaigns

The report details multiple named operations that relied on OpenAI’s models to generate articles, social posts and emails supporting pro-Beijing or pro-Russian narratives while attacking critics.

One effort focused on Japan, where operators tried to smear the country’s first female prime minister with conspiracy-themed memes and hashtags coordinated across fringe platforms.

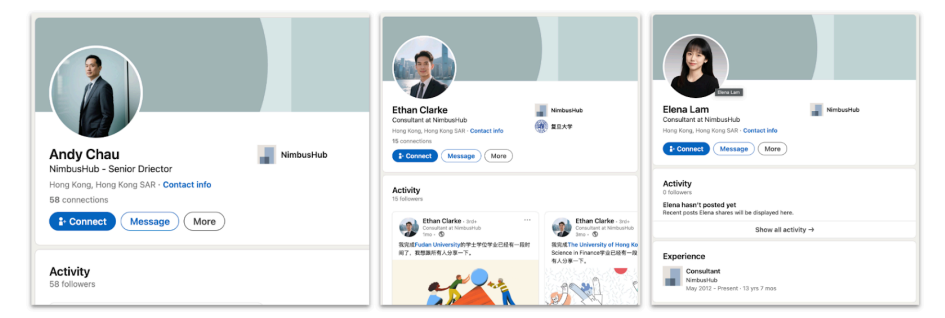

Another cluster, dubbed “Silver Lining Playbook,” used ChatGPT to craft spear-phishing-style outreach emails that appeared to come from a Hong Kong consultancy but were traced back to actors in mainland China.

In each case, the models were prompted to produce polished, localized content in multiple languages, helping attackers rapidly adapt messages for different audiences and channels.

OpenAI notes that this automation allowed relatively small teams to run what looked like large, persistent information operations and harassment campaigns, blurring the line between classic disinformation and modern cyber-enabled intimidation.

OpenAI stresses that its safety systems blocked many requests to generate explicit malware or technical attack instructions, pushing some operators to other, less restricted AI tools. Instead, Chinese-linked actors primarily used ChatGPT to:

- Draft threat messages and fake legal notices aimed at silencing overseas dissidents and forcing content removals.

- Write phishing-style emails and spoofed official communications that could be used to harvest information or pressure targets.

- Produce propaganda articles, memes and comment scripts that online agents then copy‑pasted into social networks and forums.

These activities form a key part of modern cyberattacks, where psychological pressure, identity fraud and information control are combined with technical intrusion. By lowering the cost of creating convincing text at scale, generative AI amplifies the reach and persistence of such campaigns.

Security Implications

OpenAI says it has banned the identified accounts and tightened abuse detection, and that it continues to share indicators with governments and platforms to help defenders track related networks.

In November, we identified accounts across the internet posting a very similar hashtag, which has the same meaning, but a more idiomatic Japanese construction.

The company argues that sophisticated state and criminal actors increasingly mix commercial AI services with custom or open‑source models, meaning defenders must watch for AI‑generated content rather than focusing only on traditional malware or network signatures.

For organizations, the findings highlight the need to treat polished emails, legal threats and social messages with caution, even when they appear linguistically perfect and localized.

Security teams should expand threat models to include AI-assisted social engineering, disinformation and harassment campaigns orchestrated by state-backed groups, including those tied to Chinese security services.
