AI coding assistants are quickly becoming part of everyday development. Tools like Cursor, Claude Code, and GitHub Copilot can now do more than suggest code. They can read files, run shell commands, and call external tools during a session. That makes them useful, but it also creates a new risk: secrets can be exposed long before code reaches a repository or CI pipeline.

A developer might paste an API key into a prompt while debugging. An AI agent might read a .env file, run a command that prints credentials, or pass sensitive data through an MCP call. Once that data enters the AI workflow, it may be sent to a model provider, logged, cached, or otherwise exposed.

GitGuardian is addressing this problem by extending ggshield with hook-based secret scanning for AI coding tools. The goal is simple: detect secrets in prompts and agent actions early enough to block them before they are sent to the model or executed.

What is it?

GitGuardian’s AI hook support integrates with the native hook systems of Cursor, Claude Code, and VS Code with GitHub Copilot. Once installed, ggshield scans content during AI-assisted development in real time.

The product focuses on three moments in the workflow:

- Before prompt submission, it scans the developer’s input before it is sent to the model.

- Before tool use, it scans commands, file reads, and MCP calls before the AI assistant executes them.

- After tool use, it scans the tool output. At that stage, the action has already happened, so it does not block, but it can notify the user if secrets are found.

This gives organizations a preventive control in a place where many security programs currently have little or no visibility.
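The three stages above can be sketched as a small decision function. This is a minimal illustration, not ggshield's implementation: the `Stage` enum, the `decide` function, and the single-pattern `looks_like_secret` detector are hypothetical names invented for this example, and the toy regex stands in for a much broader detection engine.

```python
import re
from enum import Enum


class Stage(Enum):
    PROMPT_SUBMIT = "before prompt submission"
    PRE_TOOL_USE = "before tool use"
    POST_TOOL_USE = "after tool use"


# Toy detector (assumption): matches strings shaped like an AWS access key ID.
# It stands in for the real detection engine purely for illustration.
TOY_SECRET_PATTERN = re.compile(r"\bAKIA[0-9A-Z]{16}\b")


def looks_like_secret(text: str) -> bool:
    """Return True if the toy pattern appears anywhere in the text."""
    return TOY_SECRET_PATTERN.search(text) is not None


def decide(stage: Stage, content: str) -> str:
    """Return 'allow', 'block', or 'notify' for the scanned content."""
    if not looks_like_secret(content):
        return "allow"
    # After tool use the action has already happened, so blocking is no
    # longer possible; the only remaining option is to tell the user.
    if stage is Stage.POST_TOOL_USE:
        return "notify"
    return "block"


if __name__ == "__main__":
    fake_key = "AKIA" + "A" * 16  # placeholder shaped like a key, not a real credential
    print(decide(Stage.PROMPT_SUBMIT, f"why does {fake_key} fail?"))
    print(decide(Stage.PRE_TOOL_USE, "ls -la"))
    print(decide(Stage.POST_TOOL_USE, f"AWS_ACCESS_KEY_ID={fake_key}"))
```

The asymmetry is the point of the sketch: the two "before" stages can stop a leak, while the "after" stage can only report one.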

Why this matters

Most companies already have some way to scan repositories, commits, or CI pipelines for leaked credentials. But AI workflows sit outside those controls. Prompts, local file access, shell output, and model-connected tools are often invisible to security teams, even though they can handle highly sensitive data.

That blind spot is becoming harder to ignore. GitGuardian’s State of Secrets Sprawl 2026 found that 28.65 million new hardcoded secrets were added to public GitHub in 2025, and that AI-service leaks surged by 81%. Both figures suggest that sensitive data exposure is expanding quickly as AI-assisted development becomes more common.

That gap matters for two reasons.

First, secrets can be exposed before they ever become part of the source code.

Second, organizations are starting to think about AI governance more broadly, especially around what AI agents are allowed to access and send to third-party systems.

In that context, secret scanning at the hook level is useful not just as a developer safety feature, but also as part of a wider governance model for agentic AI use.

How it works

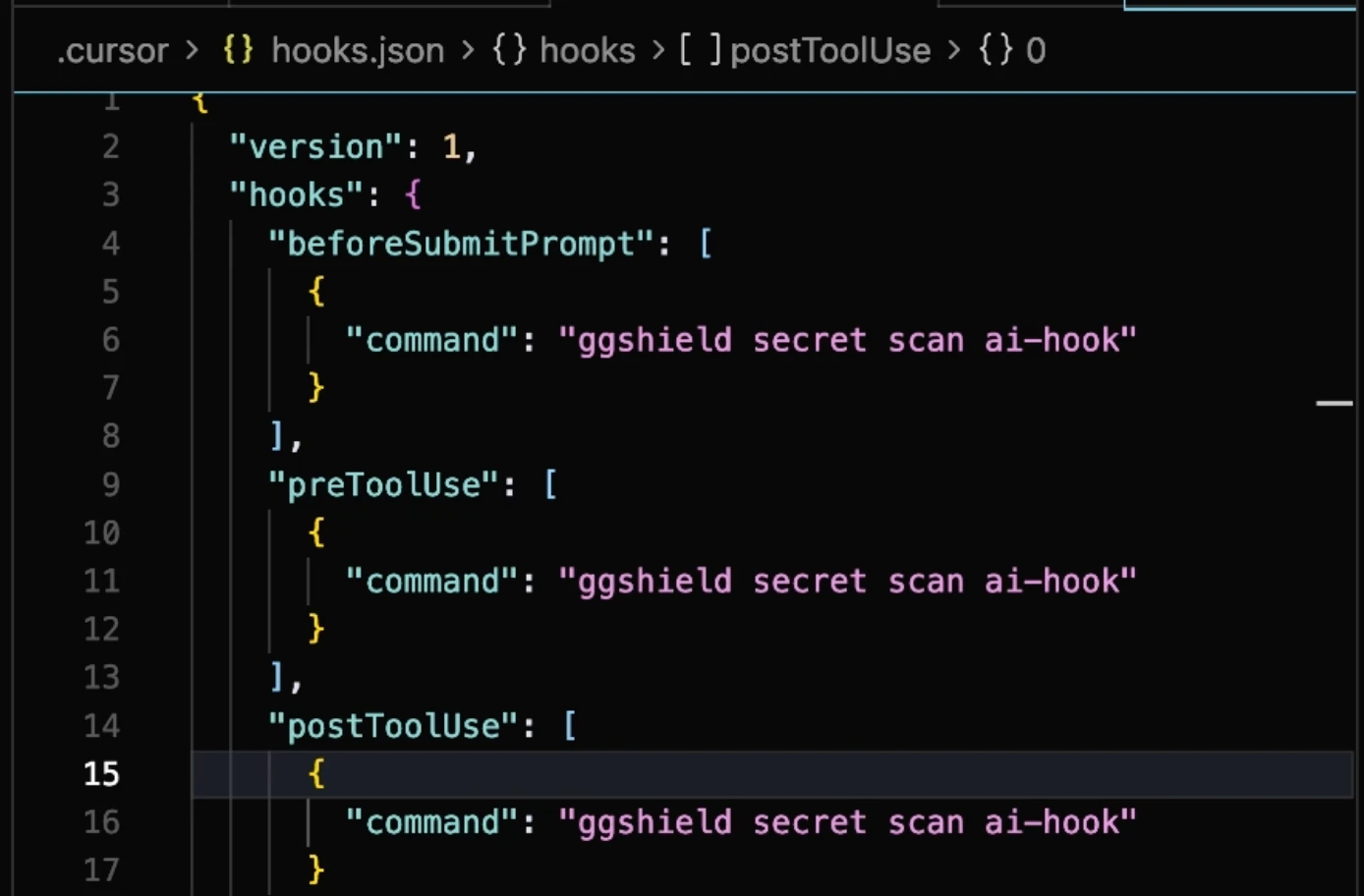

The setup is intentionally simple. Users install the integration with a ggshield command, either globally or for a specific project. ggshield then writes the relevant hook configuration for the selected tool.

For example, Cursor can be configured with:

ggshield install -t cursor -m global

Claude Code can be configured with:

ggshield install -t claude-code -m global

VS Code with GitHub Copilot is also supported through the same installation model.
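For teams that set up several tools at once, the two install commands shown above could be wrapped in a short script. The sketch below is an assumption about how one might automate this, not part of ggshield: `build_install_command` and `install_all` are hypothetical helpers, and only the tool names and the `global` mode that appear in this article are used. It defaults to a dry run that prints the commands rather than executing them.

```python
import shutil
import subprocess

# Tool names taken from the commands shown in this article. Other supported
# tools are left out because their identifiers are not given here.
DOCUMENTED_TOOLS = ("cursor", "claude-code")


def build_install_command(tool: str, mode: str = "global") -> list[str]:
    """Build the argv for one install command, rejecting undocumented tools."""
    if tool not in DOCUMENTED_TOOLS:
        raise ValueError(f"unknown tool: {tool!r}")
    return ["ggshield", "install", "-t", tool, "-m", mode]


def install_all(dry_run: bool = True) -> list[list[str]]:
    """Print (dry run) or execute the install command for each documented tool."""
    commands = [build_install_command(tool) for tool in DOCUMENTED_TOOLS]
    for cmd in commands:
        if dry_run or shutil.which("ggshield") is None:
            # Fall back to printing when ggshield is not on PATH.
            print("would run:", " ".join(cmd))
        else:
            subprocess.run(cmd, check=True)
    return commands


if __name__ == "__main__":
    install_all(dry_run=True)
```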

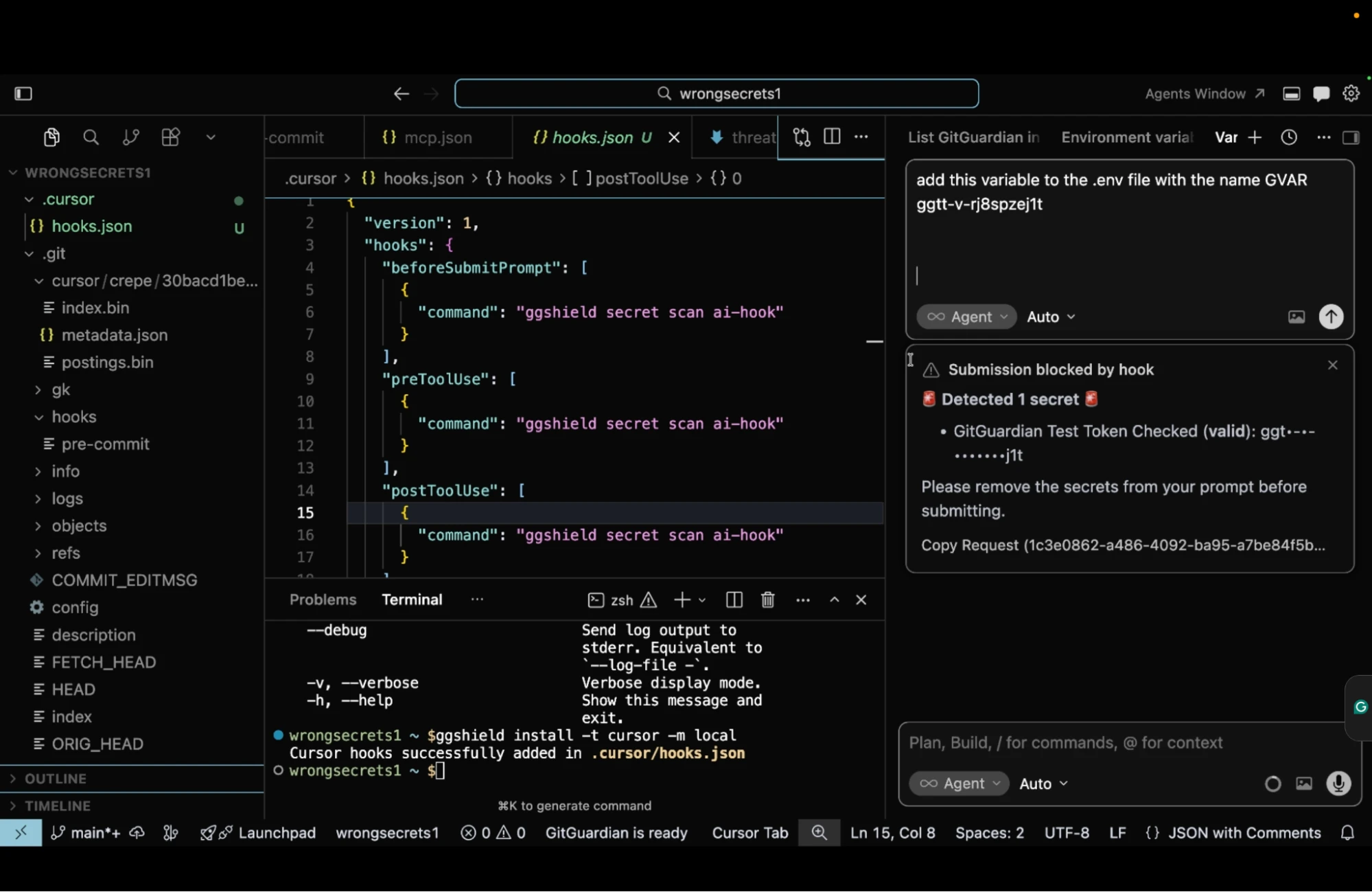

Once enabled, the hook runs automatically at the configured stages. If a secret is detected in a prompt or a pre-tool action, the workflow is blocked, and the developer is asked to remove the secret before retrying. For post-tool detections, GitGuardian sends a desktop notification instead.

The integration does not introduce a separate dashboard into the developer workflow. It relies on the standard hook behavior of each supported tool, which keeps the experience lightweight.

What developers see

When a secret is detected, the user sees a blocking message in the AI coding tool. The message identifies the issue and tells them to remove the secret before proceeding.

That matters because the warning appears at the point of action, not later in a repository scan or ticket. Developers get feedback at the moment the risk is introduced, which is usually when it is easiest to fix.

If the detection is a known false positive, users can ignore the last finding with GitGuardian’s existing workflow:

ggshield secret ignore --last-found

That ignore rule then applies to future scans, including AI hook scans.
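The idea of an ignore rule that carries over to later scans can be illustrated with a toy model. How ggshield actually stores and matches ignore rules is not described here, so everything below is an assumption made for illustration: the `IgnoreList` class, its methods, and the choice of a SHA-256 fingerprint are all hypothetical.

```python
import hashlib


def fingerprint(secret_value: str) -> str:
    """Hash the value so the ignore list never stores the secret itself."""
    return hashlib.sha256(secret_value.encode("utf-8")).hexdigest()


class IgnoreList:
    """Toy model of 'ignore the last finding, then skip it in future scans'."""

    def __init__(self):
        self._ignored = set()
        self._last_found = None

    def scan(self, detected_values):
        """Return detected values that are not ignored; remember the last one."""
        findings = [v for v in detected_values if fingerprint(v) not in self._ignored]
        if findings:
            self._last_found = fingerprint(findings[-1])
        return findings

    def ignore_last_found(self):
        """Add the most recent finding to the ignore list, if there is one."""
        if self._last_found is not None:
            self._ignored.add(self._last_found)


if __name__ == "__main__":
    rules = IgnoreList()
    print(rules.scan(["example-test-token"]))   # reported on the first scan
    rules.ignore_last_found()
    print(rules.scan(["example-test-token"]))   # skipped on a later scan
```

In this model the ignore list is shared state, so a rule recorded after one scan is honored by any later scan that consults the same list, whatever triggered it.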

Detection coverage

The feature uses the same GitGuardian detection engine that powers other ggshield secret scanning workflows. That engine covers more than 500 types of secrets.

That consistency is useful for teams already using GitGuardian elsewhere. It means they are not adopting a separate detection model just for AI tools. Instead, they are extending an existing secret scanning approach into a newer workflow where credentials are increasingly at risk.

Who is it for?

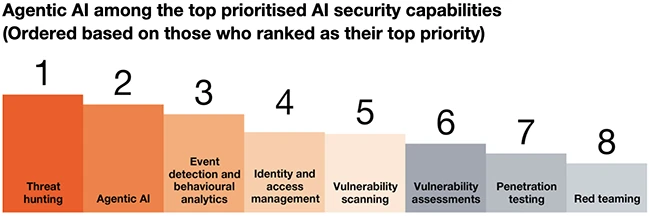

This capability is aimed at organizations that are already using AI coding assistants and want some guardrails without removing those tools from developer environments.

It is especially relevant for:

- Security teams concerned about credentials reaching LLMs or third-party services

- Platform teams rolling out AI assistants across engineering

- Regulated organizations that need more visibility and control over AI-assisted workflows

- Teams exploring MCP and agent governance as part of a broader non-human identity strategy

The strongest fit is likely in environments where AI adoption is moving faster than security policy, and teams need a practical way to reduce risk without slowing development to a crawl.

Final thoughts

AI coding assistants are introducing a new layer into the software development workflow, and that layer has its own security problems. Secrets exposure through prompts, tool calls, and agent actions is one of the more immediate ones because it can happen quietly and outside the controls organizations already trust.

GitGuardian’s approach is straightforward. Rather than trying to detect the issue afterwards, it scans those interactions in real time and blocks risky actions when possible. For teams looking to bring some security control into AI-assisted development without adding too much friction, that makes this capability worth a trial.