- Governing AI Adoption as It Outpaces Security Frameworks

- The New AI Attack Surface Is Mostly an Inventory Problem

- Inventory-First Visibility Across AI Assets, Cloud Services, Models, and MCP Servers

- AI Assets and Software – Find AI-Capable Hosts and What Runs on Them

- Cloud Services – See AI Services Running in Cloud Accounts

- Models – Move from “We Think We Have” to “Here Is the List”

- MCP Servers – Inventory AI Tool Integrations As a Security Surface

- Specialized LLM Scanning – Configurable, Repeatable for LLM-Specific Risks

- Enhanced Detections with OWASP LLM, MITRE ATLAS, EU AI Act mapping

- Reporting That Works for Both Security and Governance

- Scan Reports That Translate Testing into Posture

- Unified Dashboards for Executive Visibility

- Continuous AI Posture Management, Because AI Changes Every Week

- A Practical Rollout Plan for Security Leaders

- Take Control of AI Risk Without Slowing AI Delivery

- Frequently Asked Questions (FAQs)

- Contributors

- Related

Key Takeaways

- AI security means treating models, endpoints, and integrations as attack surfaces that change constantly and need continuous governance.

- Inventory-driven visibility is foundational to managing AI sprawl, uncovering hidden assets, and aligning security with innovation velocity.

- Specialized AI scanning tests for adversarial weaknesses specific to model behavior and inference endpoints, which host and web-app scanners were not built to cover.

- Mapping detections to frameworks like OWASP LLM and MITRE ATLAS bridges technical findings with regulatory and business imperatives.

- Continuous AI posture management ensures resilience, enabling organizations to scale AI innovation without compromising security or compliance.

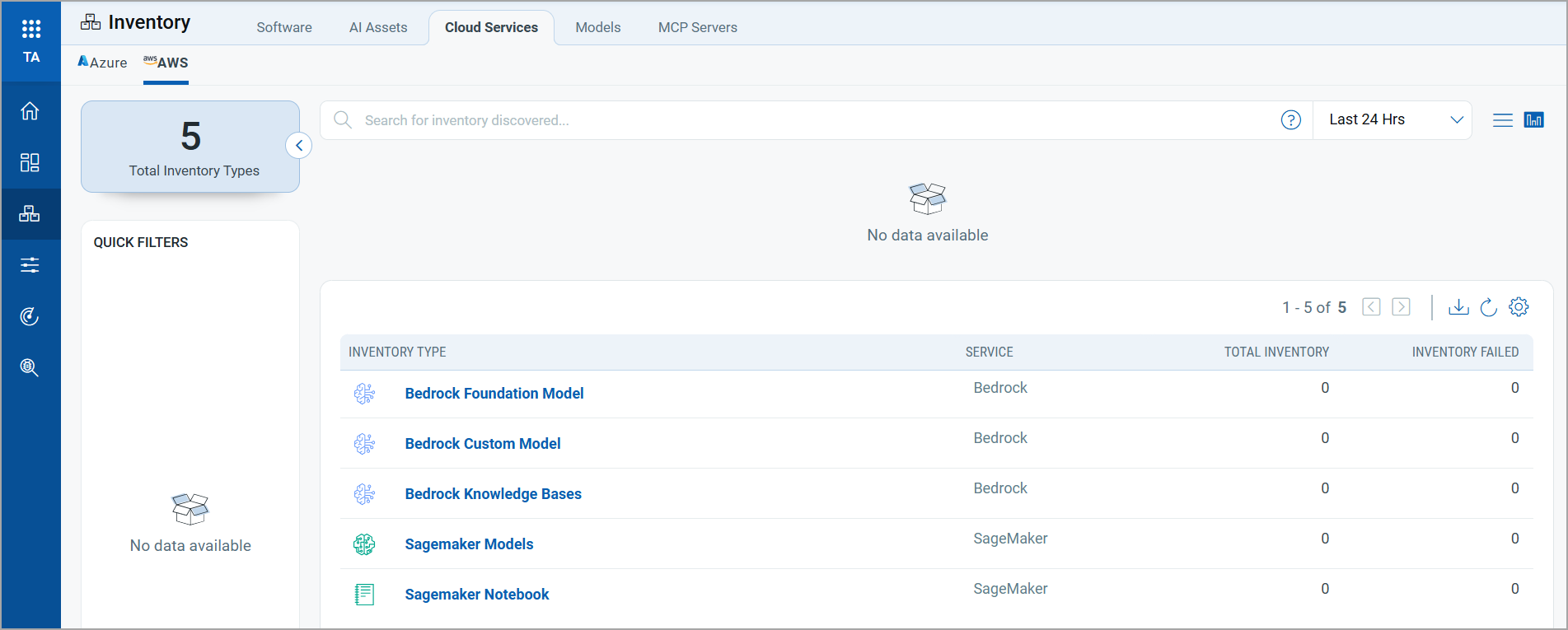

Governing AI Adoption as It Outpaces Security Frameworks

AI adoption inside enterprises is moving faster than governance. Models get embedded into apps, copilots, and internal workflows. Endpoints get spun up in cloud consoles. MCP servers get connected “just to test something,” then quietly become a production dependency.

That is how AI risk shows up in real life. Not as a single “model vulnerability,” but as a growing set of unmanaged assets, undocumented integrations, and exposed inference surfaces that your existing security stack was not built to inventory, test, and monitor.

Qualys TotalAI is designed to close that gap by treating AI as a first-class attack surface. It starts with discovery, then moves to model scanning, mapped detections, and reporting that cybersecurity leaders can operationalize.

The New AI Attack Surface Is Mostly an Inventory Problem

Most organizations think in terms of “approved AI platforms.” Attackers, however, do not follow approval boundaries. They look for what is reachable and what is misconfigured.

In practice, the AI footprint spreads across four places:

- AI-capable infrastructure – GPUs and AI software stacks sitting on hosts that are often managed like “special-purpose compute,” not like standard production infrastructure.

- Deployed model endpoints – Inference URLs that accept prompts and return outputs. These endpoints become business APIs, even when teams do not call them that.

- Cloud AI services – Managed services in Amazon Web Services and Microsoft Azure, where model deployments and AI services are created faster than central security can track.

- AI integrations and tool connectors (MCP servers) – Standardized MCP integrations give models access to tools and data sources. They also create a new control plane that needs visibility and governance.

TotalAI’s workflow reflects that reality: discover what exists, onboard what matters, scan it, then track detections over time.

Inventory-First Visibility Across AI Assets, Cloud Services, Models, and MCP Servers

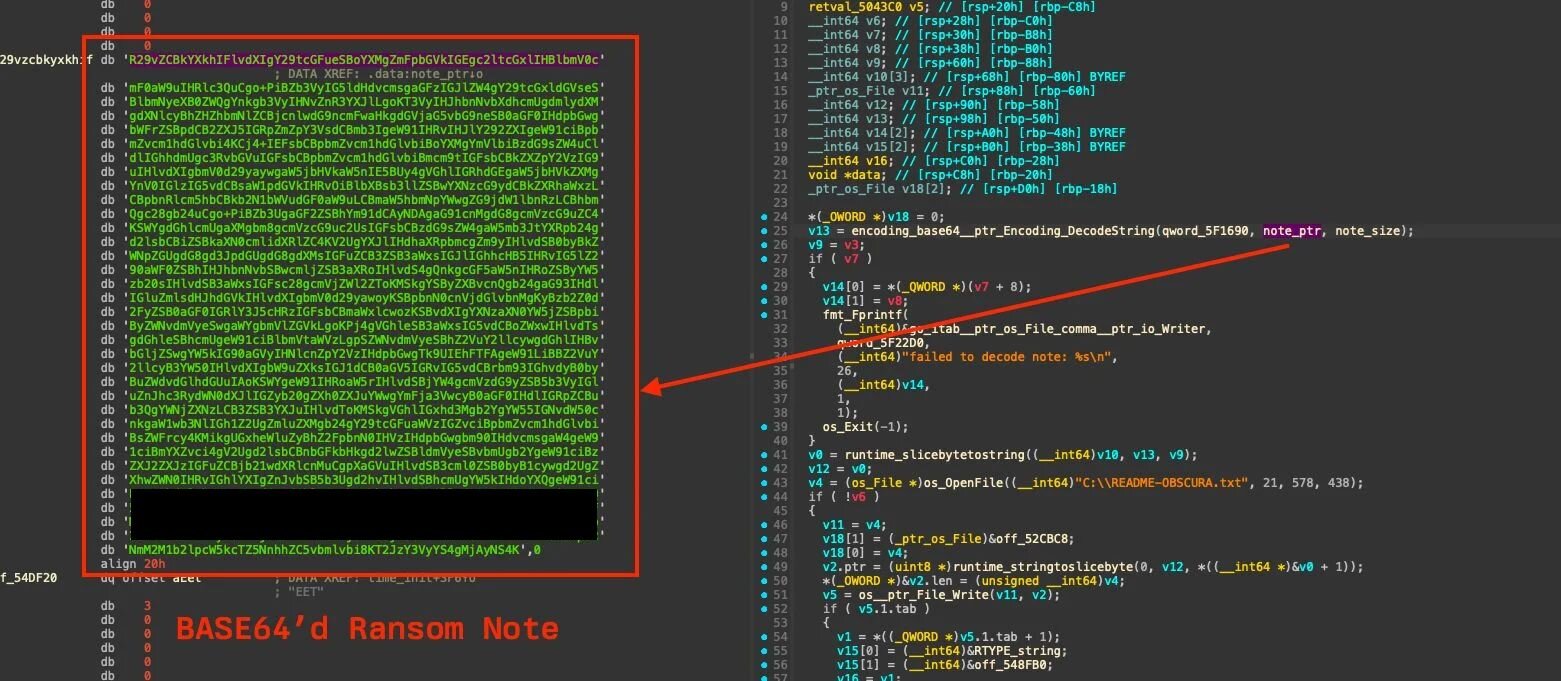

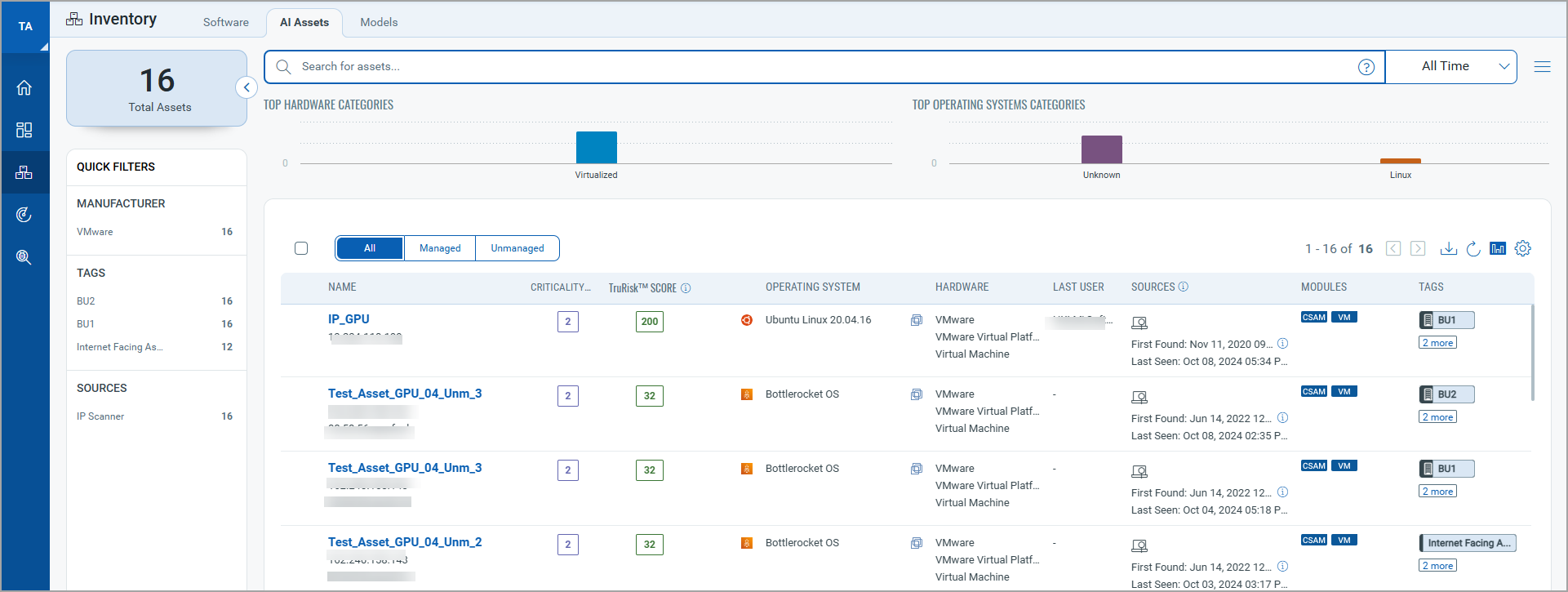

AI Assets and Software – Find AI-Capable Hosts and What Runs on Them

TotalAI Inventory includes:

- AI Assets – Assets where an AI fingerprint is detected. Today, that scope includes systems with GPUs and AI-related development or deployment software.

- Software – Packages and frameworks detected on infrastructure, useful for understanding where AI stacks exist and where they are spreading.

Model risk is often downstream of infrastructure drift. If the “AI box” becomes a snowflake that skips your normal hardening and patch cycles, you get predictable outcomes: exposed ports, weak credentials, stale dependencies, and shadow services.

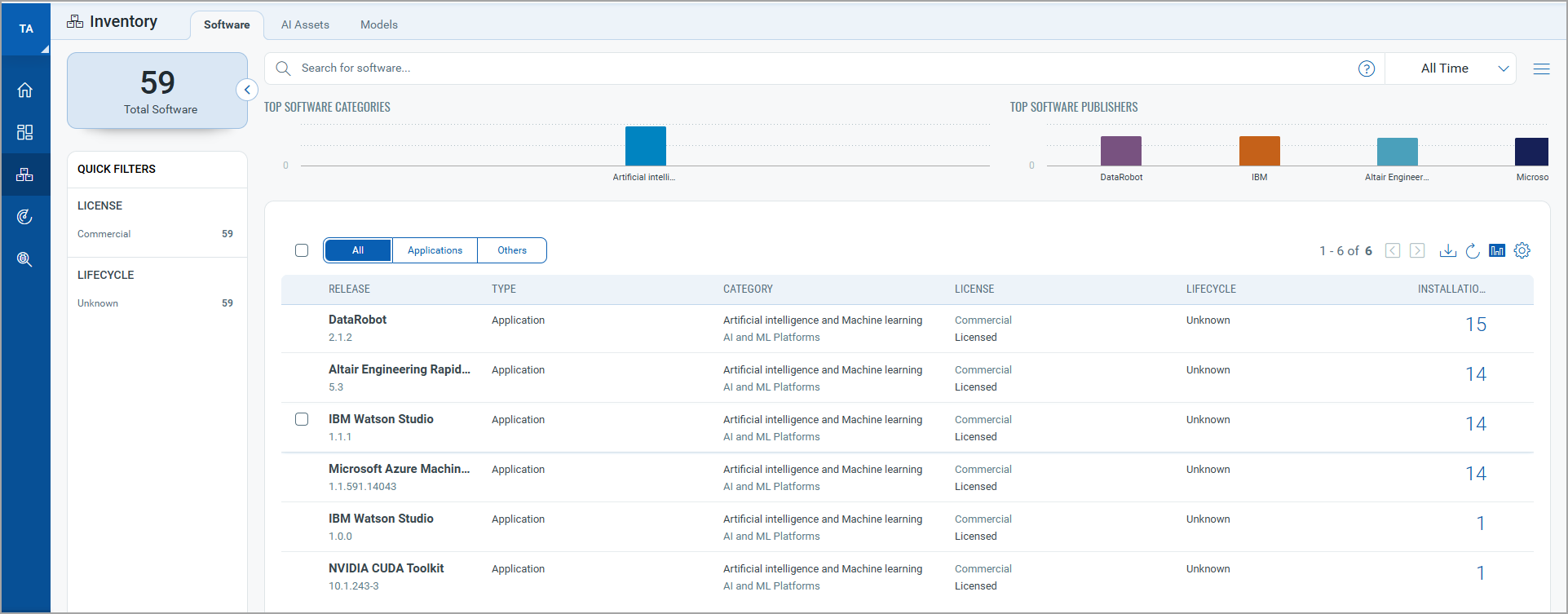

Cloud Services – See AI Services Running in Cloud Accounts

The Cloud Services inventory is built to show AI services running in your cloud account, and the model deployment details you need to onboard a model for scanning. This includes deployment metadata such as endpoint URLs, deployment names, and versions.

For security teams, this is where reality shows up. Cloud accounts already know what is deployed. TotalAI uses that signal, so you don’t have to guess based on tickets and spreadsheets.

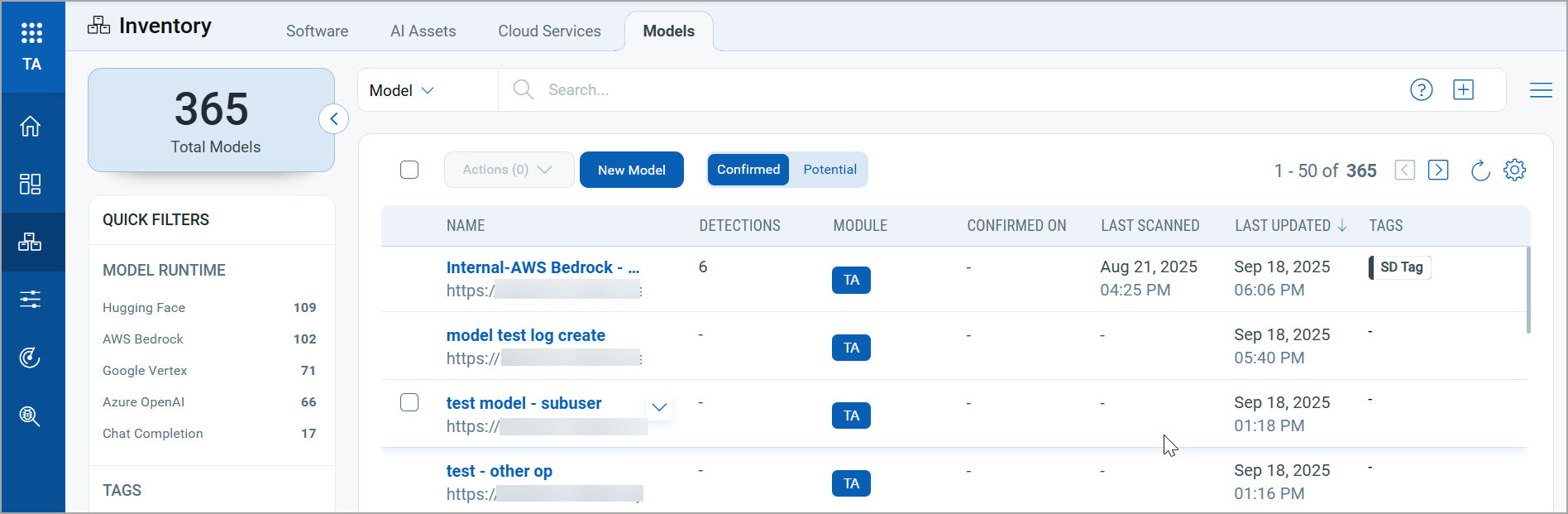

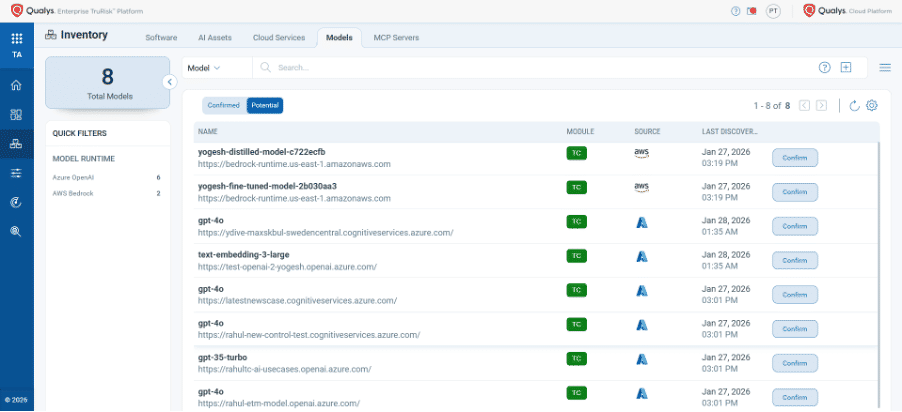

Models – Move from “We Think We Have” to “Here Is the List”

The Models view is where AI becomes governable.

- You can onboard models across supported runtimes, including AWS Bedrock, Azure AI, Google Vertex, Hugging Face, Chat Completion, and Databricks Chat (added in recent releases).

- TotalAI can also surface Potential models discovered from cloud inventory (notably from Azure sources), so teams can confirm and onboard them instead of missing them.

That confirmed-versus-potential split is important for governance. It lets you say, “These are scanned and owned,” and separately, “These exist but are not yet approved or assessed.”

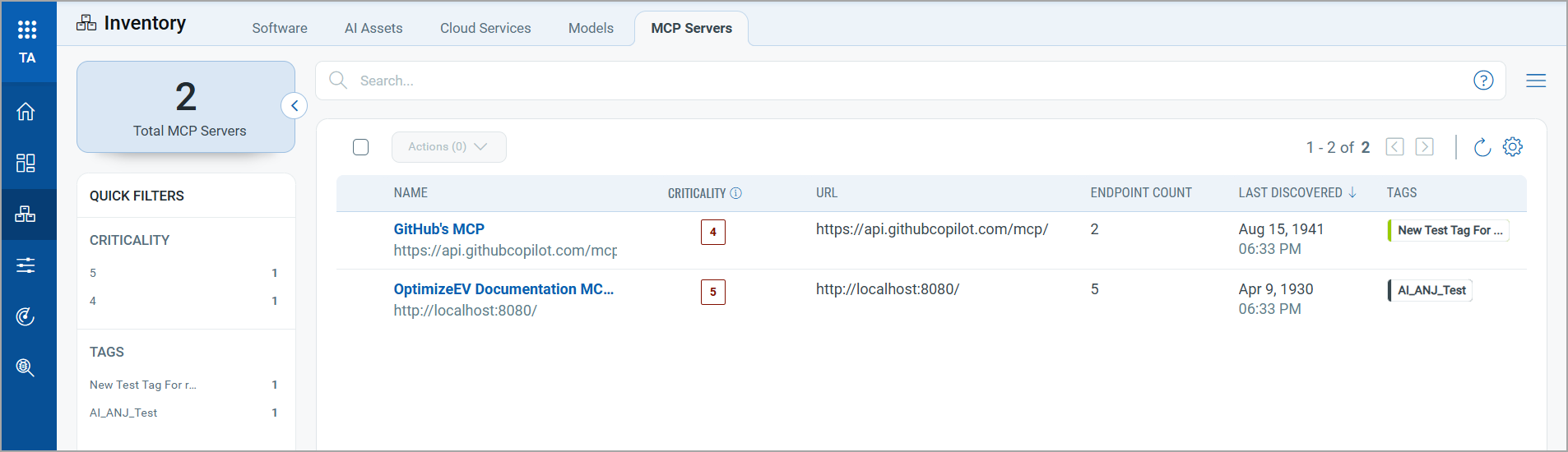
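One way to picture the confirmed-versus-potential split is as a partition over inventory records. The sketch below is illustrative only: `ModelRecord`, its fields, `split_inventory`, and the sample names are assumptions made for this example, not TotalAI's data model or API.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class ModelRecord:
    """Hypothetical inventory row; field names are assumptions for illustration."""
    name: str
    source: str                  # where the model was discovered, e.g. "azure"
    onboarded: bool              # True once confirmed and onboarded for scanning
    owner: Optional[str] = None  # owning team tag, if one has been assigned


def split_inventory(
    records: List[ModelRecord],
) -> Tuple[List[ModelRecord], List[ModelRecord]]:
    """Return (confirmed, potential) so each group can be reported separately."""
    confirmed = [r for r in records if r.onboarded]
    potential = [r for r in records if not r.onboarded]
    return confirmed, potential


# Made-up sample data.
inventory = [
    ModelRecord("support-copilot", "azure", True, "cx-platform"),
    ModelRecord("gpt-test-deploy", "azure", False),
    ModelRecord("fraud-summarizer", "aws", True, "risk-eng"),
]
confirmed, potential = split_inventory(inventory)
print("Scanned and owned:", [r.name for r in confirmed])
print("Exists, not yet assessed:", [r.name for r in potential])
```

Keeping the two lists separate, rather than hiding unconfirmed entries, is what lets a governance report state both populations explicitly.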

MCP Servers – Inventory AI Tool Integrations As a Security Surface

TotalAI also provides an MCP Servers inventory view with MCP name, URL, last discovered date, and associated endpoints, giving you a centralized view of MCP integrations.

For security teams, the value is simple: MCP sprawl is the new SaaS sprawl, except it can bridge models to data and actions. If you cannot see MCP servers, you cannot govern them.

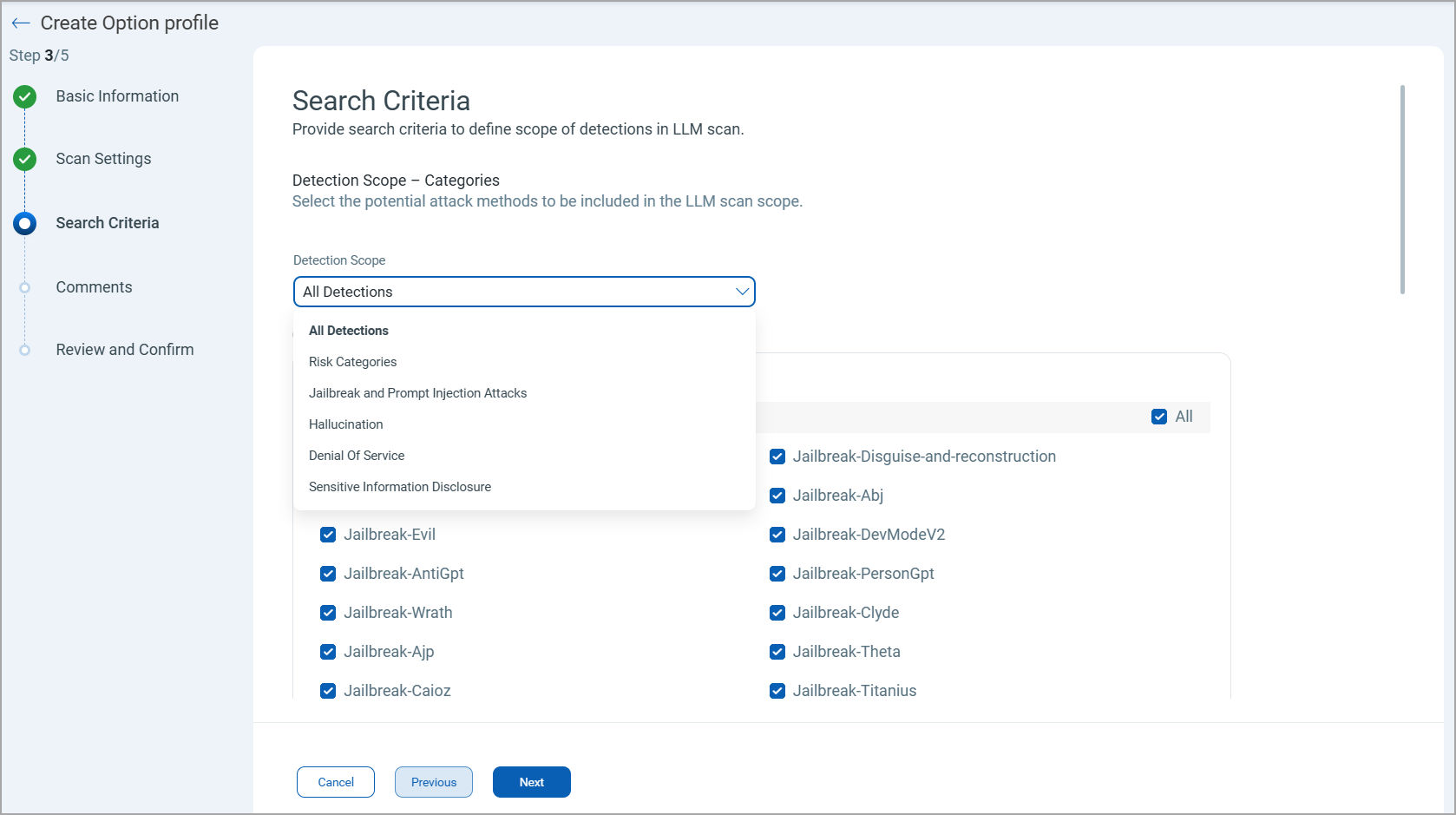
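As a rough sketch of what governing that inventory could look like, the snippet below compares discovered MCP servers against an approved list. The record fields mirror the columns named above (name, URL, last discovered date, endpoints), but the `McpServer` type, the `ungoverned` helper, the allowlist idea, and all sample values are assumptions for illustration, not a TotalAI export format or API.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable, List, Set, Tuple


@dataclass(frozen=True)
class McpServer:
    """Hypothetical record shaped after the inventory columns described above."""
    name: str
    url: str
    last_discovered: date
    endpoints: Tuple[str, ...]


def ungoverned(servers: Iterable[McpServer], approved_urls: Set[str]) -> List[McpServer]:
    """Return discovered servers whose URL is not on the approved list."""
    return [s for s in servers if s.url not in approved_urls]


# Made-up sample data.
discovered = [
    McpServer("ticketing-tools", "https://mcp.example.internal/tickets",
              date(2025, 1, 10), ("/search", "/create")),
    McpServer("scratch-test", "https://mcp.example.internal/scratch",
              date(2025, 1, 12), ("/run",)),
]
approved = {"https://mcp.example.internal/tickets"}

for server in ungoverned(discovered, approved):
    print(f"Review needed: {server.name} "
          f"({len(server.endpoints)} endpoint(s)) at {server.url}")
```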

Specialized LLM Scanning – Configurable, Repeatable for LLM-Specific Risks

Traditional scanners were built for hosts and web apps. Model scanning needs different knobs because the “attack surface” includes model behavior under adversarial prompting.

TotalAI uses Option Profiles to define scan settings and detection scope. Scan settings include parameters like temperature, top-k, top-p, max tokens, retry attempts, timeouts, and parallelism.

Detection scope lets you choose what to test, including:

- risk categories

- jailbreak susceptibility

- prompt injection attack sets

- multimodal jailbreak

- mixed-translation jailbreak

- package hallucination

- model denial of service

- sensitive information disclosure
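To make the idea of a repeatable profile concrete, here is a minimal sketch of scan settings and detection scope captured as one validated object. It is an assumption-laden illustration: the `OptionProfile` class, its field names, the default values, and the scope identifiers are invented for this example and do not reflect TotalAI's actual Option Profile schema or defaults.

```python
from dataclasses import dataclass
from typing import FrozenSet, List

# Scope names follow the list above; the identifiers themselves are illustrative.
KNOWN_SCOPES = frozenset({
    "risk_categories",
    "jailbreak",
    "prompt_injection",
    "multimodal_jailbreak",
    "mixed_translation_jailbreak",
    "package_hallucination",
    "model_denial_of_service",
    "sensitive_information_disclosure",
})


@dataclass(frozen=True)
class OptionProfile:
    """Hypothetical profile; default values are placeholders, not product defaults."""
    name: str
    detection_scope: FrozenSet[str]
    temperature: float = 0.7
    top_k: int = 40
    top_p: float = 0.9
    max_tokens: int = 512
    retry_attempts: int = 2
    timeout_seconds: int = 60
    parallelism: int = 4

    def problems(self) -> List[str]:
        """Return validation errors; an empty list means the profile is usable."""
        issues = []
        if self.temperature < 0:
            issues.append("temperature must be >= 0")
        if not 0 < self.top_p <= 1:
            issues.append("top_p must be in (0, 1]")
        if self.top_k < 1:
            issues.append("top_k must be >= 1")
        if self.max_tokens < 1 or self.parallelism < 1:
            issues.append("max_tokens and parallelism must be >= 1")
        unknown = sorted(self.detection_scope - KNOWN_SCOPES)
        if unknown:
            issues.append(f"unknown detection scope: {', '.join(unknown)}")
        return issues


prod = OptionProfile("prod-baseline", frozenset({"jailbreak", "prompt_injection"}))
typo = OptionProfile("dev-quick", frozenset({"promt_injection"}), top_p=1.5)
print(prod.problems())
print(typo.problems())
```

Freezing the profile and validating it up front is one way to ensure that two scans run a month apart really did use the same settings.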

Operationally, scans are launched against an onboarded model, with an option profile and scanner selection (external, internal, or tag-based). TotalAI supports internal scanning with 64-bit scanner appliances, and it can use perimeter scanning when appropriate.

If a model is reachable only from inside your organization, it has to be scanned internally. TotalAI uses internal Qualys scanners for these models.

Enhanced Detections with OWASP LLM, MITRE ATLAS, EU AI Act mapping

Finding “prompt injection” is not enough. CISOs need to answer three questions quickly:

- What happened?

- Why does it matter to the business and regulators?

- What do we do next?

TotalAI’s Detection Details are built around that flow. A detection includes the QID, attack association, and OWASP LLM category mapping, plus links to EU AI Act articles and MITRE ATLAS techniques for mapped QIDs.

Just as important, Detection Details preserve evidence. You can view the questions asked, the model’s answers, and TotalAI’s analysis response for a given finding. This evidence trail is what makes remediation actionable. It reduces the endless back-and-forth between security, engineering, and compliance because everyone can see the failing behavior, not just a label.
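The shape of such a detection can be sketched as a small record that carries its mappings and its evidence together. Everything here is hypothetical: the `Detection` and `Evidence` types, the field names, the placeholder QID and mapping strings, and the wording produced by `brief` are assumptions for illustration, not TotalAI's output format.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Evidence:
    question: str   # prompt sent to the model
    answer: str     # what the model returned
    analysis: str   # why the response counts as a failure


@dataclass(frozen=True)
class Detection:
    qid: str
    attack: str
    owasp_category: str
    atlas_techniques: List[str]
    eu_ai_act_articles: List[str]
    evidence: List[Evidence]


def brief(d: Detection) -> str:
    """Render one detection as answers to the three questions above."""
    mappings = ", ".join([d.owasp_category, *d.atlas_techniques, *d.eu_ai_act_articles])
    return (
        f"What happened: {d.attack} (QID {d.qid}), "
        f"{len(d.evidence)} failing exchange(s)\n"
        f"Why it matters: mapped to {mappings}\n"
        f"What next: reproduce with the recorded prompts, fix, then rescan"
    )


# Placeholder values only; the mapping strings are not real lookups.
sample = Detection(
    qid="0000000",
    attack="prompt injection",
    owasp_category="LLM01: Prompt Injection",
    atlas_techniques=["<ATLAS technique ID>"],
    eu_ai_act_articles=["<EU AI Act article>"],
    evidence=[Evidence("<prompt text>", "<model reply>", "<analysis text>")],
)
print(brief(sample))
```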

Reporting That Works for Both Security and Governance

Scan Reports That Translate Testing into Posture

TotalAI scan reports summarize severity, in-scope QIDs, failed QIDs, and successful jailbreak counts. They also show pass/fail breakdowns across OWASP LLM categories and attack categories, then list detailed results per QID with an appendix that captures scan mode, scanner type, and option profile settings. That is what you need for repeatable governance: same scope, same settings, measurable drift over time.
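Measuring drift between two reports that share scope and settings reduces to a set comparison over failed QIDs. The following is a minimal sketch under that assumption; the `qid_drift` function and the QID strings are made up for illustration and are not part of any TotalAI report format.

```python
from typing import Dict, Iterable, List


def qid_drift(
    previous_failed: Iterable[str],
    current_failed: Iterable[str],
) -> Dict[str, List[str]]:
    """Compare failed QIDs from two scans run with the same scope and settings."""
    prev, curr = set(previous_failed), set(current_failed)
    return {
        "new": sorted(curr - prev),         # failing now, passed or absent before
        "fixed": sorted(prev - curr),       # failed before, no longer failing
        "persisting": sorted(prev & curr),  # failing in both scans
    }


# Made-up QID labels for two consecutive scans of the same model.
last_scan = ["Q-101", "Q-204", "Q-310"]
this_scan = ["Q-204", "Q-310", "Q-415"]
print(qid_drift(last_scan, this_scan))
```

The comparison is only meaningful when both scans used the same option profile; otherwise a "fixed" QID may simply have been out of scope.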

Unified Dashboards for Executive Visibility

TotalAI integrates with Unified Dashboard (UD) and provides a default dashboard template with widgets for GPUs, AI assets, vulnerabilities, models by detection count, scan status, and detections categorized by OWASP names and categories. AI security becomes part of your existing operational cadence.

Continuous AI Posture Management, Because AI Changes Every Week

AI environments do not stand still. New models get deployed. Endpoints get reconfigured. MCP servers get added. Scopes expand quietly, especially during product launches and platform engineering pushes.

TotalAI supports a continuous loop:

- Discover AI assets and cloud AI services

- Surface potential models from cloud inventory

- Onboard models and standardize scan settings through option profiles

- Launch scans and review detections with evidence

- Report posture through scan reports and dashboards

A Practical Rollout Plan for Security Leaders

Week 1: Inventory and classification

- Turn on Cloud Services discovery for your cloud AI footprint.

- Review Potential models, confirm what’s legitimate, and tag ownership.

- Inventory MCP servers and baseline endpoint counts.

Weeks 2–3: Standardize scanning

- Create Option Profiles that fix scan behavior and detection scope for each of dev, staging, and prod.

- Onboard high-impact models first, then launch scans.

Week 4: Operationalize

- Use Model Detections to drive remediation queues by category and fail rate.

- Export Scan Reports into your risk and compliance evidence pack.

- Use EU AI Act and MITRE ATLAS mappings to brief legal, compliance, and enterprise risk in their language.
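Ordering a remediation queue by category and fail rate can be sketched in a few lines. This is an illustration under stated assumptions: the `(category, passed)` input shape, the `fail_rates` helper, and the sample results are invented here and do not describe how TotalAI exposes Model Detections.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def fail_rates(results: Iterable[Tuple[str, bool]]) -> List[Tuple[str, float]]:
    """Take (category, passed) pairs; return categories by fail rate, highest first."""
    totals: Dict[str, int] = defaultdict(int)
    fails: Dict[str, int] = defaultdict(int)
    for category, passed in results:
        totals[category] += 1
        if not passed:
            fails[category] += 1
    rates = [(c, fails[c] / totals[c]) for c in totals]
    # Sort by descending fail rate, then by name so ties are deterministic.
    return sorted(rates, key=lambda item: (-item[1], item[0]))


# Made-up test outcomes for one model.
results = [
    ("prompt_injection", False),
    ("prompt_injection", False),
    ("prompt_injection", True),
    ("jailbreak", False),
    ("jailbreak", True),
    ("package_hallucination", True),
]
for category, rate in fail_rates(results):
    print(f"{category}: {rate:.0%} failed")
```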

Take Control of AI Risk Without Slowing AI Delivery

AI innovation should move fast. Security should keep up without turning every model into a compliance fire drill.

TotalAI extends the Qualys Cloud Platform approach into AI by making AI assets, cloud AI services, models, and MCP servers visible, then pairing that visibility with specialized model scanning and evidence-backed detections mapped to OWASP LLM, EU AI Act context, and MITRE ATLAS techniques.

If you are thinking about scaling AI, treat models and their integrations like production infrastructure. Inventory them. Scan them. Track drift. Keep receipts.

Discover your AI exposure. Secure every model.

Frequently Asked Questions (FAQs)

Why is AI security a growing concern for enterprises?

Unmanaged assets, undocumented integrations, and exposed inference surfaces create risks that traditional security tools were not built to inventory, test, or monitor.

How does inventory-first visibility improve AI governance?

Inventory-first visibility uncovers hidden AI assets, cloud services, and integrations, enabling organizations to manage sprawl and align security with operational realities.

What makes AI-specific scanning essential?

AI-specific scanning addresses unique risks like prompt injection, jailbreak susceptibility, and model denial of service, which traditional scanners are not equipped to detect.

How do frameworks like OWASP LLM and MITRE ATLAS enhance AI security?

Mapping each detection to these frameworks ties a technical finding to a recognized category or technique, so security, compliance, and risk teams can discuss the same finding in terms of its business and regulatory impact.

How can organizations maintain AI security in rapidly evolving environments?

Continuous AI posture management ensures ongoing discovery, scanning, and governance, adapting to new deployments and configurations without slowing innovation.

Contributors

- Indrani Das

- Sheela Sarva

- Samrat Sreevatsa