The Mines of More-Agree-Ah

Once more I’m writing alone in my room at a Semgrep off-site, crackling fires and s’mores outside.

So I’ll be brief and just share a meme that made me smile:

If you, like me, could always use additional AI (sycophancy) x LOTR puns you can squeeze into your daily life, I give you:

Helm’s Deeply Agreeing With You

Isengard-anteed Agreement

Bag End-less Validation

Mount Doom-scrolling For Approval

Rohan-estly Just Agreeing With Everything

Preciousss Little Pushback

The Return of the Yes-King

There And Sycophantically Back Again

My Precious… Feedback Loop

Feel free to send me any LOTR puns / memes (they don’t need to be about AI).

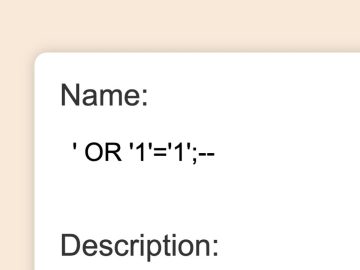

99% of organizations are already leveraging vibe coding. Developers use prompts to write code in seconds, but there’s a catch: when “vibes” replace manual syntax, developers can rapidly introduce risks.

From “slopsquatting” attacks on AI-hallucinated packages to overprivileged AI agents, the risks are scaling faster than your security team can patch.

Download the Executive Guide to Vibe Coding to learn how to keep your development fast and your applications secure.

AppSec

There’s Always Something: Secrets Detection at Engagement Scale with Titus

Praetorian’s Michael Weber, Noah Tutt, and Zach Grace announce Titus, an open-source secret scanner written in Go that detects and validates leaked credentials across source code, binary files, and HTTP traffic (Burp extension, Chrome extension), shipping with 450+ detection rules from Nosey Parker and MongoDB’s Kingfisher fork. The binary files it currently supports include Office documents (xlsx, docx, pptx), PDFs, Jupyter notebooks, SQLite databases, and just about every common archive format (zip, tar, …).

Samsung/CredSweeper

By Samsung: A tool to detect credentials in any directories or files, using regex, entropy and machine learning (architecture). From their paper: “Using a Voting Classifier (combination of Logistic Regression, Naïve Bayes and SVM) we are able to reduce the number of false positives considerably.”

Samsung/CredData

By Samsung: A labeled dataset designed for training and benchmarking credential scanning tools, consisting of 19.4M lines of code from 297 GitHub repositories containing ~73K manually labeled lines (4.5K true credentials). The dataset includes obfuscated credentials across 8 categories with metadata tracking line positions, credential types, and ground truth labels.

Love the sharing of a labeled dataset, awesome!

Rare Not Random

Zachary Rice explores using Byte-Pair Encoding (BPE) token efficiency, a measure of how “rare” or non-natural-language a string is, as an alternative to entropy for filtering false positives in secrets detection. In BPE, common words and subwords get merged into long tokens, while rare or unnatural strings get broken into many short tokens. So “lookingatcomputer” gets turned into three tokens (“looking”, “at”, “computer”), but “kj2h3f2fuaafewa” would get broken into individual bytes (and thus more tokens for a string of the same length). Zachary tested BPE on CredData and found that it performed well. Neat!
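To make the idea concrete, here is a minimal sketch of BPE token efficiency using a toy, character-level BPE trainer. This is not Zachary's implementation: the tiny `CORPUS`, the merge budget, and the function names are all assumptions for illustration, and a real filter would use a production tokenizer vocabulary rather than one trained on a single sentence.

```python
from collections import Counter

# Hypothetical toy corpus; a real tokenizer is trained on far more text.
CORPUS = "looking at the computer and looking at a secret token on the computer".split()


def merge_pair(symbols, pair):
    """Replace each adjacent occurrence of `pair` in `symbols` with one merged symbol."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return tuple(out)


def train_bpe(corpus, num_merges):
    """Learn an ordered list of merges by repeatedly merging the most frequent pair."""
    words = Counter(tuple(w) for w in corpus)  # each word starts as single characters
    merges = []
    for _ in range(num_merges):
        pairs = Counter()
        for symbols, freq in words.items():
            for a, b in zip(symbols, symbols[1:]):
                pairs[(a, b)] += freq
        if not pairs:
            break  # every word is already a single token
        best = max(pairs, key=lambda p: (pairs[p], p))  # deterministic tie-break
        merges.append(best)
        merged = Counter()
        for symbols, freq in words.items():
            merged[merge_pair(symbols, best)] += freq
        words = merged
    return merges


def tokenize(text, merges):
    """Split text into characters, then apply the learned merges in order."""
    symbols = tuple(text)
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return list(symbols)


def token_efficiency(text, merges):
    """Characters per token: higher looks like natural language, ~1.0 looks random."""
    if not text:
        return 0.0
    return len(text) / len(tokenize(text, merges))


MERGES = train_bpe(CORPUS, 50)

if __name__ == "__main__":
    for candidate in ("lookingatcomputer", "kj2h3f2fuaafewa"):
        print(candidate, tokenize(candidate, MERGES), round(token_efficiency(candidate, MERGES), 2))
```

A secrets scanner could then treat a low characters-per-token ratio as a signal that a matched string is "rare" enough to be a real credential, with the cutoff tuned on labeled data such as CredData.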

AI adoption doesn’t follow change control. It follows convenience. That means federated logins, OAuth sprawl, builder creds in prod, and agents operating at machine speed. This brief outlines a practical identity-first framework to secure AI in the real world.

Securing AI identity and gaining visibility seems critical

Cloud Security

Doing more with less and layoffs/senior talent attrition is definitely unrelated to the outages they’ve been having. Coding agents are awesome and the future, but guardrails and defense in depth are more important than ever.

How Security Tool Misuse Is Reshaping Cloud Compromise

Qualys’ Sayali Warekar walks through how threat actors have weaponized TruffleHog, a popular secrets detection tool, to discover and validate exposed cloud credentials in real-world attacks like Crimson Collective (Red Hat breach, 570GB stolen), TruffleNet (AWS SES abuse across 800+ hosts), and Shai-Hulud. To detect this attack in your environment, look for log entries where GetCallerIdentity (or other AWS API calls) have a user-agent string like “TruffleHog”, processes like “trufflehog[.exe]”, and rapid GetCallerIdentity calls or permission enumeration patterns.
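As a rough illustration of those log checks, here is a small sketch that scans already-parsed CloudTrail records for a TruffleHog user agent and for bursts of GetCallerIdentity calls. The field names (`eventName`, `userAgent`, `eventTime`, `sourceIPAddress`, `userIdentity.arn`) are standard CloudTrail record fields; the function name and the burst thresholds (5 calls in 1 minute) are assumptions you would tune for your environment, not values from the Qualys post.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def parse_time(ts):
    """Parse a CloudTrail eventTime such as '2025-01-01T12:00:00Z'."""
    return datetime.strptime(ts, "%Y-%m-%dT%H:%M:%SZ")


def find_suspicious(records, burst_count=5, burst_window=timedelta(minutes=1)):
    """Return (records with a TruffleHog user agent, principals with bursty GetCallerIdentity)."""
    ua_hits = []
    calls = defaultdict(list)
    for rec in records:
        user_agent = rec.get("userAgent") or ""
        if "trufflehog" in user_agent.lower():
            ua_hits.append(rec)
        if rec.get("eventName") == "GetCallerIdentity":
            arn = (rec.get("userIdentity") or {}).get("arn")
            calls[(arn, rec.get("sourceIPAddress"))].append(parse_time(rec["eventTime"]))

    bursty = []
    for key, times in calls.items():
        times.sort()
        # Sliding window: flag if any `burst_count` consecutive calls fit in `burst_window`.
        for i in range(len(times) - burst_count + 1):
            if times[i + burst_count - 1] - times[i] <= burst_window:
                bursty.append(key)
                break
    return ua_hits, bursty
```

Note that a user-agent match is trivially evaded by an attacker who changes the string, so the burst check (or broader permission-enumeration detection) is the more durable of the two signals.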

Locking down AWS principal tags with RCPs and SCPs

Aidan Steele describes how to use Resource Control Policies (RCPs) and Service Control Policies (SCPs) together to create trustworthy AWS principal tags, which can be useful for fine-grained access control, allowing only tagged roles to call sensitive APIs. The solution involves deploying a centralized tagger role via CloudFormation service-managed stacksets, using SCPs to restrict IAM principal tagging operations to only this role, and critically, using RCPs with two separate deny statements to prevent session tag injection from both non-tagger roles within the org and any principals outside the org (including OIDC/SAML IdPs and external accounts).

The type of detailed, thoughtful AWS/IAM chicanery you’d expect from Aidan, nice.

Supply Chain

SANDWORM_MODE: Shai-Hulud-Style npm Worm Hijacks CI Workflows and Poisons AI Toolchains

Socket’s research team discovered SANDWORM_MODE, a supply chain worm campaign spreading through 19 malicious npm packages that uses obfuscation to hide credential theft, exfiltrates data via the GitHub API and DNS tunneling, persists via git hooks, and propagates automatically using stolen npm/GitHub tokens. The worm immediately exfiltrates crypto keys, then targets password managers (Bitwarden, 1Password, LastPass), injects malicious MCP servers into AI coding assistants (Claude, Cursor, VS Code Continue, Windsurf) with embedded prompt injections that silently steal SSH keys and AWS credentials, and propagates by injecting dependencies/workflows into repos.

The malware contains dormant capabilities including a polymorphic engine configured to use local Ollama (deepseek-coder:6.7b) for self-rewriting and a destructive dead switch that wipes the home directory when both GitHub and npm access are lost.

I don’t know what this says about me, but when I see supply chain attacks with complex post exploitation/persistence functionality, I think, “Neat, that’s clever. That’s cool that it’s not just an amateur hour postInstall script that obviously pipes a shady URL to bash.”

Clinejection – Compromising Cline’s Production Releases just by Prompting an Issue Triager

Adnan Khan found that a prompt injection vulnerability in Cline’s (now removed) Claude Issue Triage workflow allowed any GitHub user to compromise production releases (millions of devs across VS Code Marketplace and OpenVSX) by injecting malicious instructions into issue titles, causing Claude to execute arbitrary code via npm install from an attacker-controlled fork. The attack chain leverages GitHub Actions cache poisoning to pivot from the triage workflow and steal secrets (see Adnan’s neat Cacheract tool).

So likely someone was aware of Adnan’s work, because he’s found a number of high profile supply chain issues in the past, so they watched his testing, and then just scooped up and used his PoC. Very smart.

Blue Team

Mapping Deception with BloodHound OpenGraph

SpecterOps’s Ben Schroeder describes how to use OpenGraph and BloodHound to strategically place deception technologies by mapping attack paths across Active Directory and third-party systems like GitHub and Ansible. The post introduces deceptionClone, a utility for modeling deception nodes and edges in OpenGraph, and also calls out F4keH0und, a PowerShell tool that analyzes SharpHound data to identify opportunities for creating canary accounts. The post also shows merging GitHound and AnsibleHound collections to visualize cross-technology attack paths, such as planting honey credentials in GitHub artifacts that lead to a deceptive Ansible Tower job template.

ClickFix: Stopped at ⌘+V

Patrick Wardle describes a simple defense against ClickFix attacks (social engineering that tricks users into pasting malicious commands into Terminal) by detecting Command+V keypresses and checking if the frontmost application is a terminal. When a paste is detected, the implementation pauses the terminal process via SIGSTOP, displays the clipboard contents to the user for confirmation, and clears the pasteboard if blocked. This prevention has been implemented in BlockBlock.
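The decision flow described above can be sketched in a few lines. This is only the logic, not BlockBlock's implementation (which hooks real macOS key events): `PasteEvent`, `handle_paste`, and the `confirm` callback are hypothetical names, and the set of terminal bundle identifiers is an assumed, incomplete list.

```python
from dataclasses import dataclass
from typing import Callable

# Assumed, incomplete set of terminal app bundle identifiers.
TERMINAL_BUNDLE_IDS = {"com.apple.Terminal", "com.googlecode.iterm2"}


@dataclass
class PasteEvent:
    key: str                  # the key pressed, e.g. "v"
    command_down: bool        # whether the Command modifier was held
    frontmost_bundle_id: str  # bundle ID of the frontmost application
    clipboard: str            # current pasteboard contents


def is_terminal_paste(event: PasteEvent) -> bool:
    """True when the event is Command+V and the frontmost app is a known terminal."""
    return (
        event.command_down
        and event.key.lower() == "v"
        and event.frontmost_bundle_id in TERMINAL_BUNDLE_IDS
    )


def handle_paste(event: PasteEvent, confirm: Callable[[str], bool]) -> str:
    """Return 'allow' or 'block_and_clear' for a paste event.

    A real tool would pause the terminal process (SIGSTOP) before asking,
    resume it afterwards, and clear the pasteboard when the user declines.
    """
    if not is_terminal_paste(event):
        return "allow"
    if confirm(event.clipboard):  # show the clipboard contents and ask the user
        return "allow"
    return "block_and_clear"
```

Passing the confirmation prompt in as a callback keeps the decision logic separate from the UI and from process control.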

GitLab Threat Intelligence Team reveals North Korean tradecraft

Fascinating deep dive by the GitLab threat intelligence team sharing indicators of compromise (IOCs) and case studies for North Korea’s Contagious Interview and fake IT worker campaigns. IOCs include email addresses, JavaScript malware dropper URLs hosted on services like Vercel, malicious NPM packages, proxy IP addresses, synthetic persona emails, phone numbers of China-based cell members, etc.

The case studies are neat: the financial records and administrative documents from a North Korean IT worker cell operating out of Beijing (quarterly income performance for individual members), synthetic ID generation at scale, and more.

AI + Security

wardgate/wardgate

Give AI agents API access without giving them your credentials. Wardgate is a security gateway that sits between AI agents and the outside world — isolating credentials for API calls and gating command execution in remote environments (conclaves).

lukehinds/nono

By Luke Hinds: A capability-based sandbox for AI agents and POSIX processes that uses kernel-level enforcement (Landlock on Linux, Seatbelt on macOS) to make unauthorized operations impossible rather than relying on policy-based filtering. The tool blocks dangerous commands by default (rm, dd, chmod, sudo, package managers), provides granular filesystem permissions via --allow/--read/--write flags, and includes built-in profiles for Claude Code, OpenCode, and OpenClaw.

100+ Kernel Bugs in 30 Days

Yaron Dinkin and Eyal Kraft describe how they built an AI agent swarm to automatically reverse engineer and audit Windows kernel drivers at scale. They analyzed 202 high-risk drivers and found 521 potential vulnerabilities across 158 unique driver binaries, which after manual triage they believe represent ~100 genuinely exploitable local privilege escalation (LPE) bugs. The experiment cost $600 total: ~$3 per target and $4 per bug. Their pipeline:

Scrape drivers from the MSFT update catalog, OEM sites, and public driver repositories.

Preprocess them and identify good targets.

Analyze each binary with a council of LLM agents: a decompilation agent, an attack surface agent that identifies functions worth auditing, and a code audit agent that inspects each target for memory corruption bugs.

Virtualize – They created a custom VM-based harness for loading drivers on kernel-debugged Windows machines controlled by agents.

Validate – Using the harness, the agents iteratively create Python PoC scripts per finding, effectively performing guided fuzzing until the machine crashes. The BSOD crash dump is then analyzed to confirm the vulnerability triggered correctly.

They manually confirmed and reported 15 vulnerabilities (average CVSS 8.2) to vendors including AMD, Intel, NVIDIA, Dell, Lenovo, and IBM, but after 90+ days only Fujitsu had patched and assigned a CVE.

“The biggest performance leap was achieved by “closing the loop” and giving the agent direct feedback on exploitation success using our VM-based kernel-debugging harness. Agents that can try to bugcheck the machine over-and-over again are tomorrow’s fuzzers, and with enough compute they’re 100x more dangerous in the wrong hands.”

Giving agents the ability to try a bunch of things and validate the results without human involvement is

Things Are Getting Wild: Re-Tool Everything for Speed

Last year Phil Venables had an incrementalist view of the cybersecurity impact of AI, but he now believes it will create a short-term crisis in which attackers gain a great advantage, though in the long run defenders will win out. The four major pillars of concern: a massive increase in vulnerabilities from AI-generated code, attackers industrializing exploitation with AI tooling, the ability to fake everything (content, people, companies), and unpredictable emergent behaviors from trillions of interacting agents.

However, defenders can leverage their structural advantages (environmental control, specific context, and data access), implement strong baseline controls and continuously monitor them (authentication, segmentation, fast patching, detection/response), leverage AI for vulnerability discovery and auto-remediation, and use defensive agent swarms. Defenders need to prioritize finding and fixing whole classes of vulnerabilities through frameworks and tooling, run their OODA loop faster than attackers, and adopt AI-driven red teaming to iteratively harden defenses.

XBOW’s Oege de Moor also writes about this idea here and here, calling this the “Chaos Phase,” arguing that in the short term attackers will better operationalize AI / build autonomous hacking tools. “Security programs that hold up under pressure are shifting toward continuous validation. Systems that relentlessly test assumptions, controls, and exposure in the background, without waiting for humans to initiate every action.”

Wrapping Up

Have questions, comments, or feedback? Just reply directly, I’d love to hear from you.

If you find this newsletter useful and know other people who would too, I’d really appreciate it if you’d forward it to them.

P.S. Feel free to connect with me on LinkedIn