VoidLink shows that AI-assisted malware is now a mature, operational tool rather than a lab experiment, compressing what once required a full team into days of work by a single developer.

At the same time, threat actors are cautiously testing self-hosted models, abusing agentic AI architectures, and probing enterprise GenAI usage as a fresh attack surface, with data leakage already visible at scale.

OPSEC mistakes by VoidLink's author exposed detailed artifacts, including sprint plans, architecture, and code generated via a commercial AI-powered IDE. These artifacts show an engineering effort estimated at months of work being compressed into less than a week, yielding over 88,000 lines of functional code.

Crucially, nothing in the binaries themselves revealed that an AI agent had done most of the heavy lifting; analysts initially assumed a coordinated team based solely on VoidLink’s quality and modular design.

Check Point Research’s VoidLink investigation confirms that a sophisticated, cloud‑first Linux framework with modular C2, rootkits, and extensive post‑exploitation features was largely developed using AI.

This sets an important precedent: AI-assisted development should be treated as a default hypothesis when assessing advanced toolchains, even if static or dynamic analysis shows no obvious AI “fingerprints.”

AI-Assisted Malware

VoidLink also illustrates that methodology matters more than raw model access. The developer used a Spec-Driven Development flow, in which Markdown specifications defined goals, modules, coding standards, and acceptance criteria that the AI then implemented sprint by sprint.

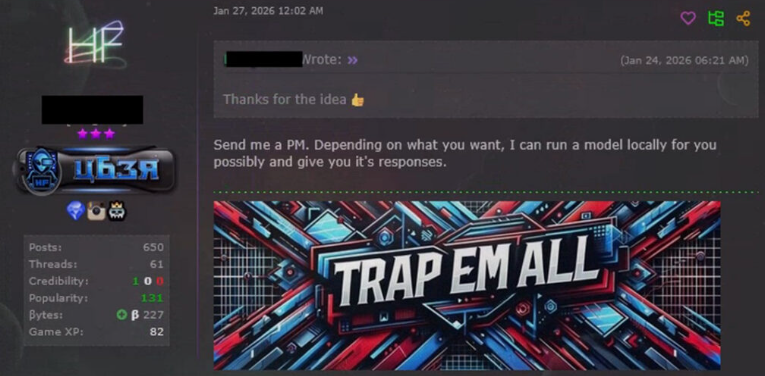

Users with malware and hacking backgrounds are installing uncensored model variants such as wizardlm-33b-v1.0-uncensored and openhermes-2.5-mistral, and prompting them with comprehensive malicious wishlists spanning ransomware, keyloggers, phishing kits, and exploit code.

VoidLink's spec-driven workflow mirrors the agentic AI shift in legitimate engineering tools such as agent-based IDEs, where structured project files orchestrate autonomous code generation and testing.

By contrast, most visible cybercrime forum activity still revolves around unstructured prompting: asking models for “ransomware” or “stealth loader” snippets and pasting the results into ad-hoc projects, which tends to yield noisy, unreliable output.

The more capable pattern, combining deep domain expertise with disciplined, spec‑driven agent workflows, leaves far fewer traces but is now proven to produce team-grade malware in days.

Across underground discussions, actors increasingly explore uncensored local LLMs to escape rate limits, moderation, and logging, comparing variants and hardware stacks for running “unrestricted” models.

Yet both public research and forum threads converge on the same conclusion: without significant investment in hardware, tuning, and evaluation, self-hosted models hallucinate frequently and often fail to meet the quality bar required for robust evasion and exploit reliability.

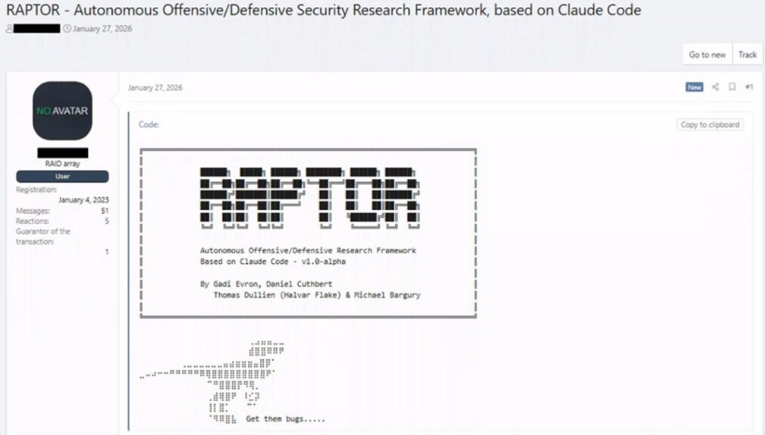

Even in research frameworks such as RAPTOR, which turns Claude Code into an autonomous security agent via markdown skill files, evaluations show commercial frontier models consistently producing compilable exploit code while local models remain “often broken.”

Experienced operators echo this in candid assessments, calling local deployments “more burden than productive” and continuing to lean on commercial systems despite tightening safeguards.

Jailbreaking shifts to agent architecture

Traditional single‑prompt jailbreaks are losing ground as providers harden safety layers and clamp down on easily shared payloads.

RAPTOR's own evaluation results add a further data point on the commercial versus self-hosted question discussed earlier.

In response to hardened single-prompt defenses, attackers are pivoting from conversational tricks to abusing the configuration layers of agentic tools. They use project files that define an AI agent's role, constraints, and behavior to disable safeguards and silently reorient the system toward offensive tasks.

A packaged “Claude Code jailbreak” exemplifies this trend: instead of injecting prompts in chat, it weaponizes the CLAUDE.md project configuration, redefining the agent as a malware author and suppressing safety rules when the project loads.

Cost estimates for self-hosted models range from $5,000 to $50,000 depending on the desired performance, with training timelines of 3–12 months and frank admissions that models “hallucinate a lot” without extensive investment.

The CLAUDE.md jailbreak is structurally similar to VoidLink's markdown-driven workflow and RAPTOR's skill definitions: in all three, the real control plane is not the model weights but the documentation layer that governs what the agent builds and how it behaves.
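If the documentation layer is the control plane, defenders can treat agent instruction files as reviewable artifacts. The following is a minimal defensive sketch, not a vetted detection rule set: it flags lines in agent configuration files that appear to redefine the agent's role or switch off safeguards. The regex patterns, the `scan_text` and `scan_repo` helper names, and the inclusion of `AGENTS.md` alongside `CLAUDE.md` are all illustrative assumptions.

```python
import re
from pathlib import Path

# Hypothetical heuristics (assumptions for illustration, easy to evade):
# phrases that try to redefine the agent's role or disable safeguards.
SUSPICIOUS_PATTERNS = {
    "safeguard_override": re.compile(
        r"\b(ignore|disable|bypass|suppress)\b.{0,40}"
        r"\b(safety|safeguards?|guardrails?|restrictions?|polic(?:y|ies))\b",
        re.IGNORECASE,
    ),
    "role_redefinition": re.compile(
        r"\byou are (now )?(a|an)\b.{0,40}\b(malware|exploit|ransomware)\b",
        re.IGNORECASE,
    ),
    "refusal_suppression": re.compile(r"\bnever (refuse|decline|warn)\b", re.IGNORECASE),
}

# Assumed file names; CLAUDE.md is the one named in the article.
AGENT_CONFIG_NAMES = {"CLAUDE.md", "AGENTS.md"}


def scan_text(text):
    """Return (line_number, label, line) tuples for every suspicious line."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for label, pattern in SUSPICIOUS_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, label, line.strip()))
    return findings


def scan_repo(root):
    """Scan agent instruction files under root; return {path: findings}."""
    results = {}
    for path in Path(root).rglob("*.md"):
        if path.name in AGENT_CONFIG_NAMES:
            hits = scan_text(path.read_text(encoding="utf-8", errors="replace"))
            if hits:
                results[str(path)] = hits
    return results
```

A check like this could run before a cloned or downloaded project is opened in an agentic tool. Keyword matching will miss paraphrased instructions, so it complements rather than replaces manual review of such files.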

Alongside development assistance, AI is starting to appear as a live element inside offensive pipelines, with frameworks like RAPTOR orchestrating static analysis, fuzzing, exploit generation, and triage via autonomous agents powered by model backends.

Criminal interest in these architectures, combined with their open-source availability, suggests similar agentic workflows will increasingly be adapted for private offensive use.

On the defensive side, enterprise GenAI adoption is rapidly expanding, and with it, a measurable leakage problem.

Recent cloud and threat reporting shows that roughly one in every 31 GenAI prompts involves high‑risk sensitive data, with about 90% of GenAI‑using organizations affected and prompt volumes per user climbing steadily.
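For scale, one in 31 prompts is roughly 3.2% of all prompts. A pre-send check is one way an organization might measure and reduce this kind of leakage; the sketch below is a simplified assumption of how such a filter could look, not a description of any vendor's product. The detector set and the function names `classify_prompt`, `redact_prompt`, and `risky_share` are hypothetical, and real deployments would need far broader coverage.

```python
import re

# Illustrative detectors only (assumed, deliberately incomplete).
DETECTORS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def classify_prompt(prompt):
    """Return the sorted names of all detectors that match the prompt."""
    return sorted(name for name, pat in DETECTORS.items() if pat.search(prompt))


def redact_prompt(prompt):
    """Replace every detected value with a labeled placeholder."""
    for name, pat in DETECTORS.items():
        prompt = pat.sub(f"[REDACTED:{name}]", prompt)
    return prompt


def risky_share(prompts):
    """Fraction of prompts that trigger at least one detector."""
    if not prompts:
        return 0.0
    flagged = sum(1 for p in prompts if classify_prompt(p))
    return flagged / len(prompts)
```

Tracking `risky_share` over time gives a local counterpart to the reported one-in-31 figure, while `redact_prompt` shows the simplest mitigation: stripping matches before the prompt leaves the organization.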

As organizations deploy more tools and even local GenAI infrastructure, AI becomes both a productivity layer and an exposed surface that adversaries and careless insiders can exploit.
