Logs and telemetry are the foundation of modern cybersecurity. They enable threat detection, incident response, forensic investigation, and compliance across endpoints, networks, and cloud environments. Yet, despite their importance, high‑quality security attack logs are notoriously difficult to collect, especially at scale.

Real-world security telemetry consists largely of benign activity that repeats across environments, with malicious activity appearing only rarely. Gathering, labeling, and maintaining datasets with real attack logs is costly and operationally challenging. It requires not only labeling malicious activities, but also fully reconstructing attack scenarios. These challenges significantly slow detection engineering and limit the quality of both rule-based detection authoring and anomaly-detection approaches.

In this post, we explore a different path: using AI to generate realistic, high-fidelity synthetic security attack logs. By translating attacker behaviors, expressed as tactics, techniques, and procedures (TTPs), directly into structured telemetry, we aim to accelerate detection development while preserving realism and security.

Why is this work important for Microsoft Defender customers?

For Microsoft Defender customers, this work is crucial because it directly addresses the challenge of obtaining high-quality, realistic security attack logs needed for effective threat detection and response. By leveraging AI-driven synthetic log generation, organizations can accelerate the development of detection rules and AI-based automation approaches, while ensuring privacy and reducing operational overhead. Synthetic logs enable customers to simulate a broader range of attack scenarios—including rare and emerging threats—without exposing sensitive data or relying on costly lab-based simulations. Ultimately, this approach enhances the agility and effectiveness of Microsoft Defender detection and response capabilities, helping customers stay ahead of evolving cyber threats.

Why Synthetic Security Logs in addition to Lab Simulations?

Synthetic data has been widely adopted in various fields as a privacy-conscious substitute for real data, and it offers even greater advantages in cybersecurity. It enables the creation of safe, shareable datasets that avoid exposure of sensitive customer information, allows simulation of rare or emerging attacks that are challenging to observe in real environments, accelerates the process of detection engineering and testing, and supports reproducible experiments for benchmarking and evaluation.

While synthetic logs are not a replacement for all lab-based validation, they can complement lab simulations by speeding up early-stage detection design, testing, and coverage expansion. Traditionally, generating realistic attack telemetry requires executing real attacks in controlled lab environments. While accurate, this approach is slow, labor‑intensive, and difficult to scale. It also limits agility for the security teams responsible for defending our systems and delays the rollout of new threat detections into production. This blog examines whether AI-assisted synthetic log generation can provide similar fidelity, without the operational overhead of lab‑based attack execution.

Core Idea: From TTPs to Logs

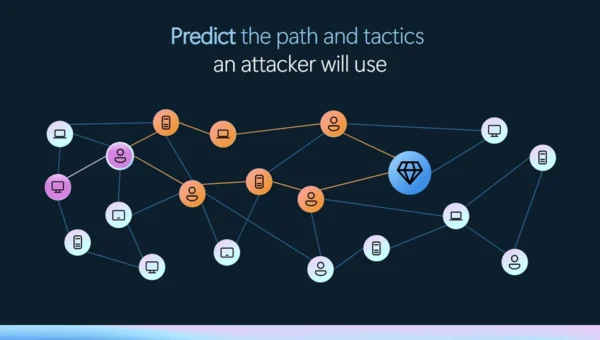

Attackers can carry out the same TTP through various actions that exploit different processes. At a high level, the proposed workflow consumes “TTP + Action” as input and produces structured security logs as output.

Input: High‑level attacker TTPs from the MITRE ATT&CK framework [1], a widely used knowledge base of adversary tactics and techniques, and concrete attacker actions. See the example below.

| Tactic | Technique | Action |
| --- | --- | --- |
| Stealth | T1202 – Indirect Command Execution | The attackers executed forfiles and obfuscated their actions using variable expansion of %PROGRAMFILES and hex characters (for example, 0x5d). They obfuscated the use of echo, open, read, find, and exec to extract file contents, then passed the output to a Python interpreter for execution. |

Output: Realistic log entries with correctly populated fields such as “Command Line”, “Process Name”, “Parent Process Name”, and other relevant telemetry fields.

Goal: The goal is not to reproduce logs verbatim, but to generate realistic, semantically correct logs that would accurately trigger detections, mirroring real attacker behavior.
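To make the input and output shapes concrete, here is a minimal sketch of how a “TTP + Action” input and a structured log record could be represented. The class names, field names, and the placeholder event values (including the `cmd.exe` parent) are assumptions chosen for illustration; they are not the schema used in this work and not real telemetry.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class AttackStep:
    """Hypothetical container for one "TTP + Action" input."""
    tactic: str
    technique_id: str
    technique_name: str
    action: str


@dataclass(frozen=True)
class ProcessEvent:
    """Hypothetical output record with the fields named in the text."""
    process_name: str
    command_line: str
    parent_process_name: str


step = AttackStep(
    tactic="Stealth",
    technique_id="T1202",
    technique_name="Indirect Command Execution",
    action="Executed forfiles with obfuscated arguments to run a Python interpreter.",
)

# Placeholder values only: a generator would populate these from the step.
event = ProcessEvent(
    process_name="forfiles.exe",
    command_line="forfiles.exe <obfuscated arguments>",
    parent_process_name="cmd.exe",  # assumed parent, for illustration
)

# Flatten to a plain dict, for example to serialize as one JSON log line.
record = asdict(event)
```

Keeping the input and output as separate typed records makes it easy to check that every generated event carries the fields a detection would query.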

Approaches for Synthetic Attack Log Generation

We explore three increasingly sophisticated techniques for generating logs.

- Prompt-Engineered Generation: Our baseline approach uses a series of carefully designed, expert-crafted prompts. The workflow comprises a structured, multi-stage dialogue:

  - Prompting: The model is given a detailed attack scenario and context.

  - Iterative Generation: Logs are generated across multiple turns to maintain coherence.

  - Evaluation: An independent large language model (LLM)-as-a-Judge assesses realism and consistency.

As depicted in the following image, the prompts explicitly instruct the model to reason like a cybersecurity researcher, leverage MITRE ATT&CK knowledge, and produce coherent attack narratives.

Figure: Prompt-engineered workflow covering prompting, iterative generation of logs, and LLM-as-a-Judge evaluation.
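The multi-turn dialogue can be sketched as a loop that keeps the full conversation history, so each new turn sees the logs generated so far. This is a rough illustration under assumptions: `generate_in_turns`, the message format, and the `echo_chat` stub are hypothetical, and the stub stands in for a real model call.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


def generate_in_turns(
    scenario: str,
    stages: List[str],
    chat: Callable[[List[Message]], str],
) -> List[str]:
    """Generate logs stage by stage while preserving conversation history."""
    messages: List[Message] = [
        {"role": "system",
         "content": "Reason like a cybersecurity researcher and use MITRE ATT&CK knowledge."},
        {"role": "user", "content": f"Attack scenario: {scenario}"},
    ]
    outputs: List[str] = []
    for stage in stages:
        messages.append({"role": "user",
                         "content": f"Generate logs for this stage: {stage}"})
        reply = chat(messages)  # the model sees all earlier turns
        messages.append({"role": "assistant", "content": reply})
        outputs.append(reply)
    return outputs


def echo_chat(messages: List[Message]) -> str:
    """Stub model: reports which turn it is answering."""
    turn = sum(m["role"] == "assistant" for m in messages) + 1
    return f"<logs for turn {turn}>"


turn_outputs = generate_in_turns(
    "T1202 indirect command execution", ["initial execution", "payload launch"], echo_chat
)
```

Carrying the history forward is what lets later turns stay consistent with earlier ones, for example by reusing the same process names.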

- Agentic Workflow-based Generation: While the first approach works well in simpler cases, it struggles with complex, multi-stage scenarios. To address these limitations, we introduced an agentic workflow using three specialized agents focused on different tasks:

  - Generator Agent: Produces an initial set of logs based on the input.

  - Evaluator Agent: Reviews logs and provides structured feedback.

  - Improver Agent: Suggests targeted refinements based on feedback.

As depicted in the image below, these agents collaborate in an iterative loop (generate, evaluate, improve), allowing the system to correct errors, fill gaps, and refine details over multiple turns. This collaborative process significantly improves log completeness and fidelity, especially for complex attack chains.

Figure: Generator, Evaluator, and Improver agents collaborate to produce synthetic telemetry logs.
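The generate, evaluate, improve loop can be sketched as follows. This is a simplified illustration, not the implementation used in this work: the three agents are passed in as plain callables, the stub agents replace LLM calls with trivial field checks, and `max_rounds` and `REQUIRED_FIELDS` are assumed names.

```python
from typing import Callable, Dict, List

Logs = List[Dict[str, str]]
REQUIRED_FIELDS = ("process_name", "command_line", "parent_process_name")


def refine_logs(
    scenario: str,
    generate: Callable[[str], Logs],
    evaluate: Callable[[str, Logs], List[str]],
    improve: Callable[[str, Logs, List[str]], Logs],
    max_rounds: int = 3,
) -> Logs:
    """Run generate once, then alternate evaluate and improve until clean."""
    logs = generate(scenario)
    for _ in range(max_rounds):
        feedback = evaluate(scenario, logs)
        if not feedback:  # evaluator has nothing left to flag
            break
        logs = improve(scenario, logs, feedback)
    return logs


# Stub agents: real agents would be LLM calls with task-specific prompts.
def stub_generate(scenario: str) -> Logs:
    return [{"process_name": "forfiles.exe", "command_line": "forfiles.exe <args>"}]


def stub_evaluate(scenario: str, logs: Logs) -> List[str]:
    return [f"event {i}: missing {field}"
            for i, event in enumerate(logs)
            for field in REQUIRED_FIELDS if field not in event]


def stub_improve(scenario: str, logs: Logs, feedback: List[str]) -> Logs:
    return [{field: event.get(field, "<unknown>") for field in REQUIRED_FIELDS}
            for event in logs]


final_logs = refine_logs("T1202 via forfiles", stub_generate, stub_evaluate, stub_improve)
```

Bounding the loop with `max_rounds` keeps the workflow from cycling indefinitely when the evaluator and improver disagree.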

- Multi-Turn Reinforcement Learning with Verifiable Rewards: While the synthetic logs generated by the agentic workflow are often semantically correct, preserving key properties like parent-child process relationships and event ordering, they still differ noticeably from real event logs, especially in process paths, command-line arguments, and service names. This limits how useful these logs are for testing detection efficacy, because effective detection engineering requires reliably distinguishing benign activity from malicious behavior.

To address this challenge, we conducted experiments using Reinforcement Learning with Verifiable Rewards (RLVR). Instead of the rigid rewards used by the evaluator agent in the agentic workflow, we use partial rewards to learn the policies, as follows:

- We use an LLM-as-a-Judge to compare the synthesized data against ground-truth logs.

- The judge awards partial rewards based on semantic alignment and imposes a penalty if the generated string is not an exact match of the ground-truth logs, producing a more context-aware and flexible reward signal to guide the learning process.

- The judge also produces its reasoning, making evaluations transparent and auditable.

Figure: An LLM-as-a-Judge compares generated logs against ground truth, issuing rewards or penalties to drive policy updates.
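One way to picture the partial reward is as a semantic score reduced by a fixed penalty whenever the generated string is not an exact match. The sketch below is an assumption-laden illustration: the penalty value, the function names, and the token-overlap score (a crude stand-in for the LLM-as-a-Judge's semantic assessment) are all hypothetical.

```python
def token_overlap(generated: str, ground_truth: str) -> float:
    """Jaccard overlap of whitespace tokens; a stand-in for the judge's score."""
    a, b = set(generated.lower().split()), set(ground_truth.lower().split())
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def partial_reward(
    generated: str,
    ground_truth: str,
    semantic_score: float,
    mismatch_penalty: float = 0.25,  # assumed value, for illustration only
) -> float:
    """Partial credit for semantic alignment, minus a penalty if not verbatim."""
    if not 0.0 <= semantic_score <= 1.0:
        raise ValueError("semantic_score must be in [0, 1]")
    if generated == ground_truth:
        return semantic_score
    return semantic_score - mismatch_penalty


generated = "forfiles.exe /p C:"
truth = "forfiles.exe /p D:"
reward = partial_reward(generated, truth, token_overlap(generated, truth))
```

Separating the semantic score from the exact-match penalty lets a nearly correct log still earn credit while keeping a gradient toward verbatim alignment.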

While this direction of research shows a lot of promise, it is heavily dependent on the amount of labeled training data. To address this limitation, we applied data augmentations, including:

- Paraphrasing attack narratives while preserving technical intent

- Perturbing parameters (for example, replacing executable names with plausible alternatives or re-ordering flags)

This allowed us to scale from hundreds to thousands of training examples.
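The parameter-perturbation step can be illustrated with a small helper that swaps an executable name and shuffles flags. This is a simplified sketch under assumptions: `perturb_command`, the `alternatives` mapping, and the example command are hypothetical, and the helper treats every whitespace-separated token after the executable as an independent, order-insensitive flag, which does not hold for flags that take separate values.

```python
import random
from typing import Dict, List


def perturb_command(
    command: str,
    alternatives: Dict[str, List[str]],
    rng: random.Random,
) -> str:
    """Swap the executable for a plausible alternative and reorder flags."""
    tokens = command.split()
    if not tokens:
        return command
    executable, flags = tokens[0], tokens[1:]
    # Fall back to the original name when no alternatives are listed.
    executable = rng.choice(alternatives.get(executable, [executable]))
    rng.shuffle(flags)  # in-place, reproducible for a seeded rng
    return " ".join([executable, *flags])


rng = random.Random(0)  # seeded so augmented datasets are reproducible
variant = perturb_command(
    "tool.exe /a /b /c",
    {"tool.exe": ["tool.exe", "tool64.exe"]},
    rng,
)
```

Applying several seeded perturbations to each labeled example is one way to grow a dataset while keeping the underlying technique unchanged.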

Evaluation Datasets

To ensure our approach generalizes across environments and attack types, we evaluated it on three complementary datasets:

- Goal‑Driven (GD) Campaigns: These are tightly scoped datasets produced by repeatable attack simulations conducted by our threat researchers. GDs are built around a specific security objective (e.g., detecting credential dumping on Windows servers). They provide clean ground truth and well‑defined attacker actions. We used a total of 10 different GD executions to evaluate our approaches.

- Security Datasets Project: An open‑source initiative [2] that provides malicious and benign datasets from multiple platforms, enabling broader evaluation and generalizability across different environments.

- ATLASv2 Dataset: The ATLASv2 dataset [3] comprises Windows Security Auditing logs, Sysmon logs, Firefox logs, and Domain Name System (DNS) telemetry. These logs are generated across two Windows VMs by executing 10 multi-stage attack scenarios and introducing realistic noise and cross-host behaviors. We limited the evaluation of synthetic attack logs to malicious activity during the attack windows.

Note: The external datasets from the Security Datasets Project and ATLASv2 are used strictly for research and validation of our log generation methods. These datasets are not used in the development, training, or deployment of any commercial products.

Evaluation

Methodology: We evaluated the prompt-engineering and agentic-workflow approaches on the three datasets across multiple reasoning and non-reasoning models, using recall as our primary metric. Recall measures the model’s ability to generate the semantically relevant log instances (true positives) expected for a given attack scenario. Our LLM-as-a-Judge performs flexible matching rather than requiring exact string equality.

For example, a synthetic log containing “forfiles.exe” can successfully match a ground-truth entry with the full path “D:\Windows\System32\forfiles.exe”.
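Recall with flexible matching can be sketched as the fraction of ground-truth entries that at least one generated entry matches. In this illustration the matcher is a simple case-insensitive file-name comparison standing in for the LLM-as-a-Judge, and the function names and the second ground-truth path are assumptions added for the example.

```python
import ntpath
from typing import Callable, List


def same_executable(generated: str, expected: str) -> bool:
    """Match on the file name only, ignoring directory and letter case."""
    return ntpath.basename(generated).lower() == ntpath.basename(expected).lower()


def recall(
    generated: List[str],
    ground_truth: List[str],
    matches: Callable[[str, str], bool] = same_executable,
) -> float:
    """Fraction of ground-truth entries covered by some generated entry."""
    if not ground_truth:
        return 0.0
    hits = sum(
        1 for expected in ground_truth
        if any(matches(candidate, expected) for candidate in generated)
    )
    return hits / len(ground_truth)


ground_truth = [
    r"D:\Windows\System32\forfiles.exe",
    r"D:\Windows\System32\cmd.exe",  # hypothetical second entry
]
generated = ["forfiles.exe"]
score = recall(generated, ground_truth)  # one of two entries is covered
```

`ntpath` is used so Windows-style paths are split correctly regardless of the operating system running the evaluation.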

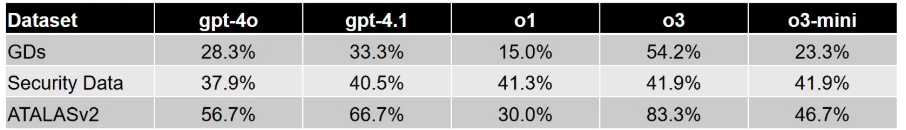

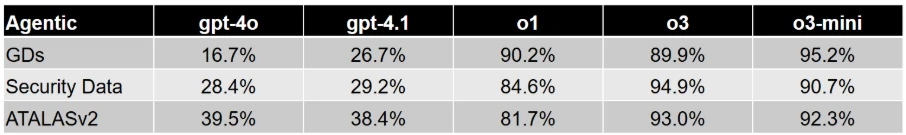

Key Results: Our experimental results show that prompt-only approaches establish a baseline but perform inconsistently. The agentic workflows deliver substantially higher recall across all datasets. Reasoning models, combined with agentic refinement, achieve the highest fidelity.

Finally, our reinforcement learning experiments indicate that, while the approach shows significant promise, a substantial amount of labeled data will be required for the agent to learn effective policies that make the synthetic data match real logs verbatim.

Table 1 and Table 2 report the performance of the prompt-based and agentic workflow-based approaches, respectively. For reasoning models (o1, o3, and o3-mini), we report the recall values using a Medium reasoning effort. Overall, agentic collaboration emerges as the most effective technique for high-quality synthetic attack log generation.

Across the evaluation datasets we used, AI‑driven synthetic log generation shows strong potential to produce semantically meaningful logs from TTPs and attacker actions. It can capture multi‑event sequences, preserve parent‑child process relationships, and generate realistic command lines.

This capability can accelerate detection engineering by reducing dependence on costly lab setups and enabling rapid experimentation, without sacrificing realism or safety. Our early experiments with reinforcement learning with verifiable rewards also look promising and could improve verbatim alignment when sufficient training data is available.

References

- [3] ATLASv2: ATLAS Attack Engagements, Version 2. arXiv:2401.01341

This research is provided by Microsoft Defender Security Research with contributions from Raghav Batta and members of Microsoft Threat Intelligence.
