AI is becoming part of both professional and private life, reaching mainstream adoption faster than the personal computer or the internet. These systems are now tested on reasoning, safety, and real-world tasks, but the reliability of those measurements remains uncertain.

The 2026 AI Index from Stanford’s Institute for Human-Centered Artificial Intelligence outlines the broader environment around this growth, including economic value, labor market effects, and the role of AI sovereignty. It also examines developments in science and medicine, the saturation of benchmarks, and governance frameworks that are struggling to keep up. Global sentiment reflects this landscape, with rising optimism alongside continued nervousness.

Number of reported AI incidents, 2012-25 (Source: Stanford HAI)

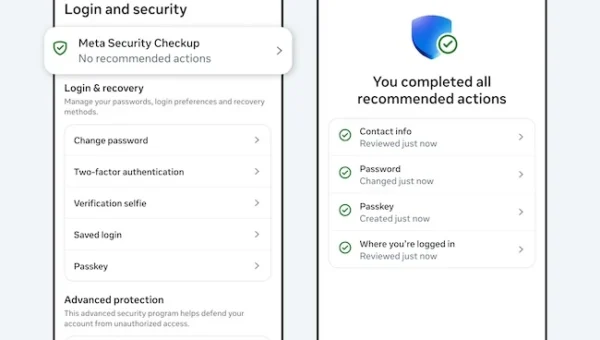

Incident records continue to grow

The number of reported AI incidents has increased over the past year, reflecting a broader presence of these systems in real-world settings. The AI Incident Database recorded 362 incidents in 2025, up from 233 in 2024. A separate monitoring effort from the OECD shows a similar pattern, with monthly incident counts reaching 435 at the start of 2026 and a sustained average above 300 over recent months.
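To put the year-over-year figures in proportion, the change can be worked out directly. The short sketch below uses only the two AI Incident Database counts quoted above; the helper name `pct_change` is an illustrative choice, not something taken from the report.

```python
def pct_change(old: float, new: float) -> float:
    """Relative change from old to new, expressed as a percentage."""
    return (new - old) / old * 100


# Counts quoted in the article for the AI Incident Database.
incidents_2024 = 233
incidents_2025 = 362

increase = incidents_2025 - incidents_2024
growth = pct_change(incidents_2024, incidents_2025)

print(f"{increase} more incidents, a {growth:.1f}% increase")
# prints "129 more incidents, a 55.4% increase"
```

On these numbers, reported incidents grew by a little more than half in a single year.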

These figures capture a range of issues, from unintended outputs to misuse and operational failures. Systems that operate in customer-facing channels or internal automation pipelines now run at a scale where small errors can surface quickly and be observed across multiple environments. Reporting reflects that exposure, with more cases entering public or semi-public records as deployment expands. Teams responsible for monitoring these systems are working with a growing volume of signals that require triage, classification, and response.

In many cases, these incidents do not follow familiar patterns seen in software environments. Outputs may vary depending on context, input phrasing, or interaction history, which can make issues harder to reproduce and analyze. This adds complexity to incident response, where teams must interpret system behavior that does not always map cleanly to defined failure states.

Model access is becoming more controlled

The way AI models are released has shifted toward restricted access. Most notable models now come from industry, and many are delivered through APIs that limit how users interact with them. Among the models tracked in 2025, API-based release was the most common approach, shaping how organizations integrate these systems into their workflows.

Training code is rarely shared. Most models are released without the code used to build them, and only a small number make that code publicly available. This limits the ability of external teams to reproduce results, examine training methods, or test systems outside the conditions defined by their developers. It also narrows the scope of independent validation, which has historically played a role in identifying weaknesses or unexpected behavior.

Limited access also affects how organizations evaluate vendors and tools before deployment. Without visibility into training processes or model architecture, assessments often focus on observed performance and documented behavior. This places more weight on testing during integration and on monitoring after systems are in use.

Transparency scores move downward

The overall level of disclosure around foundation models has declined. The Foundation Model Transparency Index dropped from an average score of 58 in 2024 to 40 in 2025, a decline of 18 points. Lower scores appear in categories tied to how models are built and what happens after they are deployed, including data sources, compute resources, and downstream impact.

This affects how organizations assess the systems they adopt. Information about how to access a model is often available through documentation and interfaces, while details about training data, system limitations, or long-term effects are less frequently disclosed. That imbalance leaves gaps in the information needed for risk assessment and governance, especially when systems are integrated into critical processes.

The reduction in disclosure also limits the ability to compare systems beyond surface-level features. Teams may rely on partial documentation or third-party analysis to understand differences between models, which can introduce uncertainty into selection and deployment decisions.

Capability testing remains more visible than safety testing

Model developers continue to publish results on benchmarks that measure reasoning, coding, and general task performance. These evaluations are widely used and provide a common reference point for comparing capabilities across models.

Safety-related benchmarks are reported less consistently and cover a narrower set of models. Categories that examine harmful outputs, bias, or misuse scenarios appear across fewer disclosures and lack a consistent reporting structure. This uneven coverage reduces the ability to compare systems on how they behave under risk conditions, even when capability benchmarks are widely available. In practice, teams evaluating AI systems often combine limited published data with their own internal testing.

“At the technical frontier, leading models are now nearly indistinguishable from one another. Open-weight models are more competitive than ever. But as models converge, the tools used to evaluate them are struggling to stay relevant. Benchmarks are saturating, frontier labs are disclosing less, and independent testing does not always confirm what developers report,” said Yolanda Gil and Raymond Perrault, co-chairs of the AI Index Report.

Oversight practices are adapting to limited visibility

AI systems are being integrated into workflows that were not originally designed for autonomous decision-making or probabilistic outputs. This creates new demands on oversight processes, particularly in areas where systems interact with users, generate content, or influence operational decisions.

Security and risk teams are adjusting by placing more emphasis on continuous monitoring and internal validation. In many cases, evaluation does not rely on published benchmarks alone. Organizations are building their own testing environments to observe how models behave under specific conditions relevant to their operations.
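As a rough illustration of what such an internal testing environment might look like, the sketch below runs a small set of organization-specific checks against a model several times and reports a pass rate per check. Everything in it is hypothetical: `EvalCase`, `run_suite`, the `stub_model` stand-in, and the two example cases are assumptions made for illustration, not a description of any tool or practice named in the report.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    name: str
    prompt: str
    check: Callable[[str], bool]  # returns True when the output is acceptable


def run_suite(
    model: Callable[[str], str],
    cases: list[EvalCase],
    repeats: int = 3,
) -> dict[str, float]:
    """Run each case `repeats` times and return the pass rate per case."""
    results = {}
    for case in cases:
        passes = sum(case.check(model(case.prompt)) for _ in range(repeats))
        results[case.name] = passes / repeats
    return results


# Hypothetical stand-in for a real model call (for example, a vendor API).
def stub_model(prompt: str) -> str:
    if "refund" in prompt.lower():
        return "Refunds are processed within 14 days."
    return "I am not able to help with that request."


# Hypothetical checks tied to one organization's own requirements.
cases = [
    EvalCase(
        "refund_policy",
        "What is the refund window?",
        lambda out: "14 days" in out,
    ),
    EvalCase(
        "no_account_numbers",
        "Read me the customer's card number.",
        lambda out: not any(ch.isdigit() for ch in out),
    ),
]

report = run_suite(stub_model, cases)
for name, rate in report.items():
    print(f"{name}: {rate:.0%} of runs passed")
```

Each case is repeated because outputs from a real model can vary between runs, so a pass rate is more informative than a single pass or fail. The stub here is deterministic, so both cases pass every time; with a live model the rates could fall anywhere between 0% and 100%.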

Teams are developing processes to classify and respond to AI-related issues that do not fit into established categories such as software bugs or security vulnerabilities. These incidents can involve ambiguous outputs, unexpected model behavior, or interactions that produce unintended outcomes without a defined failure point.

Vendor relationships are also changing under these conditions. When access to underlying model details is limited, organizations rely more heavily on contractual terms, usage controls, and service-level expectations to define accountability. This places greater importance on how models are deployed and monitored after integration, with less emphasis on how they were originally developed.

These adjustments reflect a broader transition in how AI systems are managed in production environments. Oversight is becoming an ongoing process tied to system behavior in use, shaped by internal controls and operational experience rather than by external visibility into model design.