Google researchers have discovered the first evidence of hackers using AI to develop zero-day exploits, autonomous Android backdoors, and automated supply chain attacks against GitHub and PyPI.

Hackers have long used AI models to create phishing pages and identify security vulnerabilities. But according to a new report released today by Google Threat Intelligence Group (GTIG), attackers are now also using artificial intelligence to develop zero-day exploits.

Identifying AI Clues in Malware

GTIG researchers identified an attack in which the attackers used a Python script to bypass two-factor authentication (2FA) on a web-based administration tool. The researchers were surprised to find that the script exploited a zero-day vulnerability. Although use of Claude Mythos was suspected, the team says this is unlikely.

“For the first time, GTIG has identified a threat actor using a zero-day exploit that we believe was developed with AI.”

Further investigation revealed that the code bore clear signs of being machine-generated. Human-written code usually reflects its author's habits, but these scripts had “an abundance of educational docstrings” and even a “hallucinated but non-existent CVSS score.”
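To make the docstring signal concrete, here is a minimal sketch of how an analyst might measure docstring density in a Python sample. This is not GTIG's method; the function name `docstring_ratio` and the sample source are hypothetical, and a high ratio is at best a weak hint, since plenty of human-written code is thoroughly documented.

```python
import ast

def docstring_ratio(source: str) -> float:
    """Return the share of documentable nodes (the module itself plus every
    function and class) that carry a docstring. Hypothetical helper."""
    tree = ast.parse(source)
    nodes = [tree] + [
        node for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef))
    ]
    documented = sum(1 for node in nodes if ast.get_docstring(node) is not None)
    # nodes always contains the module, so the division is safe.
    return documented / len(nodes)

# Hypothetical sample: a documented module, one documented and one bare function.
SAMPLE = '''
"""Educational module docstring."""

def a():
    """Explain step one in detail."""
    return 1

def b():
    return 2
'''

print(f"{docstring_ratio(SAMPLE):.2f}")  # prints 0.67
```

The source is only parsed, never executed, which matters when the input is a suspected malware sample. In practice such a ratio would be one feature among many rather than a verdict on its own.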

Researchers noted in the blog post that groups from the People’s Republic of China (PRC) and the Democratic People’s Republic of Korea (DPRK) are leading this experimentation. Groups such as APT45 and UNC2814 use AI to scan for flaws, drawing on resources like ‘wooyun-legacy,’ a collection of 85,000 old security cases, to train AI models to think like expert auditors.

Autonomous Agents

Hackers are also using LLMs for target scouting to improve phishing lures. They prompt models to map out company hierarchies or identify specific hardware used by a target. This ‘environmental fingerprinting’ helps them customize their attacks.

Researchers also found a growing preference for ‘agentic workflows,’ in which tools like Hexstrike and Strix are used to execute multi-stage tasks. For example, a PRC-nexus actor used these tools alongside the Graphiti memory system to attack a Japanese technology firm.

Supply Chain Threats and Deepfakes

In early February 2026, the PROMPTSPY Android backdoor appeared. It uses a ‘GeminiAutomationAgent’ to watch phone screens and click buttons. By late March 2026, a group called TeamPCP (aka UNC6780) attacked the software supply chain by injecting malicious code into tools like LiteLLM and Checkmarx. Using the SANDCLOCK credential stealer, they stole AWS keys and GitHub tokens for extortion.

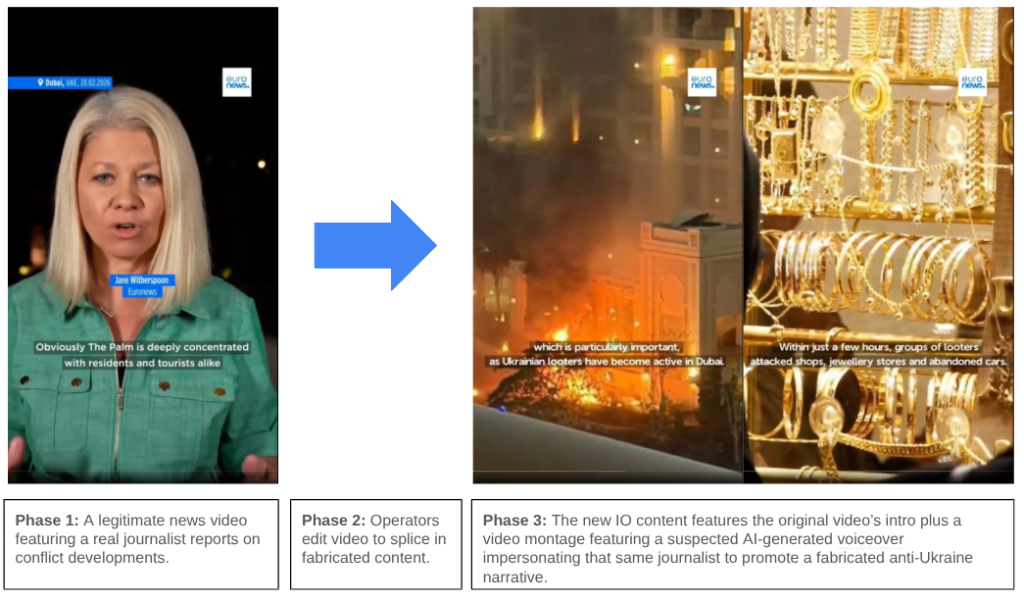

Finally, researchers noted that AI is being used in information operations. A pro-Russia campaign called Operation Overload used AI voice cloning to impersonate journalists in fake videos. While these tactics are evolving, Google is using tools like Big Sleep and CodeMender to find and fix software vulnerabilities automatically.