- Trust in identities: When “real” isn’t real anymore

- Trust in information sources: When answers become the attack

- Trust in third parties: Phishing infrastructure hiding in plain sight

- Trust in everyday workflows: When normal behavior is the target

- Prevention alone doesn't cut it

- The shift: Resilience over assumption

- See it in action

We’ve spent years treating prevention as the endgame: block the attack, and the problem disappears. But that model is starting to break.

The environment it was built for no longer exists. Attackers aren’t just finding ways around security controls. They’re running social engineering scams inside them, turning the same tools, workflows, and signals we’re supposed to trust against us.

And while attackers have adapted quickly, many security programs haven’t kept pace. It’s showing up in the data. In a recent report, only 8.9% of teams named phishing and social engineering as their biggest preparedness gap, which means most feel covered. That confidence is the gap. The threat has expanded into identity abuse, trusted platforms, and the everyday workflows teams rely on, well beyond what most security programs were built to see.

Most teams also reported having adequate budgets and mature tooling. So why do positive outcomes still lag while confidence slips?

Teams aren’t behind because they don’t care or don’t work hard. They’re behind because attackers are targeting trust at an unprecedented scale and scope. That targeting reaches every aspect of your digital world: identities, AI platforms, developer platforms, business software, and the workflows that keep organizations running. Attackers are operating in a way that makes social engineering compromise inevitable, not preventable.

Resilient teams are recognizing this shift and taking steps toward a security model built for today’s threat landscape.

Trust in identities: When “real” isn’t real anymore

The definition of a “trusted identity” is getting harder to pin down.

Deepfakes push attacks well beyond email. Attackers are using AI to impersonate executives, IT staff, and even job candidates. They build rapport over time with cloned voices, then add video to lend credibility to requests that would otherwise raise red flags.

That doesn’t mean every organization is suddenly facing Hollywood-grade live deepfake calls every day. But it does mean the old assumption that seeing or hearing someone adds assurance no longer holds. The Tradecraft Tuesday episode “AI: Friend or Foe in Cybersecurity” made that point directly: identity itself isn’t a trustworthy signal anymore.

That aligns with what the surveyed teams are experiencing. Identity-based attacks are the area organizations feel least prepared to defend against, cited by 26.5% of respondents, and 32% lack Identity Threat Detection and Response (ITDR) to protect this increasingly vulnerable attack surface.

Resilient teams are building security programs that stretch beyond user authentication to the behavioral signals that appear at the earliest stages of identity compromise, before a crisis emerges.

Identity used to be the perimeter. Now it’s the lure.

Trust in information sources: When answers become the attack

Attackers aren’t just impersonating people. They’re manipulating the information we use to make decisions and the workflows we trust to deliver it.

Search engines and AI platforms have become our go-to starting point for problem-solving. Search, scan the top result, follow the instructions, move on. Problem solved! It’s fast and reliable. And it’s exactly the pattern attackers are designing around.

In one case that hit close to home, a Huntress engineer searched for a Claude installer, clicked the top result, and downloaded malware. Real search engine. Real-looking result. Normal workflow. No obvious red flags, and that’s the point.

In another case, macOS users searching for routine fixes were directed to ChatGPT or Grok pages that looked exactly like legitimate support content. The moment they followed the instructions, they executed malicious commands that installed the AMOS infostealer malware.

Figure 1: Malicious macOS “routine fix” instructions that make the attack look like normal troubleshooting.

The failure isn’t carelessness. It’s that nothing about these interactions looks wrong until it’s too late. Users followed a normal workflow. Attackers designed the attack to fit inside it.

That creates a second problem for security teams. By the time something surfaces as actionable, the attacker is already in. Nearly two-thirds of teams surveyed report that at least 25% of their alerts are noise. While security teams are sifting through that queue, attackers have already reduced the steps and time required to establish access. The gap between when compromise happens and when a team can respond keeps widening. Teams are paying attention. Attackers have just made it a lot harder to find anything worth acting on.

Eric Stride, Chief Security Officer at Huntress, says:

“Most teams think resilience comes from seeing more. In reality, it comes from knowing what matters and acting quickly when it does.”

Resilient teams treat that as an operational mandate, not a principle. They cut the alert queue, assign clear ownership, and measure speed from detection to action. Because faster clarity on what’s real beats broader coverage of what might be.
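One way to make “measure speed from detection to action” concrete is to compute it directly from alert records. The sketch below is a minimal, hypothetical example: the `Alert` record and its field names are assumptions for illustration, not any product’s schema. It reports the median minutes from detection to first action and lists alerts that nobody owns.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Alert:
    # Hypothetical alert record; field names are illustrative only.
    alert_id: str
    detected_at: datetime
    actioned_at: Optional[datetime]  # None if nobody has acted yet
    owner: Optional[str]             # None if no one is assigned


def time_to_action_minutes(alerts: list[Alert]) -> list[float]:
    """Minutes from detection to first action, for alerts that were actioned."""
    return [
        (a.actioned_at - a.detected_at).total_seconds() / 60
        for a in alerts
        if a.actioned_at is not None
    ]


def unowned(alerts: list[Alert]) -> list[str]:
    """IDs of alerts with no assigned owner."""
    return [a.alert_id for a in alerts if a.owner is None]


if __name__ == "__main__":
    t0 = datetime(2025, 1, 7, 9, 0)
    alerts = [
        Alert("A-1", t0, t0 + timedelta(minutes=12), "soc-oncall"),
        Alert("A-2", t0, t0 + timedelta(minutes=45), "identity-team"),
        Alert("A-3", t0, None, None),
    ]
    durations = time_to_action_minutes(alerts)
    print(f"median time to action: {median(durations):.1f} min")  # 28.5
    print("unowned alerts:", unowned(alerts))  # ['A-3']
```

Reporting unowned alerts next to the median is a deliberate pairing: an alert with no owner never shows up in the time-to-action numbers at all, which is exactly where response time quietly grows.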

But manipulating what people see is only part of the picture. Attackers are also manipulating the infrastructure that those signals travel through.

Trust in third parties: Phishing infrastructure hiding in plain sight

Attackers don’t just abuse people. They abuse the platforms we trust at scale.

The Railway campaign is the clearest recent example. A productized phishing-as-a-service (PhaaS) operation called EvilTokens weaponized Railway, a legitimate cloud deployment platform, to stand up credential-harvesting infrastructure on demand. More than 340 organizations across the US, Canada, Australia, New Zealand, and Germany were hit. The attack chain ran through legitimate Railway-hosted infrastructure, Cloudflare Workers pages, compromised websites, and trusted URL redirectors at machine speed.

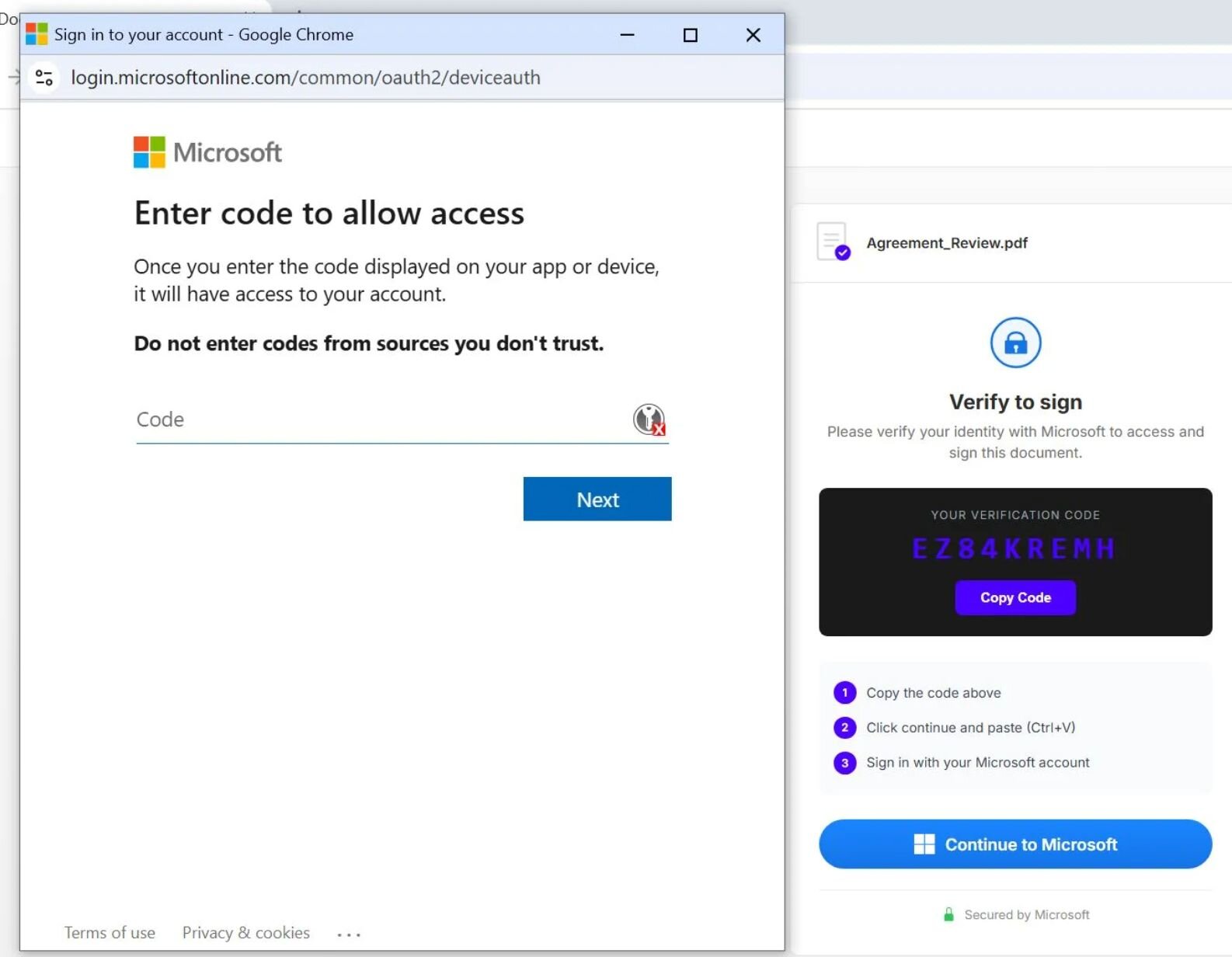

This wasn’t a typical fake login page. EvilTokens abused a legitimate Microsoft OAuth authentication flow, the device code flow (hence “device code phishing”), to trick victims into handing over persistent session tokens. The victim received a real Microsoft URL, completed what looked like a normal security prompt, and authenticated the attacker’s session without knowing it. No password stolen. MFA bypassed completely. The token granted access to email, Teams, SharePoint, and OneDrive, and stayed valid even after a password reset.

Figure 2: Example of device code phishing in the Railway campaign.

Every piece of the attack looks legitimate until it isn’t.

Worse, defenders can’t easily block this campaign by domain or lure type: the infrastructure is legitimate, often needed for business operations, and it shifts fast. Instead, you have to be prepared to block it at the identity and behavior layer, where the abuse is still visible even when everything else looks clean. That’s the bigger shift: trusted third-party services now give attackers cover, speed, and scale at the same time.
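Detecting at the identity and behavior layer can start with a simple question: is this account doing something it has never done before? The sketch below assumes sign-in events have already been exported as simplified records; the `SignIn` type, the `"device_code"` protocol label, and the allowlist are hypothetical and do not reflect any real log schema. It flags device code sign-ins from accounts with no history of using that flow, regardless of which domain or hosting platform delivered the lure.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class SignIn:
    # Hypothetical, simplified sign-in record; not a real log schema.
    user: str
    protocol: str  # illustrative values such as "password" or "device_code"
    ip: str
    at: datetime


def build_baseline(history: list[SignIn]) -> dict[str, set[str]]:
    """Map each user to the set of sign-in protocols they have used before."""
    seen: dict[str, set[str]] = defaultdict(set)
    for s in history:
        seen[s.user].add(s.protocol)
    return seen


def flag_unusual_device_code(
    new_events: list[SignIn],
    baseline: dict[str, set[str]],
    allowlist: set[str],
) -> list[SignIn]:
    """Flag device code sign-ins by users who never used that flow before.

    Accounts in `allowlist` (hypothetically, shared meeting-room devices
    that legitimately sign in this way) are skipped.
    """
    flagged = []
    for s in new_events:
        if s.protocol != "device_code" or s.user in allowlist:
            continue
        # .get() does not insert into the defaultdict, so unknown users
        # are treated as having no history at all.
        if "device_code" not in baseline.get(s.user, set()):
            flagged.append(s)
    return flagged


if __name__ == "__main__":
    history = [
        SignIn("alice", "password", "203.0.113.10", datetime(2025, 1, 6, 8, 55)),
        SignIn("room-4b", "device_code", "198.51.100.7", datetime(2025, 1, 6, 9, 0)),
    ]
    today = [
        SignIn("alice", "device_code", "192.0.2.44", datetime(2025, 1, 7, 10, 2)),
        SignIn("room-4b", "device_code", "198.51.100.7", datetime(2025, 1, 7, 9, 0)),
    ]
    hits = flag_unusual_device_code(today, build_baseline(history), {"room-4b"})
    for s in hits:
        print(f"review: {s.user} used device code flow from {s.ip}")
```

The rule keys on account behavior rather than on URLs or domains, so rotating the hosting infrastructure behind the lure does not change whether it fires.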

Keeping up requires a response system that surfaces the right signals, makes ownership clear, tracks identity behavior beyond authentication, and uses automation to reduce noise so teams can limit impact and recover quickly. Stride puts it this way:

“The goal isn’t to eliminate every risk. It’s to build a system your team trusts when something goes wrong.”

And if trusted infrastructure gives attackers cover, trusted routine gives them time.

Trust in everyday workflows: When normal behavior is the target

Attackers know exactly what you rely on to keep your day running smoothly. Calendar invites, automated emails, shared design tools, SaaS integrations. You’re moving through all of it, all day long.

They know you trust a calendar invite from HR, a routine notification, or a link embedded in a familiar workflow. These attacks slip through because they follow the rules of your environment. They don’t trigger obvious controls. They don’t look out of place. They look like work. Sublime Security called out this exact pattern in “Trends to watch in 2026: Calendar phishing and opportunistic service abuse,” featuring John Hammond, Senior Principal Security Researcher at Huntress.

And when alerts pile up and ownership is unclear, response times slow to a crawl, giving attackers the one thing they really need: time. Anna Pham, Senior Tactical Response Analyst at Huntress, says:

“When alerts pile up, response slows. And when response slows, even small mistakes turn into major incidents.”

Resilient teams are designed for this reality. They prioritize clear ownership, reduce cognitive load, and make sure that when something looks wrong, someone knows exactly what to do next. Because when phishing campaigns look exactly like Tuesday morning, detection isn’t just a controls problem. It’s an ownership problem.

Prevention alone doesn’t cut it

All of these tradecraft examples show that modern social engineering doesn’t force its way in. It blends in at every level of your attack surface.

Attackers don’t break the workflow. They use it. They don’t steal your credentials. They borrow your session. They don’t spoof the domain. They rent the legitimate one. They don’t invent a pretext. They let your calendar, your inbox, and your search results do it for them.

Prevention was built for a world where attacks were detectable: a shady link, an unfamiliar sender, a slightly-off domain. That world is smaller every day. When the attack arrives inside a trusted tool, a legitimate OAuth prompt, or a search result that looks identical to the real thing, the controls designed to catch it have already cleared it.

While your team sifts through an alert queue, attackers are already inside, moving laterally, exfiltrating data, positioning for extortion. The gap is a failure of the model, not the level of effort.

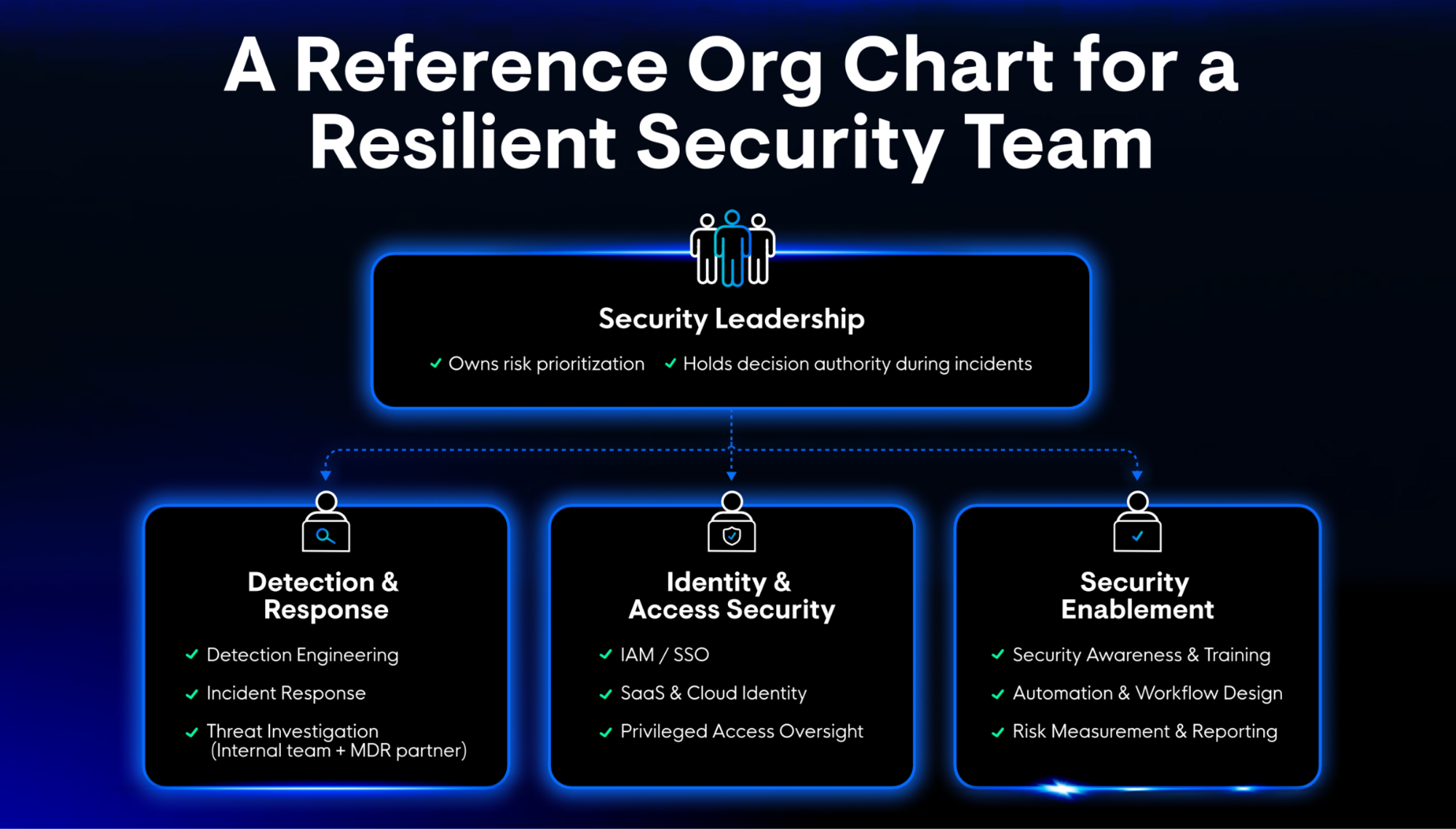

Resilient teams respond by redesigning the model itself around ownership, identity visibility, fast response, and operational clarity.

The shift: Resilience over assumption

The teams adapting fastest aren’t working harder. They’re making different decisions about what security is actually for.

- Speed over volume. The Railway/EvilTokens campaign issued valid session tokens that stayed active even after password resets. Limiting damage means acting on the right signals fast.

- Clarity over coverage. Device code phishing works because it blends into a flow that looks normal at every step. Catching it requires cross-tenant visibility into identity and session behavior.

- Behavior over static indicators. Resilient teams are building detection around what attackers do, not just what their infrastructure looks like.

- Ownership over ambiguity. When an alert fires and nobody knows who owns the response, even a small incident becomes a major one. Resilient teams have defined that clearly before something goes wrong. When it does, the answer to “who handles this?” isn’t just another Slack thread.

Gavin Hill, Vice President of Product Marketing at Huntress, says:

“Prevention isn’t realistic anymore. Resilience comes from breach mitigation—limiting damage and recovering quickly. That’s why piecemealing tools doesn’t work long-term. Teams need platforms that can correlate identity, endpoint, and user behavior to support a single response.”

Attackers are pressure testing your organization daily with social engineering tactics. Resilience isn’t a bonus. It’s the only strategy that survives contact with the reality of today’s threat landscape.

See it in action

Your profile is already being used as intel. In the next episode of _declassified on May 20, Huntress Principal Product Researcher Truman Kain and digital safety educator Caitlin Sarian (aka “Cybersecurity Girl”) will show you exactly how attackers turn your public information into an attack path. Learn how to make yourself a harder target. Register now.