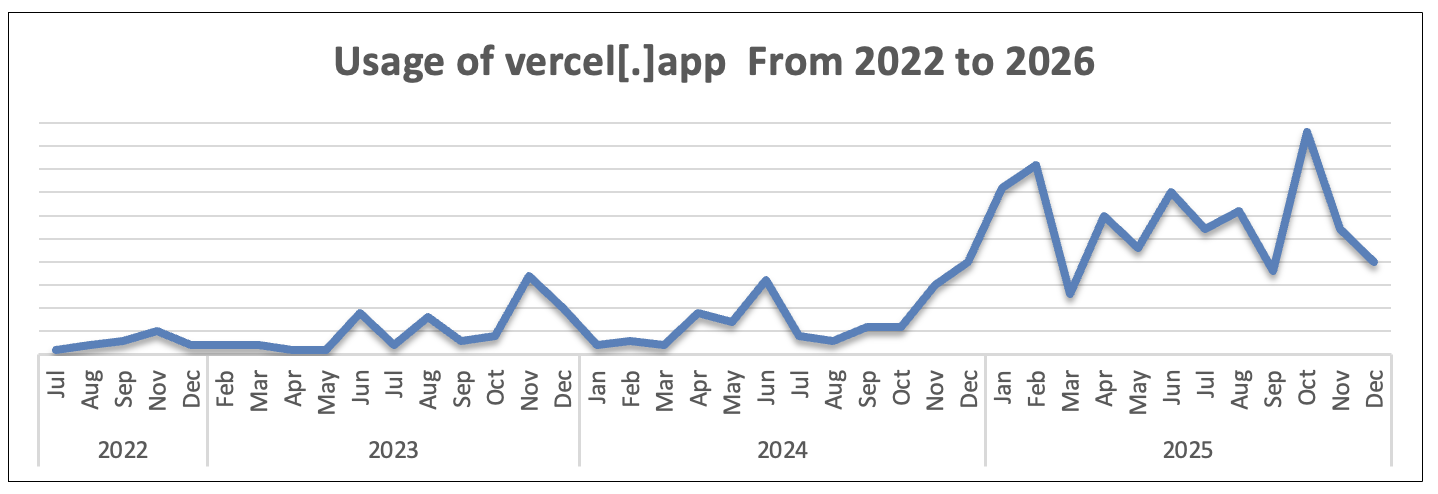

Threat actors are rapidly adopting generative AI platforms to scale phishing operations, and Vercel has emerged as a powerful enabler in this shift.

Vercel is a cloud-based platform designed to help developers build and deploy modern web applications quickly. Its GenAI-powered tool, v0[.]dev, allows users to generate fully functional websites using simple text prompts.

While intended for legitimate development, attackers are now abusing this capability to mass-produce phishing pages that closely replicate real login portals.

With just a few prompts, threat actors can generate spoofed sign-in pages for platforms like Microsoft, Spotify, or Facebook.

These pages not only look identical to legitimate sites but also replicate their functionality, making them far more convincing to victims.

Even individuals with minimal technical expertise can now create advanced phishing infrastructure, something that previously required significant skill.

Security researchers at Cofense report a surge in campaigns leveraging Vercel’s AI-driven development tools to create highly convincing phishing websites that mimic trusted brands with minimal effort.

Vercel’s free tier further lowers the barrier to entry. Although account creation is required for full functionality, the signup process is simple, and users receive token-based access to GenAI features.

These tokens act as currency for generating outputs, enabling attackers to iterate quickly and refine phishing pages with ease.

One of the key advantages for threat actors is Vercel’s hosting and deployment model. Unlike traditional phishing kits that require dedicated infrastructure, Vercel handles hosting in the cloud.

This means attackers can deploy phishing sites instantly and redeploy them just as quickly if they are taken down.

Each time a prompt is entered, the AI generates slightly different output. This variability helps attackers evade detection: each new phishing page differs from the previous one, yet the attacker never has to rewrite any code.

While Vercel is a leading platform in this space, other tools like DeepSite AI and BlackBox are also being explored by threat actors. However, Vercel’s combination of branding, hosting, and seamless integrations makes it particularly effective.

Essentially, Vercel compresses the entire phishing kit lifecycle (design, hosting, and deployment) into a single interface.

Vercel’s integration capabilities further enhance its appeal for malicious use. Threat actors are combining phishing pages with Telegram bots to automate credential theft.

When a victim enters login details, the data is instantly sent to an attacker-controlled Telegram bot via Telegram's Bot API.

This setup eliminates the need for attackers to manage backend infrastructure. Vercel’s serverless functions handle API routing, while Telegram provides real-time notifications.

Other integrations, including AWS, Stripe, and xAI, highlight how legitimate services can be chained together to support phishing operations.

Cofense Intelligence has documented multiple campaigns leveraging Vercel across different industries and attack themes. These include:

- Fake job recruitment campaigns impersonating brands like Adidas, Nike, and Ferrari.

- Spoofed Microsoft login pages used in credential harvesting attacks.

- Spotify-themed phishing pages that capture both login credentials and payment details.

In one case, attackers created a fake Adidas careers page that redirected victims to a fraudulent Facebook login screen.

Another campaign used Telegram integration to immediately capture stolen credentials, demonstrating how automation is becoming standard in phishing operations.

These campaigns often use familiar lures such as job offers or account alerts, increasing the likelihood of user interaction. The ability to replicate branding, combined with realistic workflows, makes detection significantly harder for both users and security tools.

The broader trend is clear: generative AI is transforming phishing from a technically demanding activity into an accessible, scalable operation.

Capabilities once limited to advanced threat actors are now available to anyone with basic knowledge and access to free tools.

As GenAI continues to evolve, security teams should expect phishing campaigns to become more sophisticated, personalized, and difficult to detect.
